Blog Posts

Example Case: Using DevSecOps to Redefine Minimum Viable Product

This SEI blog post, authored by SEI interns, describes their work on a microservices-based software application, an accompanying DevSecOps pipeline, and an expansion of the concept of minimum viable product …

• By Joe Yankel

In DevSecOps

Acquisition Archetypes Seen in the Wild, DevSecOps Edition: Clinging to the Old Ways

This SEI blog post draws on SEI experiences conducting independent technical assessments to examine problems common to disparate acquisition programs. It also provides recommendations for recovering from these problems and …

• By William E. Novak

In DevSecOps

Extending Agile and DevSecOps to Improve Efforts Tangential to Software Product Development

The modern software engineering practices of Agile and DevSecOps have revolutionized the practice of software engineering. This blog post explores use of these practices in capability delivery and business mission.

• By David Sweeney, Lyndsi A. Hughes

In DevSecOps

5 Challenges to Implementing DevSecOps and How to Overcome Them

The shift from project- to program-level thinking raises numerous challenges to DevSecOps implementation. This SEI Blog post articulates these challenges and ways to overcome them.

• By Joe Yankel, Hasan Yasar

In DevSecOps

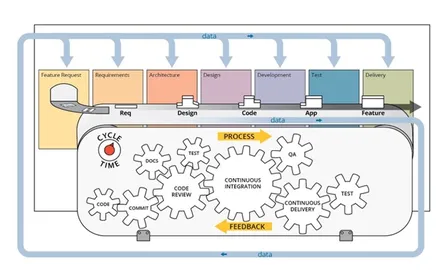

Actionable Data from the DevSecOps Pipeline

In this blog post, we explore decisions that program managers make and information they need to confidently make decisions with data from DevSecOps pipelines.

• By Bill Nichols, Julie B. Cohen

In DevSecOps

Writing Ansible Roles with Confidence

How do you write Ansible roles so that you can be confident they work as intended? This post provides guidance on how to best begin developing Ansible …

• By Matthew Heckathorn

In DevSecOps

A Technical DevSecOps Adoption Framework

This blog post describes our new DevSecOps adoption framework that guides the planning and implementation of a roadmap to functional CI/CD pipeline capabilities.

• By Vanessa B. Jackson, Lyndsi A. Hughes

In DevSecOps

Combining Security and Velocity in a Continuous-Integration Pipeline for Large Teams

This post explores how one team managed—and eventually resolved—the two competing forces of developer velocity and cybersecurity enforcement by implementing DevSecOps practices.

• By Alejandro Gomez

In DevSecOps

Modeling DevSecOps to Protect the Pipeline

This blog post presents a DevSecOps Platform-Independent Model that uses model-based systems engineering constructs to formalize the practices of DevSecOps pipelines and organize guidance.

• By Timothy A. Chick, Joe Yankel

In DevSecOps

From Model-Based Systems and Software Engineering to ModDevOps

This post introduces ModDevOps, an extension of DevSecOps that embraces model-based systems engineering (MBSE) technology.

• By Jerome Hugues, Joe Yankel

In DevSecOps