7 Quick Steps to Using Containers Securely

The use of containers in software development and deployment continues to trend upwards. There is good reason for this climb in usage as containers offer many benefits, such as being lightweight, modular, portable, and scalable, all while enabling rapid and flexible deployments with application isolation. However, as use of this technology increases, so does the likelihood that adversaries will target it as a means to compromise systems. Such concerns are amplified in organizations where technical staff are implementing containers on-the-fly (i.e., deploying containers while simultaneously still learning about them). To help software developers deploy containers more securely, this blog post provides seven quick steps to engineer security into ongoing and future container adoption efforts.

Container Overview

Because this guidance will appeal to many who are still novice container users, we should begin with a brief overview of how containers work. For more detailed information on container architecture, read this article. In simple terms, we define application containers as

a packaged bundle that includes executable application software code, the software dependencies for the application, and the hardware requirements needed to run the application. These are all wrapped into a single, self-contained unit.

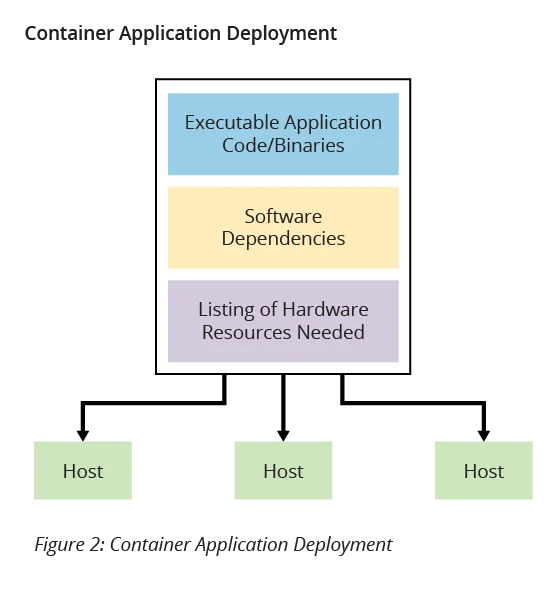

Traditional delivery of software applications involves providing only the first element (the executable software code) and leaves the remaining two elements to be dealt with during software installation and deployment. Containers, by contrast, include with application software all of the elements needed to deploy and run the application. Application containers thus address the problem of how to get software to run reliably when moved from one computing environment to another.

For example, in a typical software development environment, development begins on a developer's laptop, then moves to a test environment, then to a quality assurance (QA) environment, and finally to a production environment. Often, each of these environments varies in hardware, configurations, operating system versions, and other areas. These variations can then lead to differences in application behavior between environments, even when running identical copies of the same application code. Application containers abstract away the impact of these hardware differences by specifying the hardware resources (CPU, RAM, etc.) they need to run, without regard to how those requirements are satisfied, so long as each environment provides the same prescribed resources.

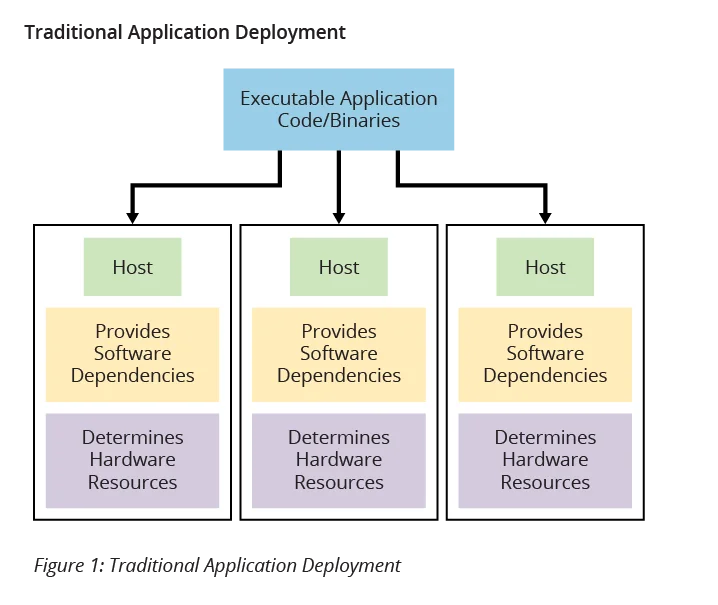

Moreover, application containers also eliminate common issues related to dependencies in software applications. Application code often relies on other libraries and components, known as dependencies, for full execution because these dependencies provide functionality incorporated into the application code. In a traditional application deployment, as shown in Figure 1 below, these software dependencies must be installed separately in each application environment, which often leads to version differences between the environments because they are maintained and updated separately. Conversely, when using application containers (Figure 2), all software dependencies are included in the containers and thus are identical in all places where the application is deployed.

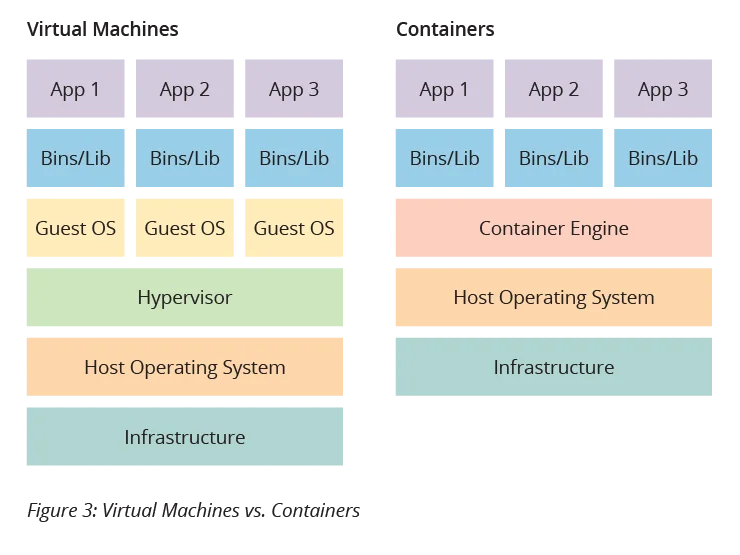

The concept of deployed applications being packaged with the resources they need to execute often invites comparisons to virtualization and virtual machines (VMs). Certainly, there are many similarities between the approaches. However, there are some key differences that distinguish the two technologies. The most fundamental difference is that when using VMs, the package being delivered for deployment includes an entire operating system in addition to the application software and dependencies. In containerization, only the application software and dependencies are in the deployment package, along with a specification of resource requirements. For example, four copies of an application deployed in a VM onto a single host would require four instances of the operating system to run on the host, one for each application instance. On the other hand, four copies of an application deployed in a container onto a single host would require only one instance of the operating system to run on the host, with host resources allocated to each container instance via the container engine. Figure 3 illustrates these concepts.

It should not be inferred that using containers is "better" than using VMs. The appropriateness of each is context-dependent and the technologies are even used together at times. It is, however, important to understand the strengths and limitations of each approach, including security concerns. The remainder of this post details seven quick steps for using containers securely.

Use Available Security Resources

Fortunately, a growing body of guidance and a growing number of tools for container security are available. The first step to using containers securely is to review and understand the resources on this subject that are freely available. An excellent starting point is to review the National Institute of Standards and Technology (NIST) Application Container Security Guide. This document explains security concerns associated with container technologies and makes recommendations for addressing those concerns. Some highlights from this guide include

- tailoring the organization's operational culture and technical processes to support the new way of developing, running, and supporting applications made possible by containers.

- using container-specific host operating systems instead of general-purpose ones to reduce attack surfaces.

- grouping only containers with the same purpose, sensitivity, and threat posture on a single host OS kernel to allow for additional defense in depth.

- adopting container-specific vulnerability management tools and processes for images to prevent compromises.

- considering the use of hardware-based countermeasures to provide a basis for trusted computing.

- using container-aware runtime defense tools.

Another great resource to review is Docker's security guide. Of particular interest is the CIS Docker Benchmark, referenced under the Policy section of the guide. There are even scripts available on GitHub that can be used to assess systems against this CIS Docker Benchmark. Moreover, the article Docker Reference Architecture: Securing Docker EE and Security Best Practices provides specific and actionable recommendations that developers can follow when building their containers. An established workflow paradigm is to follow the architecture guide and run the CIS Docker Benchmark script to verify that the objectives are being met. When this process is complete, it is common to run the containers through OpenSCAP next.

OpenSCAP provides information on security policies and standards, as well as tooling to help assess, measure, and enforce the security baselines that the policy documents prescribe. In addition to OpenSCAP, there are similar commercial tools specifically designed to help with the process of securing containerized infrastructure. We recommend that developers gain some proficiency with CIS Docker Benchmark and OpenSCAP first and then evaluate which commercial tools may strengthen the process. While CIS Docker Benchmark and the Docker security guide are obviously tailored to Docker, the concepts can be applied to other container technologies, and their use makes for a fine learning exercise. OpenSCAP can be used on many container technologies. While we do not endorse specific tools, other popular open source tools to consider for evaluation include Clair, Anchore, Grafeas, Cilium, and Notary.

Rebuild Regularly

Best practice dictates that regular maintenance for traditional software deployments must involve periodic modifications to install security updates. Many solutions exist to ensure that these updates take place, from simple scheduled jobs to configuration management tools. In all cases, these modifications are done in place, on persistent infrastructure that is rarely built from scratch.

Containers, by contrast, execute on top of images that are immutable once built. A small amount of ephemeral storage is used to provide scratch space for running applications, but persistent changes can be done only when the image is built. The need for timely security updates is no less real for containers, however, so images must be rebuilt from scratch on a regular basis. Specifically, rebuilds must happen at least as frequently as the update rate required by security policies.
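The rule that rebuilds must happen at least as often as the security policy requires can be checked mechanically. The following is a minimal sketch, not a definitive implementation: the seven-day `MAX_IMAGE_AGE` value and the `needs_rebuild` helper are hypothetical names chosen for illustration, and the build timestamp is assumed to come from whatever metadata the build pipeline records.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy value: images must be rebuilt at least every 7 days.
MAX_IMAGE_AGE = timedelta(days=7)


def needs_rebuild(built_at: datetime, now: datetime,
                  max_age: timedelta = MAX_IMAGE_AGE) -> bool:
    """Return True when the image is at least as old as the policy allows."""
    return now - built_at >= max_age


if __name__ == "__main__":
    # Assumed example timestamp; a real pipeline would read it from image metadata.
    built = datetime(2019, 3, 1, tzinfo=timezone.utc)
    print(needs_rebuild(built, datetime.now(timezone.utc)))
```

A scheduled job could run a check like this against every deployed image and trigger the rebuild pipeline for any image that exceeds the allowed age.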

To minimize the cost of this rapid, continuous maintenance, these periodic updates should be automated through the implementation of a DevSecOps continuous integration/continuous deployment (CI/CD) pipeline. As updates become available, this pipeline automatically tests and redeploys new images to operational environments. Containers used in production without an automated pipeline for updates will inevitably become insecure or an expensive drain on engineering time.

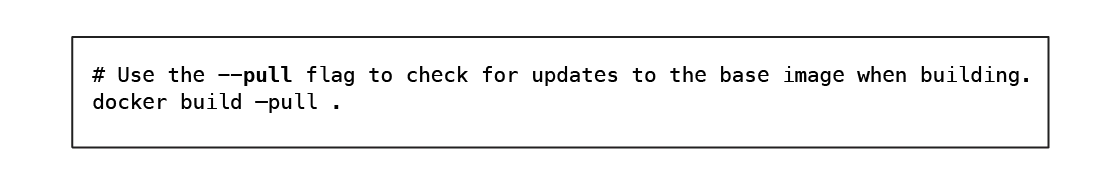

When rebuilding images, care should be taken to ensure that the container engine is attempting to update the base image. If new images are not fetched, the rebuild will have no effect, and the image will remain out of date. For example, the most popular container engine, Docker, will update local cached images only when explicitly forced to do so with a command line flag or explicit issue of a command to fetch the latest copy.
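As an illustration of forcing a fresh base image, the sketch below assembles a `docker build` command line that uses the `--pull` flag (always attempt to pull a newer version of the base image) and the `--no-cache` flag (do not reuse cached layers). The `rebuild_command` helper and the registry name are hypothetical. The function only builds the argument list, so it can be inspected or logged before a pipeline chooses to execute it.

```python
import shlex
from typing import List


def rebuild_command(tag: str, context_dir: str = ".") -> List[str]:
    """Build a `docker build` argv that refreshes the base image.

    --pull     asks Docker to check for a newer base image.
    --no-cache prevents reuse of previously built layers.
    """
    return ["docker", "build", "--pull", "--no-cache",
            "--tag", tag, context_dir]


if __name__ == "__main__":
    # Hypothetical image name; print the command rather than running it.
    print(shlex.join(rebuild_command("registry.example.com/app:latest")))
```

In a pipeline, the returned list could be passed to `subprocess.run(..., check=True)` so that a failed rebuild stops the deployment.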

Secure the Image Supply Chain

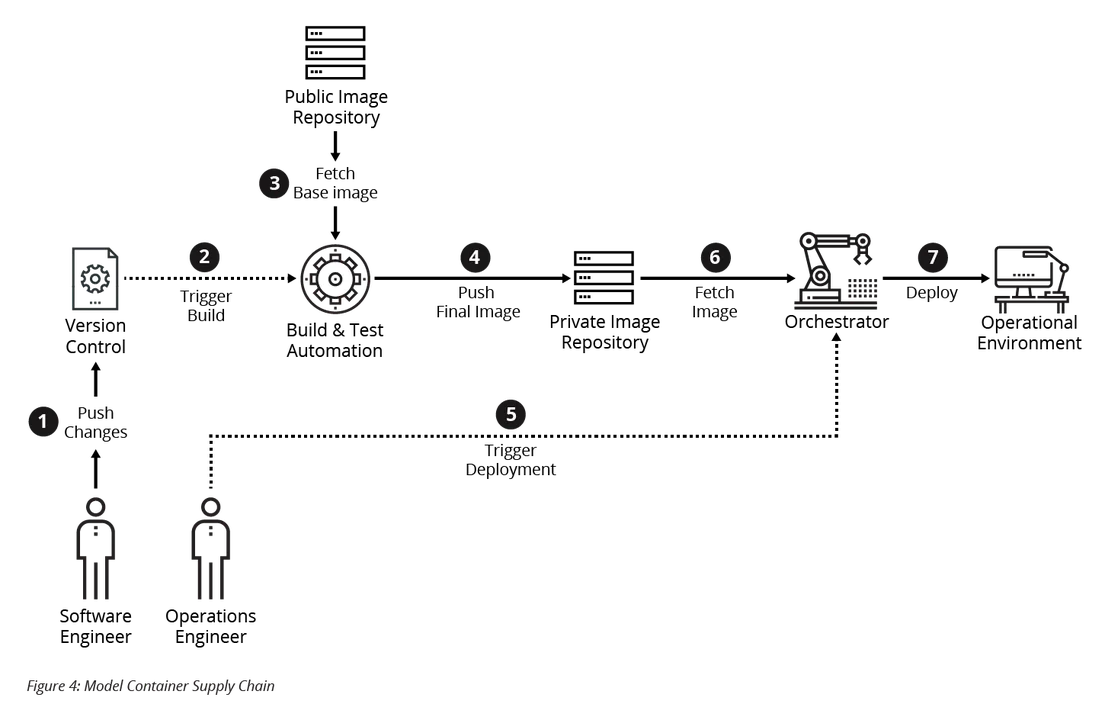

To build container images at the pace required to keep up with critical security updates, it is necessary to construct a secure supply chain that can operate as efficiently as possible. Take care, however, to ensure that security risks associated with this automation are managed properly. To help make some of these security concerns more concrete, let's consider a model of a DevOps pipeline as shown in Figure 4 below. This model pipeline is configured to automatically build, test, and publish images to a private image repository when one of the following two events takes place:

- Software engineers commit their changes to a version control system (e.g., Git).

- The public repository is updated with a new version of the base image.

Periodically, an operations engineer (who may also be part of the development team) instructs the container orchestrator (e.g., Kubernetes, Docker Swarm) to perform a deployment of the latest image to an operational environment. While this process could be entirely automated, for some applications and environments, communication with stakeholders must occur beforehand.

When using such a pipeline, there are several attack vectors to consider:

- Application and configuration vulnerabilities. A software engineer, either inadvertently or maliciously, pushes vulnerable code to version control. The build process automatically builds this code and sends it downstream. If it is not caught, the change will be rapidly deployed to the testing and production environments.

- Insecure version control. Distributed version control systems (e.g., Git) do not have a true centralized system of record. If version control systems are not properly configured, those with access can spoof changes under the identity of another principal, tamper with the history directly to inject changes surreptitiously, or repudiate the origin of changes already within version control.

- Tainted base image. The upstream source for base images is compromised and a malicious base image is provided. The build automation process will automatically fetch and build a new image based on this vulnerable image. Unless detected, the newly built image may then be deployed to operational environments. When incorporating images received from third parties into the supply chain, ensure they are from trusted sources only and their integrity has been verified.

- Insecure build automation environment. Malicious actors exploit access to the build automation environment to tamper with images before they are pushed to the internal repository. Depending on their level of access to the environment, controls implemented before or during the build automation process could be circumvented.

- Insecure private image repository. A malicious actor with access to the private repository modifies an existing image or pushes a tainted one. The malicious image is automatically deployed to the testing environment and eventually production.

- Insecure orchestrator. Container orchestrators (e.g., Kubernetes and Docker Swarm) are in complete control over operational environments and are attractive targets for attackers. For this reason, attackers must be prevented from gaining access to accounts with access to applications running on the orchestrator.
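One way to reduce exposure to a tainted base image is to check that every base image a Dockerfile pulls is referenced by a content digest (`image@sha256:...`) rather than by a mutable tag alone. The following is a minimal sketch under that assumption. The `unpinned_base_images` helper is hypothetical, it handles only simple `FROM` lines, and it is not a substitute for signature verification or image scanning. Because the digest must still be updated when a trusted new base image is released, a check like this complements the regular rebuilds described earlier.

```python
import re
from typing import List

# A pinned reference ends with a 64-hex-digit sha256 digest.
DIGEST_PIN = re.compile(r"@sha256:[0-9a-f]{64}$")


def unpinned_base_images(dockerfile_text: str) -> List[str]:
    """Return base images in FROM lines that are not pinned by digest."""
    stages = set()   # names of earlier build stages (FROM ... AS name)
    unpinned = []
    for line in dockerfile_text.splitlines():
        parts = line.strip().split()
        if len(parts) < 2 or parts[0].upper() != "FROM":
            continue
        # Ignore optional flags such as --platform=linux/amd64.
        refs = [p for p in parts[1:] if not p.startswith("--")]
        if not refs:
            continue
        image = refs[0]
        is_local = image.lower() == "scratch" or image.lower() in stages
        if not is_local and not DIGEST_PIN.search(image):
            unpinned.append(image)
        # Remember the stage alias so later "FROM alias" lines are skipped.
        if len(refs) >= 3 and refs[1].upper() == "AS":
            stages.add(refs[2].lower())
    return unpinned
```

A build pipeline could fail early when this function returns a non-empty list, forcing engineers to record exactly which upstream image was reviewed and trusted.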

Protect the Container Hosts

All the steps discussed so far would be moot with insecure container hosts. The principle of least privilege suggests that containers should be given exactly the privileges that they require to perform their tasks. By default, however, containers run processes as the superuser (i.e., root). Despite this apparent violation of the principle of least privilege, hosts running containers can still be secure, thanks to the Linux kernel feature known as capabilities. (See here and here for more information.) Modification of capabilities allows the root user within the container to be significantly less privileged than the root user running outside the container.

There are several important considerations for maintaining security of the container hosts. First, container hosts should not be used for any other purpose. This approach both reduces the number of users who need access to container hosts and helps minimize the attack surface of the host by minimizing the number of packages required by the host. Second, avoid adding capabilities to containers whenever possible, and never use flags like Docker's `--privileged` flag on container hosts. This flag should only be used in development environments for debugging issues with capabilities. Finally, carefully consider local mount points to ensure they do not contain sensitive files or directories from the host (e.g., /etc/passwd). Ideally, local mounts would be avoided completely in favor of other safer options offered by the orchestrator.
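To make the capability guidance concrete, the sketch below builds a `docker run` command line that drops all capabilities with `--cap-drop ALL` and adds back only those explicitly requested with `--cap-add`. It also includes a simple check that rejects any command using `--privileged`. The helper names and the example image are hypothetical, and the capability that a given application actually needs is an assumption to be verified case by case.

```python
from typing import Iterable, List


def least_privilege_run_command(image: str,
                                capabilities: Iterable[str] = ()) -> List[str]:
    """Build a `docker run` argv that starts from zero capabilities."""
    cmd = ["docker", "run", "--cap-drop", "ALL"]
    for cap in capabilities:
        # Add back only what the application is known to require.
        cmd += ["--cap-add", cap]
    cmd.append(image)
    return cmd


def uses_privileged_flag(argv: Iterable[str]) -> bool:
    """Return True if the command line requests a privileged container."""
    return any(arg in ("--privileged", "--privileged=true") for arg in argv)


if __name__ == "__main__":
    # Hypothetical web server that only needs to bind to a low port.
    print(least_privilege_run_command("registry.example.com/web:1.0",
                                      ["NET_BIND_SERVICE"]))
```

A deployment script could call `uses_privileged_flag` on every command it is about to run on a production host and refuse to continue when it returns `True`.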

Distribute Secrets Securely

Credentials and private keys should never be built directly into containers due to the immutability of image layers. Even if these files were deleted in a later layer, they would still be retrievable from the earlier image layers. However, storing these credentials on volumes makes secure management of credentials and keys hard. For this reason, use the secret storage mechanisms provided by the container orchestrator to insert these secrets into containers at runtime.
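From the application's point of view, orchestrator-managed secrets typically appear as files that exist only at runtime. Docker Swarm, for example, mounts each secret at `/run/secrets/<secret_name>` by default. The sketch below reads a secret from such a directory. The `read_secret` helper and the `db_password` name are hypothetical, and the mount path is an assumption that differs between orchestrators and configurations.

```python
from pathlib import Path
from typing import Union

# Default location used by Docker Swarm; other orchestrators may differ.
DEFAULT_SECRETS_DIR = Path("/run/secrets")


def read_secret(name: str,
                secrets_dir: Union[str, Path] = DEFAULT_SECRETS_DIR) -> str:
    """Read a secret that the orchestrator mounted as a file at runtime.

    Raises FileNotFoundError when the secret was not provided, so a
    misconfigured deployment fails loudly instead of using a default.
    """
    path = Path(secrets_dir) / name
    return path.read_text(encoding="utf-8").strip()


if __name__ == "__main__":
    try:
        password = read_secret("db_password")  # hypothetical secret name
        print("secret loaded, length:", len(password))
    except FileNotFoundError:
        print("secret not mounted in this environment")
```

Because the secret never appears in the Dockerfile or in an image layer, rebuilding or publishing the image does not expose it.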

Configure Resource Limits

Attackers who are unable to achieve privilege escalation may opt to sow chaos by performing denial-of-service attacks. To prevent successful attacks on vulnerable containers from impacting other containers running on the same host, flags provided by the container engine should be used to limit the CPU, memory, and disk I/O. Failure to set these limits to reasonable values could allow an attacker to bring down the entire container host with a vulnerability in a single container.
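For Docker, limits of this kind can be expressed with the `--cpus`, `--memory`, `--device-read-bps`, and `--device-write-bps` flags of `docker run`. The sketch below assembles such a command line. The `limited_run_command` helper, the default values, and the `/dev/sda` device are hypothetical and would need tuning for each workload and host.

```python
from typing import List


def limited_run_command(image: str,
                        cpus: str = "0.5",
                        memory: str = "256m",
                        device: str = "/dev/sda",
                        io_bps: str = "10mb") -> List[str]:
    """Build a `docker run` argv with CPU, memory, and disk I/O limits."""
    return [
        "docker", "run",
        "--cpus", cpus,                              # fraction of CPU cores
        "--memory", memory,                          # hard memory limit
        "--device-read-bps", f"{device}:{io_bps}",   # read throughput cap
        "--device-write-bps", f"{device}:{io_bps}",  # write throughput cap
        image,
    ]


if __name__ == "__main__":
    # Hypothetical image name; print the command rather than running it.
    print(" ".join(limited_run_command("registry.example.com/app:latest")))
```

Orchestrators generally expose equivalent settings in their own configuration formats, so the same limits can be declared there instead of on the command line.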

Persistent Logging

Effective logging is an essential component of maintaining security in production environments, and high-quality, trustworthy logs are critical during incident response. Unfortunately, the ephemeral nature of containers complicates the process of collecting logs. To work around this, containers should send their logs to a centralized logging server, or they should be copied from the container periodically. Moreover, the container host should be configured to track the starting, stopping, crashing, and other activity involving containers on the host. Finally, the build automation should preserve logs of all input image hashes and the final output hashes for built images.
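The recommendation to preserve input and output image hashes can be sketched as a small function that the build automation calls once per build. The record format, the field names, and the `build_audit_record` helper below are hypothetical. The digests are assumed to be supplied by the build tooling, and the resulting line is assumed to be shipped to a centralized, append-only log.

```python
import json
from datetime import datetime, timezone
from typing import Iterable, Optional


def build_audit_record(input_digests: Iterable[str],
                       output_digest: str,
                       built_at: Optional[datetime] = None) -> str:
    """Return one JSON line recording which inputs produced which image."""
    built_at = built_at or datetime.now(timezone.utc)
    record = {
        "built_at": built_at.isoformat(),
        "inputs": sorted(input_digests),   # digests of base images used
        "output": output_digest,           # digest of the image produced
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    # Placeholder digests for illustration only.
    print(build_audit_record(["sha256:" + "a" * 64], "sha256:" + "b" * 64))
```

During incident response, records like these make it possible to trace a deployed image back to the exact base images it was built from.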

Looking Ahead

Following the seven steps outlined here provides a strong starting point for ensuring that security is given full consideration during containerization efforts. Container security is neither a single measure nor a binary state. In other words, containers are not simply secure or insecure; security is a sliding scale.

If organizations take more steps to address security-related activities now, they will be less likely to encounter security incidents in the future. When it comes to application containers, security is achieved through following and adopting a series of best practices and guidelines. Some basic principles are outlined here to get started on the right foot, but approaches to container security should continue to evolve as the technology matures.

References and Additional Resources

Read the SEI Blog post Virtualization via Virtual Machines.

[1] Gartner: 3 Critical Mistakes That I&O Leaders Must Avoid With Containers. (Available by subscription only)

[3] NIST Special Publication 800-190, Application Container Security Guide, September 2017.

[4] Docker, Inc., "Docker Security" [Online]. Available: https://docs.docker.com/engine/security/security/ [Accessed 4 March 2019]

[5] Docker, Inc., "Docker Reference Architecture: Securing Docker Enterprise and Security Best Practices" [Online]. Available: https://success.docker.com/article/security-best-practices

[7] Kerrisk, M., "Linux Programmer's Manual - CAPABILITIES(7)," 2002 [Online]. Available: http://man7.org/linux/man-pages/man7/capabilities.7.html [Accessed 4 March 2019].

[8] Red Hat, Inc., "Image Fetching Behavior" [Online]. Available: https://coreos.com/rkt/docs/latest/image-fetching-behavior.html [Accessed 4 March 2019].
