Model-Based Analysis of Agile Development Practices

Applications of Agile development practices in government are providing experience that decision makers can use to improve policy, procedure, and practice. Behavioral modeling and simulation (BModSim) techniques (such as agent-based modeling, computational game theory, and System Dynamics) provide a way to construct valid, coherent, and executable characterizations of Agile software development. These techniques can help answer key questions about Agile concepts and Agile application. BModSim complements data-analytic approaches, such as machine learning, by describing the larger landscape of organizations' application of Agile, putting in context diverse results from data analysis, and identifying the most profitable areas for future data collection. This blog post describes a System Dynamics simulation model that allows decision makers to compare software development practices while adjusting key model parameters, such as the size of the system requirements baseline, the number of increments in development, and staffing levels.

Why Does BModSim Matter for Agile?

Government programs adopting Agile practices and tailoring them for their applications face several challenges. As variants of Agile development approaches have proliferated, programs need guidance and evidence-based criteria to decide which variant to choose. Government systems are typically larger than the systems for which Agile practices were historically applied. Programs are therefore challenged to refactor government application systems for incremental and iterative development, as well as to scale the assignment of personnel beyond the small teams typical in Agile projects. Moreover, experiments to try out new ideas are hard to conduct in government settings.

Analysis conducted using BModSim can help to mitigate these challenges for government programs. BModSim can help to expose the operation of underlying mechanisms that make Agile useful in government programs. Before experimentation, or in its absence, BModSim can help decision makers understand how and when different Agile approaches create value by analyzing the potential cost, schedule, and quality implications of different approaches. Finally, BModSim can help to test the efficacy of software-development policy and process through simulation, leading to refinements that increase the likelihood of success before implementation.

The model described in this blog post can be used to provide a rigorous foundation to explore, refine, and validate government applications of Agile. With further refinement, the model has the potential to serve as a useful decision aid for policy makers and system developers. Incremental and iterative development, a core aspect of Agile development, allows users to experiment with early working versions of the software in a way that promotes constructive feedback to the developers in terms of new or refined requirements. This blog post also shows how the model validates the assertion that incremental development accommodates requirements growth with less disruption to schedule than traditional development and acquisition practices. In particular, incremental development using Agile can reduce the impact of late requirements additions by introducing them into the normal iteration-planning process as they arise.

The System Dynamics Simulation Model

The SEI has been active for many years in efforts to use data within the DoD to inform better software development and better acquisition. We use causal modeling as a basis for developing simulation models that could be decision aids for acquisition managers to enable them to apply the insights that data reveal. Causal modeling looks beyond correlations to establish causal relationships between variables.

System Dynamics models are causal in structure. We use a System Dynamics model to characterize software-intensive-system acquisition processes. Our model includes management processes of government project management offices (PMOs), as well as contractors' software-development processes. The simulation allows us to evaluate these processes with the goal of improving performance.

When late requirements are rejected or delayed, the decision is often driven by the need to stay on schedule. But there are pressures to implement late requirements up front, especially in joint programs where there is high motivation to honor the requirements of a diverse set of stakeholders. Denying late requirements can cause problems with community buy-in.

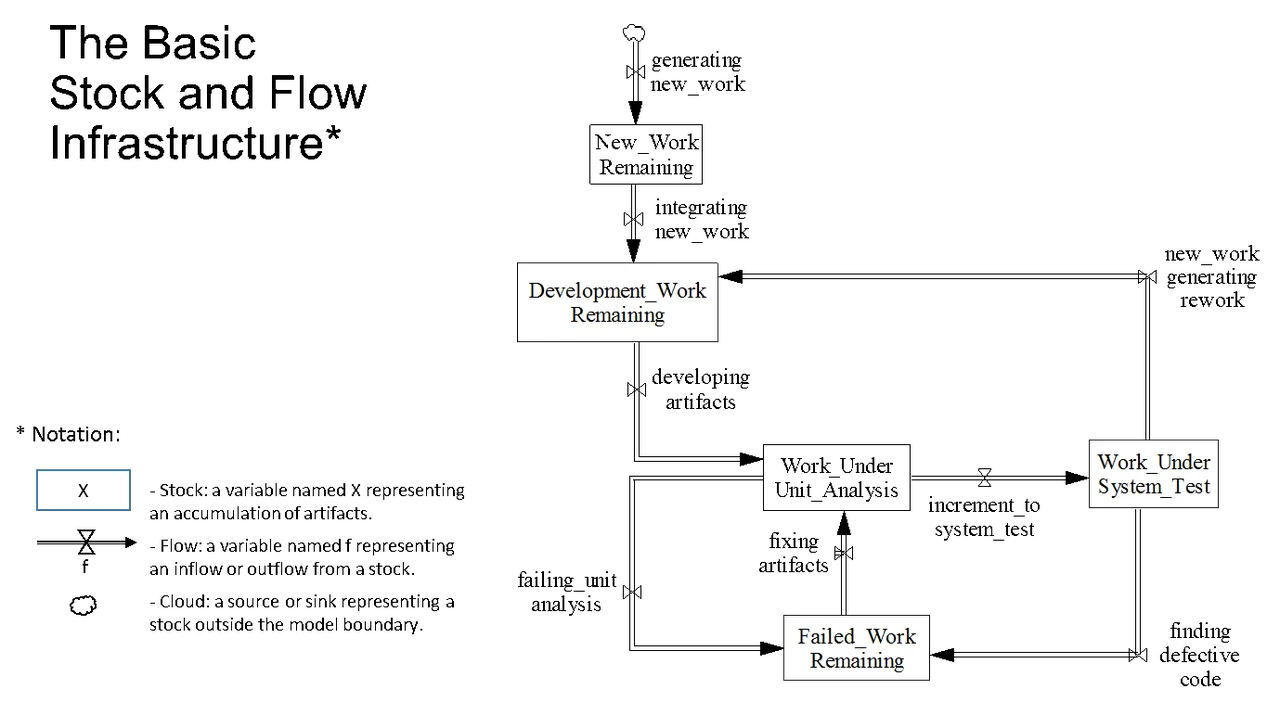

Figure 1: Stock and Flow Infrastructure

Figure 1 shows the basic stock-and-flow structure of the model associated with the development process. Starting at the Development_Work_Remaining stock, new work associated with late requirements may add to the remaining work. As artifacts are developed, they undergo unit analysis, such as unit test or static code analysis. Artifacts that fail unit analysis go to the Failed_Work_Remaining stock and are eventually fixed. Ultimately, artifacts are passed to system test. From there, they may be sent back into the development process when fixes are needed, or reworked when new work requires changes to previously developed artifacts.
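As a rough illustration of what a stock-and-flow structure looks like when executed, the following minimal Python sketch integrates three stocks with simple Euler steps. It is not the model described in this post: only the stock names Development_Work_Remaining and Failed_Work_Remaining come from Figure 1, while the third stock, the rate parameters, and all numeric values are hypothetical, and the system-test feedback paths are omitted for brevity.

```python
from dataclasses import dataclass


@dataclass
class Stocks:
    development_work_remaining: float   # artifacts not yet developed
    failed_work_remaining: float = 0.0  # artifacts that failed unit analysis
    passed_to_system_test: float = 0.0  # hypothetical stock: artifacts that passed


def step(s, dt, dev_rate, fix_rate, fail_fraction, late_work_rate):
    """Advance all stocks by one Euler step of length dt (months)."""
    # An outflow can never drain more than its stock currently holds.
    developed = min(dev_rate * dt, s.development_work_remaining)
    fixed = min(fix_rate * dt, s.failed_work_remaining)
    failed = developed * fail_fraction
    return Stocks(
        # Late requirements add new work to the remaining-work stock.
        development_work_remaining=s.development_work_remaining
        - developed + late_work_rate * dt,
        failed_work_remaining=s.failed_work_remaining - fixed + failed,
        passed_to_system_test=s.passed_to_system_test
        + (developed - failed) + fixed,
    )


def simulate(initial_work, months, dt=0.25, **rates):
    """Run the sketch for the given number of months and return the stocks."""
    s = Stocks(development_work_remaining=initial_work)
    for _ in range(round(months / dt)):
        s = step(s, dt, **rates)
    return s


# Illustrative (assumed) rates, in artifacts per month.
final = simulate(1100, months=12, dev_rate=20, fix_rate=5,
                 fail_fraction=0.2, late_work_rate=2)
print(final)
```

Because every flow leaves one stock and enters another, the total across the three stocks changes only through the late-requirements inflow, which is a useful sanity check on any stock-and-flow implementation.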

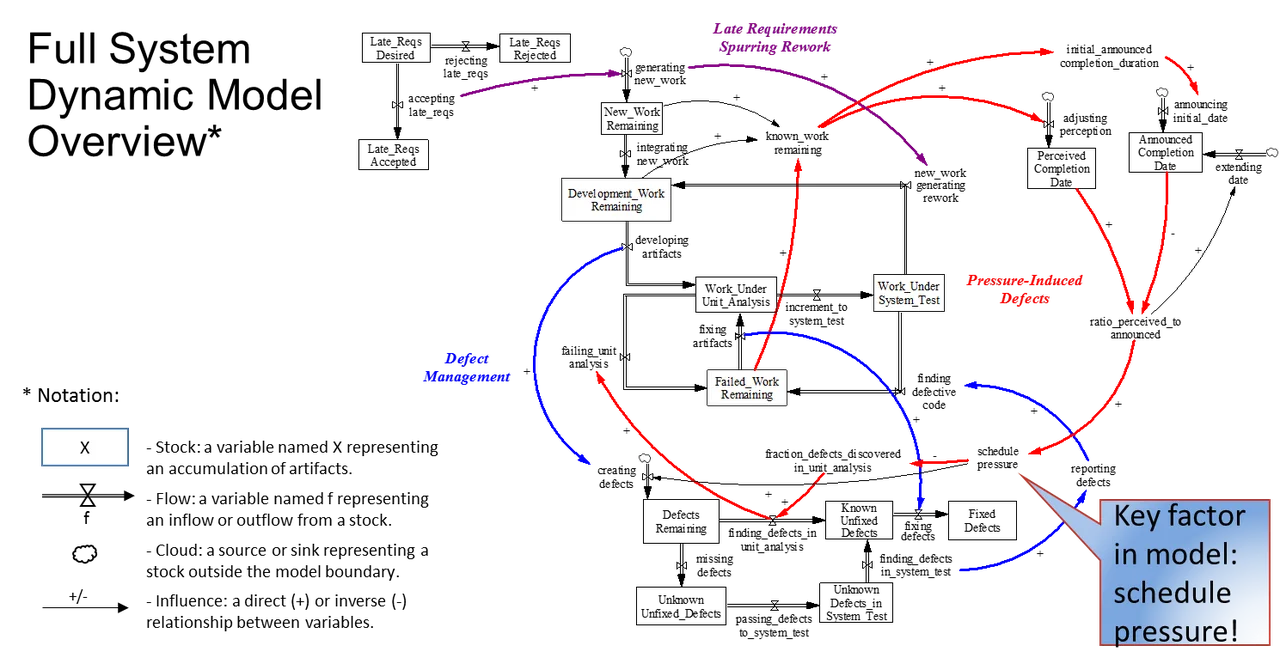

Figure 2: Full System Dynamics Model Overview

A more fully instantiated model is shown in Figure 2. The development process stocks and flows are in the middle, with additional stocks and flows for late requirements processing in the upper left and for tracking defects in the bottom middle of the figure. Late requirements that are accepted generate new work and may eventually spur rework, as shown in purple. The key dynamic, shown in red, arises when the perceived completion date diverges from the announced date, creating schedule pressure. Schedule pressure induces quality-assurance shortcuts that can create more defects while finding fewer of them, because unit analysis becomes less rigorous. These shortcuts speed up development in the near term, but create even more schedule pressure as those defects are eventually detected, possibly much further down the lifecycle when they are harder to fix. This dynamic is a self-reinforcing feedback loop called Pressure-Induced Defects in the figure.
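The reinforcing loop described above can be sketched in a few lines of Python. This is a toy under stated assumptions, not the SEI model: the function name, every parameter value, the linear response to pressure, and the cap on pressure are all hypothetical choices made only to show the loop's shape (pressure speeds up work, injects more defects, and the later rework pushes the perceived completion date out again).

```python
def run_project(announced_months, shortcut_gain, work=1100.0, capacity=50.0,
                base_defects_per_unit=0.1, rework_per_defect=2.0,
                detect_fraction=0.25, dt=1.0, tolerance=0.5,
                max_months=600.0):
    """Return (months_elapsed, total_defects_injected) for one toy run."""
    remaining, latent, total_defects, t = work, 0.0, 0.0, 0.0
    while (remaining > tolerance or latent > tolerance) and t < max_months:
        # Schedule pressure: perceived completion date vs. announced date.
        perceived_finish = t + remaining / capacity
        pressure = max(0.0, perceived_finish / announced_months - 1.0)
        pressure = min(pressure, 1.0)  # assumed saturation of the effect
        # Shortcuts under pressure: faster development, more defects.
        shortcut_factor = 1.0 + shortcut_gain * pressure
        done = min(capacity * shortcut_factor * dt, remaining)
        injected = done * base_defects_per_unit * shortcut_factor
        # Latent defects surface later and come back as rework.
        found = latent * detect_fraction * dt
        latent += injected - found
        remaining += found * rework_per_defect - done
        total_defects += injected
        t += dt
    return t, total_defects


# Same unrealistic 18-month announced date, without and with shortcuts.
print(run_project(announced_months=18, shortcut_gain=0.0))
print(run_project(announced_months=18, shortcut_gain=1.0))
```

Setting `shortcut_gain` to zero switches the loop off, which makes it easy to compare the same project with and without pressure-induced shortcuts. When the announced date is realistic, pressure never rises above zero and the two runs are identical.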

Executing the Model

The execution of the System Dynamics model explores the benefits of one aspect of Agile (i.e., incremental development) over monolithic system development and finds ways of magnifying these benefits by fine-tuning the application of Agile practices. The parameters for this exploration are:

- size of baseline

- late requirements level

- number of increments

- test rigor

- realism of schedule setting

- staffing levels

The basic run of the model assumes 1,100 requirements developed by 15.5 full-time equivalent developers, resulting in 110K equivalent source lines of code (ESLOC). Defects are introduced as a result of schedule pressure and its associated quality-assurance process shortcuts. Monolithic development is granted up to 2 schedule extensions every 24 months as needed, though these are also parameters that can be changed in the simulation. Incremental development delays late-requirements introduction to the next increment and develops a new schedule from there. Defect repair time is drawn from a lognormal distribution with an average of 2.2 days for one person to repair a defect.
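To make the repair-time assumption concrete, here is a small sketch of drawing repair times from a lognormal distribution whose mean is 2.2 days. The post gives only the mean, so the shape parameter `SIGMA` below is an assumption chosen for illustration. The sketch uses the standard identity that a lognormal with parameters mu and sigma has mean exp(mu + sigma^2 / 2), and solves it for mu.

```python
import math
import random

MEAN_REPAIR_DAYS = 2.2  # average from the text
SIGMA = 0.8             # assumed shape parameter; not given in the post


def lognormal_mu(mean, sigma):
    """Return mu such that a lognormal(mu, sigma) has the requested mean."""
    return math.log(mean) - sigma ** 2 / 2


def sample_repair_days(rng, n, mean=MEAN_REPAIR_DAYS, sigma=SIGMA):
    """Draw n person-days-to-repair values from the lognormal."""
    mu = lognormal_mu(mean, sigma)
    return [rng.lognormvariate(mu, sigma) for _ in range(n)]


rng = random.Random(0)  # fixed seed so the run is repeatable
samples = sample_repair_days(rng, 200_000)
print(sum(samples) / len(samples))  # close to 2.2 for a large sample
```

A lognormal is right-skewed, so most defects take less than the 2.2-day average to repair while a small number take much longer.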

The above values used to calibrate the model were derived from two data sources:

- SEI Department of Defense Software Factbook, published in July 2017

- industry metrics established in research at the SEI on Team Software Process (TSP)

Using multiple sources of data is often necessary to get a complete picture of what is going on in practice, but the differing assumptions and targets of those sources make it challenging to unify that picture. Our calibrated model merged these two sources to represent a development profile somewhere between the average DoD large-project developer from the DoD Software Factbook and the higher-than-average performer using TSP.

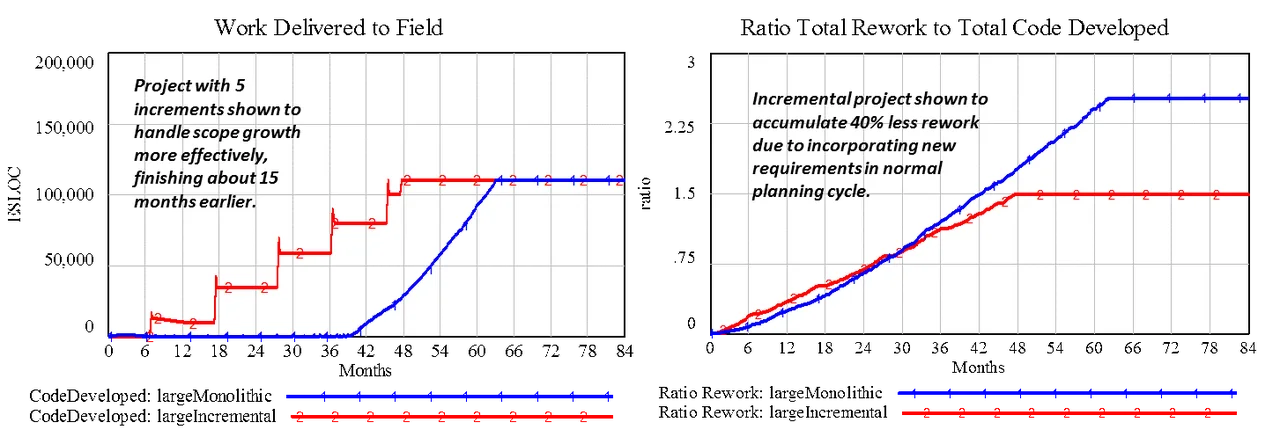

Figure 3 shows the result of the simulation comparing the effect of introducing additional late requirements continuously through the monolithic large-development project vs. introduction of those requirements in the next increment-planning cycle for each of five increments. The simulation covers 84 months (7 years), with the monolithic development shown in blue (simulation run 1) and the incremental development shown in red (simulation run 2).

Figure 3: Comparison of Large Monolithic and Incremental Development

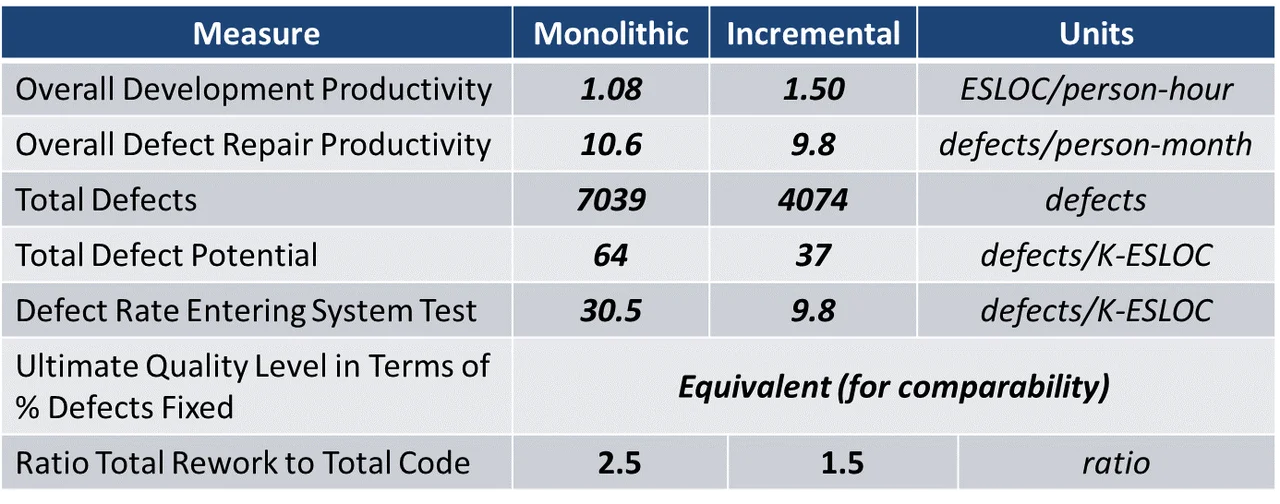

Introducing additional requirements late into the system-acquisition process can cause schedule growth beyond that due to the requirements themselves. Preliminary observations from the model support the conclusion that incremental development accommodates requirements growth with less disruption to schedule compared to monolithic system development. The incremental development finished 15 months earlier than monolithic development. The primary reason for this improvement is reduced schedule pressure (and fewer process shortcuts): introducing late requirements into the next iteration-planning cycle reduces the accumulation of rework by 40%. Table 1 below elaborates the defect-generation and repair profiles for both monolithic and incremental development in the model.

Table 1: Defect Profile of the Large Monolithic and Incremental Development Simulation

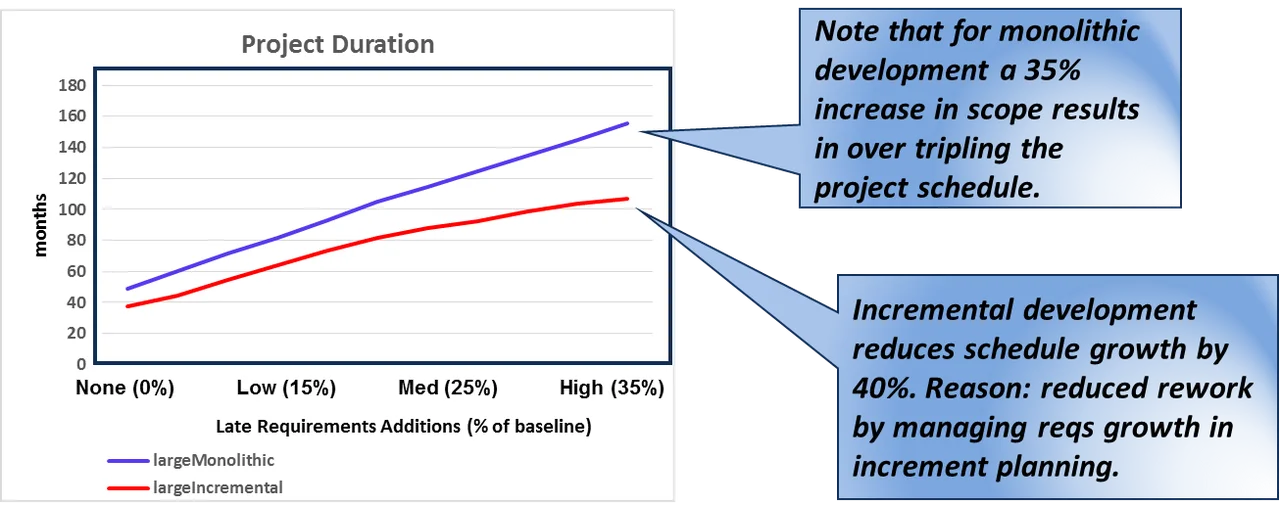

The above analysis raises the question of how well the two development approaches would deal with different levels of late requirements introduction. The advantage of BModSim is that we can use tools to explore this question. Figure 4 shows that for monolithic system development, the schedule triples when late requirements reach 35% of the initial baseline scope. Incremental development reduces this schedule growth by 40%.
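The arithmetic behind this comparison can be worked through in a few lines. The sketch below assumes one reading of the text: that the 40% reduction applies to the schedule growth (the amount beyond the original schedule), not to the total schedule. The function name is hypothetical, and the result is expressed as a multiple of the original schedule because the post does not state the baseline duration.

```python
def incremental_schedule_multiple(monolithic_multiple, growth_reduction):
    """Schedule as a multiple of the original plan, under the stated reading."""
    # Growth is whatever exceeds the original schedule (a multiple of 1.0).
    growth = monolithic_multiple - 1.0
    # The reduction is applied to the growth only, not to the whole schedule.
    return 1.0 + growth * (1.0 - growth_reduction)


# Monolithic schedule triples (3.0x); incremental cuts the growth by 40%.
print(incremental_schedule_multiple(3.0, 0.40))  # about 2.2x the original plan
```

Under this reading, a schedule that triples under monolithic development would instead grow to roughly 2.2 times the original plan under incremental development at the same 35% level of late requirements.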

Figure 4: Incremental Development Tolerates Scope Growth Better

Looking Ahead

Our application of System Dynamics to Agile software development is a work in progress, but it showcases the potential value of BModSim approaches to answer key questions about Agile applications, especially in contexts that expand on its traditional applications. Potential future work includes further calibration of the existing model based on internal measures of defect rates and fix productivity, exploration of the limits of Agile in addition to other possible benefits, and extension of the model and simulation to support decision making for Agile project managers and policy makers.

More work is needed in model refinement, validation, and calibration to shape this tool into a true decision aid. We welcome inquiries from volunteers who are interested in serving as data suppliers and/or subject-matter experts in our ongoing work. If interested, please contact us at info@sei.cmu.edu.

Additional Resources

Read the SEI blog post How to Identify Key Causal Factors That Influence Software Costs: A Case Study by Bill Nichols.

Read the SEI blog post Why Does Software Cost So Much? by Robert Stoddard.
