Evolutionary Improvements of Quality Attributes: Performance in Practice

PUBLISHED IN

Agile

Continuous delivery practices, popularized in Jez Humble and David Farley's 2010 book Continuous Delivery, enable rapid and reliable software system deployment by emphasizing automated testing and building, as well as closer cooperation between developers and delivery teams. As part of the Carnegie Mellon University Software Engineering Institute's (SEI) focus on Agile software development, we have been researching ways to incorporate quality attributes into the short iterations common to Agile development.

We know from existing SEI work on Attribute-Driven Design, Quality Attribute Workshops, and the Architecture Tradeoff Analysis Method that a focus on quality attributes prevents costly rework. Such a long-term perspective, however, can be hard to maintain in a high-tempo, Agile delivery model, which is why the SEI continues to recommend an architecture-centric engineering approach, regardless of the software methodology chosen. As part of our work in value-driven incremental delivery, we conducted exploratory interviews with teams in these high-tempo environments to characterize how they managed architectural quality attribute requirements (QARs). These requirements, such as performance, security, and availability, have a profound impact on system architecture and design, yet are often hard to divide, or slice, into the iteration-sized user stories common to iterative and incremental development. This difficulty typically exists because some attributes, such as performance, touch multiple parts of the system. This blog post summarizes the results of our research on slicing (refining) performance in two production software systems. We also examined the ratcheting (periodic increase of a specific response measure) of scenario components to allocate QAR work.

Slicing and Allocation in Agile Projects

QARs are often provided in unstructured and unclear ways. QAR refinement is the process of elaborating and decomposing a QAR into a specifiable, unambiguous form that is achievable in one release, and of finding suitable design fragments. This process, also known as slicing or sizing, is typically applied to user stories (short, textual requirements popularized by Agile methodologies). The requirements community calls such iterative refinement the Twin Peaks model because you iteratively cross between the 'peaks' of requirements (the problem domain) and architecture (the solution domain).

QAR refinement should produce a unit of work small enough to test, small enough to fit in an iteration, and useful enough to produce value. It should separate abstract requirements into constituent parts so they and their interrelationship can be studied. "[QAR refinement] is a design activity," a software developer recently posted on Twitter. "It surfaces our ignorance of the problem domain."

There are a number of ways to size requirements. Some methods are based purely in the problem space, some are based on the work involved in satisfying the requirement, and some are a mixture of analyzing the problem and possible solutions. These approaches include

- And/Or decomposition: Split on the conjunctions "and" and "or" in the requirement. For example, for the requirement "display user name and account balance," one piece would deliver the user name, the other piece the account balance.

- Acceptance or test criteria: Satisfy one criterion per slice. User stories (an Agile form of requirement with user-focused textual descriptions) often have a list of reasons for accepting the requirement (story) as done. For instance, "login function works, password validated." Each criterion is a slice.

- Spike: Add exploratory spikes to the backlog where the steps are unknown, to investigate rather than implement. Spikes are specific work dedicated to learning, such as the impact of a new version of a key framework.

- Horizontal slices: Slice according to architectural layers (such as database, UI, business logic). Commonly seen as an anti-pattern since it creates false divisions of the work.

- Operation type: Slice according to database operations. One story for create operations, one for read, etc.

- Hamburger slicing: Create horizontal slices that map steps in a use case, then extend vertically according to improved quality criteria. For example, a user login use case has steps for validating passwords, displaying user history, etc. These are then expanded 'vertically' for different options, such as login options OAuth, social media account, or simple user:password.
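As a small illustration of the first approach in the list above, And/Or decomposition can be sketched in a few lines of Python. This is only a sketch under stated assumptions: the function name `split_and_or` is hypothetical, and a purely textual split is a starting point for discussion, not a substitute for judgment about whether each piece delivers value.

```python
import re

def split_and_or(requirement: str) -> list[str]:
    """Split a requirement into candidate slices on the words 'and' / 'or'."""
    # Word boundaries keep words such as "order" or "brand" intact.
    parts = re.split(r"\s+\b(?:and|or)\b\s+", requirement.strip(),
                     flags=re.IGNORECASE)
    return [part.strip() for part in parts if part.strip()]

# The example requirement from the list above.
slices = split_and_or("display user name and account balance")
print(slices)
```

Note that the second slice loses its verb ("display"), so someone still has to rewrite each fragment as a complete, testable requirement.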

In the two examples described below, we found the most common slicing approach was ratcheting, popularized by Martin Fowler in his blog post "Threshold Testing."

Our Approach

Our research question was

How do projects slice quality attribute requirements into iteration-sized pieces, and how are these pieces allocated to a project plan or sprint backlog?

QARs, including performance, security, and modifiability, significantly influence architecture. We conducted case studies of production systems (we omit identifying details to protect confidentiality). For each case, we conducted interviews with team leads and architects to understand the teams' deployability goals and architectural decisions they made to enable deployability. We then performed semi-structured coding to elicit common aspects across the cases.

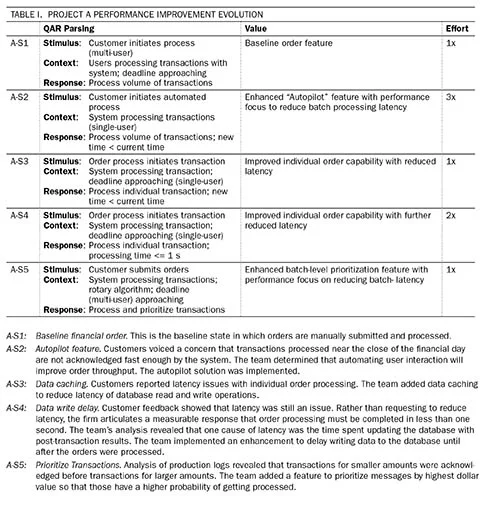

Based on our analysis, we described the quality attribute work as a series of increments, representing the current state of the QAR, as the requirement is progressively refined and enhanced. Table 1 shows our conceptual framework. We used a scenario to define each state, with each scenario consisting of a stimulus, some context or background, and a response to that stimulus. Our approach is analogous to an Agile, "Behavior-Driven Development" (BDD)-style "Given <>, When <>, Then <>" model. The value column reports the outcome of that increment. Based on our interviews, refinement happens not only in the response, but also in the context and the stimulus itself. In other words, one can tighten the response (from 10 to 1 ms, for example); one can loosen the context (for all customers instead of just certain customers); or one can expand the range of stimuli (any order versus special order types). We believe refinement of QARs using scenarios creates a reasonably sized chunk of work for analysis, implementation, and possibly even testing during an iteration.
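The three refinement moves can be sketched in code. The following is a minimal Python illustration, not the tooling used by the teams we interviewed: the `Scenario` class, its field names, and the helper functions are all hypothetical, and the values are the illustrative ones from the paragraph above (10 ms to 1 ms, certain customers to all customers, special orders to any order).

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Scenario:
    """One state of a QAR, expressed as stimulus, context, and response."""
    stimulus: str          # "When": the event that arrives
    context: str           # "Given": the conditions under which it arrives
    response: str          # "Then": what the system does
    max_latency_ms: float  # response measure

    def as_given_when_then(self) -> str:
        return (f"Given {self.context}, when {self.stimulus}, "
                f"then {self.response} within {self.max_latency_ms:g} ms")

def tighten_response(s: Scenario, max_latency_ms: float) -> Scenario:
    """Ratchet the response measure; reject anything that is not stricter."""
    if max_latency_ms >= s.max_latency_ms:
        raise ValueError("a tightened response measure must be stricter")
    return replace(s, max_latency_ms=max_latency_ms)

def loosen_context(s: Scenario, context: str) -> Scenario:
    """Widen the conditions under which the response must hold."""
    return replace(s, context=context)

def expand_stimulus(s: Scenario, stimulus: str) -> Scenario:
    """Widen the range of stimuli the scenario covers."""
    return replace(s, stimulus=stimulus)

# Each refinement yields a new state; earlier states stay available.
s0 = Scenario("a special order arrives", "certain customers",
              "the order is processed", max_latency_ms=10)
s1 = tighten_response(s0, 1)
s2 = loosen_context(s1, "all customers")
s3 = expand_stimulus(s2, "any order arrives")
print(s3.as_given_when_then())
```

Making each state an immutable value keeps the history of increments explicit, which mirrors how we describe the projects below as a sequence of state transitions.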

Project A

Project A developed financial support software for a mid-size firm. The software supported the buying and selling of financial securities. Performance was a key concern, particularly at the close of the financial day: customers might have to pay interest on large sums borrowed to hold sell orders overnight if those orders were not processed by the close of trading. Development cycles were deliver-on-demand, rather than fixed iterations, and customers provided feedback to the developers that informed the work in the subsequent state. Table 1 shows a summary of the state transitions. Below, we describe states that we identified in the first project:

These states were refined in three different dimensions (stimulus, context, response).

- Stimulus: A-S4 to A-S5 illustrates refinement in the stimulus dimension, moving from a single- to multi-user perspective. A second example is A-S2 to A-S3, which illustrates moving from batch processing to individual transaction processing.

- Context: The initial requirement focused development on the system behavior (simple baseline case) to test ideas before dealing with complexities and uncertainties of the environment. A-S1 to A-S2 illustrates refinement in the context dimension, moving from manual processing to the autopilot solution, by adjusting the boundary of the system with respect to its environment, including the user's role. Moving from A-S4 to A-S5 accounts for the complexities of the rotary algorithm in the environment.

- Response: A-S3 to A-S4 illustrates refinement in the response dimension by ratcheting the response measure. The response measure value was refined to less than 1 second for processing individual transactions.

This analysis demonstrates that each refinement we captured reflects a change in the evolving requirements context, such as an increase in the number of orders to be processed or a reduction in the performance threshold for a single order. The team also must make tradeoffs in the measurement criteria, for example, first optimizing for throughput (A-S2) before dealing with latency (A-S3, A-S5). With three dimensions to adjust (stimulus, context, response), ratcheting in one dimension may require easing up on another to make progress.
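A ratcheted response measure lends itself to an automated threshold test. The sketch below is one possible shape for such a test, not Project A's actual test suite: `process_transaction` is a hypothetical stand-in for the real code, the 5-second starting budget is invented for illustration, and only the 1-second figure comes from the A-S4 response measure described above.

```python
import time

def measure_seconds(operation, *args):
    """Return the wall-clock seconds taken by one call to operation(*args)."""
    start = time.perf_counter()
    operation(*args)
    return time.perf_counter() - start

def ratchet(current_budget_s, new_budget_s):
    """Return the new budget, refusing any change that would loosen it."""
    if new_budget_s > current_budget_s:
        raise ValueError("a ratchet only tightens the budget")
    return new_budget_s

def process_transaction(amounts):
    # Hypothetical stand-in for the real transaction-processing code.
    return sum(amounts)

budget_s = 5.0                     # hypothetical starting budget
budget_s = ratchet(budget_s, 1.0)  # A-S4: under 1 second per transaction

def test_single_transaction_meets_budget():
    elapsed = measure_seconds(process_transaction, [100, 250, 75])
    assert elapsed < budget_s

test_single_transaction_meets_budget()
```

A single timing sample is noisy; a real test would more likely repeat the measurement and compare a percentile against the budget.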

Project A explained that separating performance-related from feature-related requirements in the backlog or when planning and allocating work to iterations was not useful. Project A followed evolutionary incremental development by doing performance-related analysis work concurrently with, and loosely coupled to, implementation sprint work. Separating feature work from performance work is a common pattern that we observe in industry. As analysis work was completed, the work was allocated to sprints. Well-understood changes refining existing features, such as A-S1 through A-S3, were allocated to implementation sprints with minimal analysis. However, in cases where significant analysis was needed (e.g., A-S4), the team created an exploratory prototype to learn more about the problem and investigate alternative solutions while continuing to mature the system and implement ongoing requirements. For A-S4 the changes were more substantial, so the work was allocated to multiple sprints.

Project B

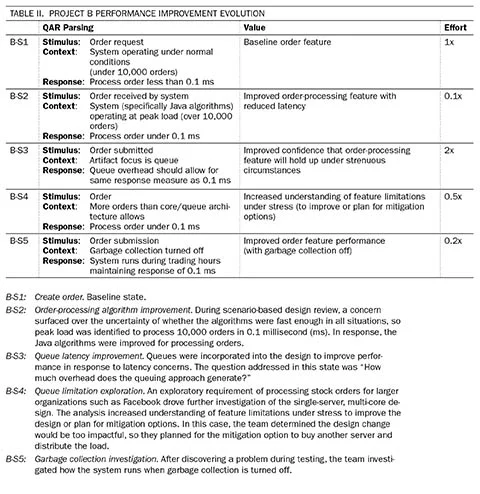

Project B, described in Table 2, was also a financial system with stringent performance requirements. The system functionality included high-speed stock order processing. As with Project A, the performance iterations are described as state transitions. This project was in the pre-release phase, focusing on evaluating the design to ensure it could meet requirements. The team used a scenario-driven approach to investigate performance risks. The output of scenario analysis is input to the evolving system design and architecture of the next state, as described below.

The analysis of this example also shows changes to the stimulus, context, and response, as summarized below.

- Stimulus: B-S3 shows variations in the stimulus with the artifact changing to focus on a specific part of the system, the queue.

- Context: B-S2 shows variations in the context by increasing the concurrently processed orders to 1,000 orders while maintaining response time at 0.1 ms.

- Response: The response goal remained consistent at 0.1 ms throughout all the state transitions. In interviews, however, we discovered that this target was reached over several rounds of tweaking the design, analyzing the response under increasingly stringent conditions, such as described in B-S2, and making incremental improvements.
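Holding the response goal fixed while making the context more demanding can be expressed as a check over a ladder of load levels. The sketch below is an assumption-laden illustration: `first_failing_level` and both latency models are hypothetical, the intermediate load levels are invented, and only the 0.1 ms goal and the 1,000-order level come from the description above. The measurement is injected as a function so that the same check could be run against a design model, a prototype, or the real system.

```python
from typing import Callable, Iterable, Optional

TARGET_MS = 0.1  # response goal held constant across Project B's states

def first_failing_level(levels: Iterable[int],
                        measure_ms: Callable[[int], float],
                        target_ms: float = TARGET_MS) -> Optional[int]:
    """Return the lowest load level that misses the target, or None."""
    for concurrent_orders in sorted(levels):
        if measure_ms(concurrent_orders) > target_ms:
            return concurrent_orders
    return None

# Hypothetical latency models for two design rounds (not measured data).
def early_design_ms(n: int) -> float:
    return 0.02 + n * 0.0001

def revised_design_ms(n: int) -> float:
    return 0.02 + n * 0.00005

levels = [10, 100, 1000]  # 1,000 is the B-S2 level; the others are invented
print(first_failing_level(levels, early_design_ms))    # 1000
print(first_failing_level(levels, revised_design_ms))  # None
```

Reporting the first failing level, rather than a plain pass or fail, shows how far the current design can be pushed before another round of analysis is needed.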

Project B did not separate feature-related and performance-related requirements in their software development lifecycle either. Like Project A, the team conducted exploratory performance analysis and design work concurrently with maturing other features and continuing implementation. Work was integrated into implementation sprints as it became better defined through analysis of design artifacts, prototyping, or both.

Conclusions

Ultimately, the purpose of refining QARs is to decompose a stakeholder need or business goal into iteration-sized pieces. The process of allocation then takes those pieces and determines when to work on them. This activity involves both analysis and design: refinement and allocation are explorations of the problem and solution spaces, and evolutionary, iterative development allows for course changes when new information is acquired. Developers work toward satisfying cross-cutting concerns in the context of the effort and ultimate value.

We observed that developers refined performance requirements using a feedback-driven approach, which allowed them to parse the evolving performance requirement to meet increasing user expectations over time (expressed as state transitions). Within each state transition, developers refined cross-cutting concerns into requirements by breaking them into their constituent parts in terms of the scope of the system and response to stimuli in a given context. The system and cross-cutting performance requirements evolve as stimuli, context, and response are ratcheted.

We see evidence of projects that are better able to sustain their development cadence with a combination of refinement and allocation techniques guided by measures for requirement satisfaction, value, and development effort. As we retrospectively analyzed these examples, we found that these teams did not follow a formal technique; however, they did have common elements in how they refined the work into smaller chunks, enabling incremental requirements analysis and allocation of work into implementation increments.

Martin Fowler describes ratcheting a performance requirement as periodically increasing a specific response measure. Based on what we learned from our interviews, we suggest that this ratcheting concept can be broadened. For example, changes in the evolving context, such as increasing the number of orders to be processed or reducing the performance threshold for a single order, allow for breaking a cross-cutting concern into a reasonably sized chunk of work for analysis, allocation, or testing. We suggest that these examples, which demonstrate ratcheting in multiple dimensions, could be useful for teams struggling with how to break up and evolve cross-cutting concerns during iterative and incremental development.

Additional Resources

This blog post is a summary of a paper that was presented at the International Conference on Software Maintenance and Evolution.
