DevOps and Docker

Docker is quite the buzz in the DevOps community these days, and for good reason. Docker containers provide the tools to develop and deploy software applications in a controlled, isolated, flexible, highly portable infrastructure. Docker offers substantial benefits to scalability, resource efficiency, and resiliency, as we'll demonstrate in this post and in upcoming posts in the DevOps blog series.

Linux container technology (LXC), which provides the foundation that Docker is built upon, is not a new idea. The key kernel feature that LXC relies on, control groups (cgroups), was merged into the mainline Linux kernel in version 2.6.24. Google was already working with cgroups as early as 2006, since it has long looked for ways to isolate resources running on shared hardware. In fact, Google acknowledges firing up over 2 billion containers a week and has released its own open source container stack, lmctfy ("Let Me Contain That For You").
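On a Linux host you can see cgroups at work directly: every process can read `/proc/self/cgroup`, which lists one line per hierarchy in the form `hierarchy-ID:controller-list:cgroup-path` (as documented in the cgroups(7) man page). The Python sketch below parses that format. The names `CgroupEntry` and `parse_cgroup_file` are hypothetical helpers for illustration, and the exact paths you see will depend on your kernel, cgroup version, and container runtime.

```python
import os
from typing import List, NamedTuple


class CgroupEntry(NamedTuple):
    hierarchy_id: int
    controllers: List[str]  # empty for the unified (cgroup v2) hierarchy
    path: str


def parse_cgroup_file(text: str) -> List[CgroupEntry]:
    """Parse the contents of /proc/<pid>/cgroup (format described in cgroups(7))."""
    entries = []
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        # maxsplit=2 keeps any ':' that appears inside the cgroup path intact
        hierarchy_id, controllers, path = line.split(":", 2)
        entries.append(
            CgroupEntry(
                hierarchy_id=int(hierarchy_id),
                controllers=[c for c in controllers.split(",") if c],
                path=path,
            )
        )
    return entries


if __name__ == "__main__":
    proc_file = "/proc/self/cgroup"
    if os.path.exists(proc_file):
        with open(proc_file) as f:
            for entry in parse_cgroup_file(f.read()):
                print(entry)
    else:
        print("No /proc/self/cgroup here: not a Linux system with cgroups")
```

Running the same script on the host and inside a container is a quick way to compare the cgroup membership each process reports.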

Unfortunately, none of this technology was easy to adopt until Docker came along and simplified it. Before Docker, developers had a hard time accessing, implementing, or even understanding LXC, let alone its advantages over hypervisors. DotCloud founder and current Docker chief technology officer Solomon Hykes was on to something big when he began the Docker project and released it as open source in March 2013. Docker's ease of use stems from its high-level API and documentation, which enabled the DevOps community to dive in full force and create tutorials, official containerized applications, and many additional technologies. By lowering the barrier to entry for container technology, Docker has changed the way developers share, test, and deploy applications.

How can Docker help us in DevOps? Well, developers can now package up all the runtimes and libraries necessary to develop, test, and execute an application in an efficient, standardized way and be assured that it will deploy successfully in any environment that supports Docker.

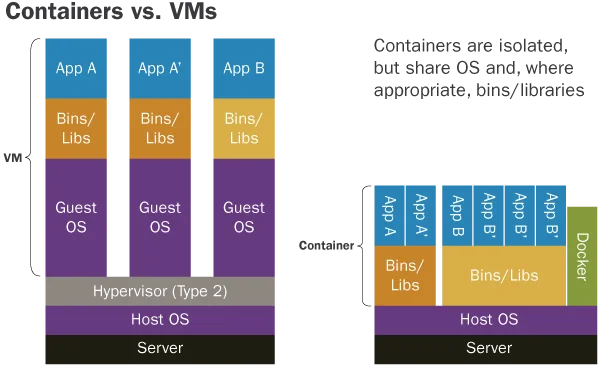

Initial reactions to container technology often compare containers to small virtual machines. However, the advantages of containers over VMs become apparent with regard to performance. In particular, a Dockerized application starts quickly, without performing all of the steps associated with booting a full operating system, because containers share the host operating system kernel, along with other binaries and libraries where appropriate. The image below, from the Docker website, highlights the differences; note in particular how containers incur much less time and space overhead than virtual machines.

Docker vs Virtual Machine

Another great feature is the built-in versioning that Docker provides. This "git-like" versioning system can track changes made to a container, which can then be committed as a new version of an image, and both public and private repositories (if your organization desires or requires them) can be used to store the versioned images.
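Much of this git-like behavior comes from content addressing. In today's Docker and OCI image format, each layer is identified by a SHA-256 digest, and the OCI image specification defines a layer's ChainID as `ChainID(L0) = DiffID(L0)` and `ChainID(L0|...|Ln) = Digest(ChainID(L0|...|Ln-1) + " " + DiffID(Ln))`. The Python sketch below applies that rule. It is a simplified illustration, not Docker's implementation: the byte strings stand in for real uncompressed layer archives, and `digest` and `chain_ids` are hypothetical helper names.

```python
import hashlib
from typing import List


def digest(data: bytes) -> str:
    """OCI-style digest string: algorithm prefix plus hex-encoded SHA-256."""
    return "sha256:" + hashlib.sha256(data).hexdigest()


def chain_ids(layer_blobs: List[bytes]) -> List[str]:
    """Compute ChainIDs bottom-up, following the OCI image-spec rule:
    ChainID(L0) = DiffID(L0)
    ChainID(L0|...|Ln) = Digest(ChainID(L0|...|Ln-1) + " " + DiffID(Ln))
    Each blob stands in for an uncompressed layer archive, so its DiffID
    is simply the digest of the blob.
    """
    result: List[str] = []
    for blob in layer_blobs:
        diff_id = digest(blob)
        if not result:
            result.append(diff_id)  # the bottom layer's ChainID is its DiffID
        else:
            result.append(digest((result[-1] + " " + diff_id).encode()))
    return result


if __name__ == "__main__":
    base = [b"base OS files", b"language runtime"]
    v1 = chain_ids(base + [b"app v1"])
    v2 = chain_ids(base + [b"app v2"])
    # Shared lower layers keep identical IDs; only the top layer differs.
    for a, b in zip(v1, v2):
        print(a[:19], b[:19], "same" if a == b else "changed")
```

Because each ChainID folds in the ChainID of the layer beneath it, changing an upper layer leaves the IDs below it untouched, while changing the bottom layer changes every ID above it, much as a git commit hash depends on its parent.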

Docker had a big impact in 2014, and in 2015 you can expect even greater adoption by both small and large companies. This uptake is already evident in how quickly major cloud services, such as Amazon Web Services and Microsoft Azure, have added Docker support.

We expect Docker to play a key role in future conversations about designing, building, and deploying applications, especially given its promise that an application will run in a production or customer environment just as it did during development and testing. A few weaknesses become evident in communication between Docker containers running on different servers, but this should improve with time. You can also expect some competition around the corner in 2015. If you haven't tried Docker yet, definitely give it a try. This technology is really just beginning to fire on all cylinders, and much more is to come.

Every two weeks, the SEI will publish a new blog post that will offer guidelines and practical advice to organizations seeking to adopt DevOps in practice. We welcome your feedback on this series, as well as suggestions for future content. Please leave feedback in the comments section below.

Additional Resources

To listen to the podcast DevOps--Transform Development and Operations for Fast, Secure Deployments, featuring Gene Kim and Julia Allen, please visit https://resources.sei.cmu.edu/library/asset-view.cfm?assetid=58525.

To read all the installments in our weekly DevOps series, please click here.

Published in the series thus far:

- An Introduction to DevOps

- A Generalized Model for Automated DevOps

- A New Weekly Blog Series to Help Organizations Adopt & Implement DevOps

- DevOps Enhances Software Quality

- DevOps and Agile

- What is DevOps?

- Security in Continuous Integration

- DevOps Technologies: Vagrant

- DevOps and Your Organization: Where to Begin
