Is Your Organization Using Cybersecurity Analysis Effectively?

PUBLISHED IN

Situational Awareness

Cybersecurity analysis techniques and practices are key components of maintaining situational awareness (SA) for cybersecurity. In this blog post in our series on cyber SA in the enterprise, I define the term analysis, describe what constitutes a security problem that analysts seek to identify, and survey analysis methods. Organizations and analysts can ensure that they are covering the full range of analytical methods by reviewing the matrix of six methods that I present below (see Table 1).

Analysis is the process of using data, context, analytical techniques, and critical thinking skills to synthesize data, answer questions, or test hypotheses, and then making the results available and usable to help people make effective decisions. The SEI-developed cyber intelligence framework defines the components of analysis:

- analytical acumen--the skills and knowledge of an analyst

- environmental context--the circumstances that influence events and allow interpretation of data

- data gathering--acquisition of relevant details

- microanalysis--assessment of the what and how of a problem under review

- macroanalysis--assessment of the who and why of a problem under review

- reporting and feedback--presentation of information and potential courses of action to help decision makers make better decisions

This framework helps analysts think about what they are trying to accomplish and how. It also shows how broad an analysis task can be, extending well beyond microanalysis and macroanalysis.

Successful analysis requires analysts who have the skills to

- identify the questions to answer or problems to solve

- understand how the environmental context influences the data gathered and the interpretation of the analytic results

- account for assumptions and cognitive biases

- communicate findings effectively and recognize where there may be alternative interpretations of results

For more information on the process of analysis, we recommend Psychology of Intelligence Analysis by Richards J. Heuer, Jr., and the SEI workshop presentation, Thinking Like an Analyst.

What Constitutes a Cybersecurity Problem?

Although definitions vary across organizations and contexts, threats, attacks, compromises, and vulnerabilities are the most commonly recognized cybersecurity problems. Threats, attacks, and compromises fall along a continuum from potential to active to successful. To prevent--or at least mitigate or minimize--loss due to security incidents, organizations must identify threats, attacks, and compromises as soon as possible, while they are still "potential" rather than active or successful.

Analysts must have sufficient resources, including an understanding of what they need to detect. The absence of this understanding is a surprisingly common knowledge gap for security analysts. Many organizations do not have robust and accessible documentation of their assets, policies, business processes, and priorities. Too often, analysts are told to "find the bad stuff" with no definition of what that stuff is, or with only a generic one, such as malware, exfiltration, or account compromise.

In our introductory post in this series, we identified the components of situational awareness:

- Know what should be.

- Track what is.

- Infer when should be and is do not match.

- Do something about the differences.
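As a toy illustration of the first three components, the sketch below records "what should be" as a hypothetical mapping from each host to the ports it is expected to listen on, takes "what is" in the same shape (for example, from a scan), and reports every mismatch. The host names, ports, and the `compare_state` function are assumptions for illustration only; a real inventory of what should be captures far more than listening ports.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical shape: host name -> set of TCP ports the host listens on.
State = Dict[str, Set[int]]
Finding = Tuple[str, str, List[int]]


def compare_state(expected: State, observed: State) -> List[Finding]:
    """Infer where "what should be" (expected) and "what is" (observed) differ."""
    findings: List[Finding] = []
    for host in sorted(expected.keys() | observed.keys()):
        exp = expected.get(host)
        obs = observed.get(host)
        if exp is None:
            # Seen in current data but absent from the inventory.
            findings.append((host, "unexpected host", sorted(obs)))
        elif obs is None:
            # In the inventory but not seen in current data.
            findings.append((host, "expected host not observed", sorted(exp)))
        else:
            if obs - exp:
                findings.append((host, "unexpected ports", sorted(obs - exp)))
            if exp - obs:
                findings.append((host, "expected ports missing", sorted(exp - obs)))
    return findings


expected = {"web01": {443}, "db01": {5432}}
observed = {"web01": {443, 4444}, "laptop7": {22}}
for finding in compare_state(expected, observed):
    print(finding)
```

Each finding is only an input to the fourth component; the sketch deliberately stops short of deciding whether a given mismatch is actually a problem.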

When analysts lack comprehensive knowledge of what should be, against which to compare what is, they succeed only in finding the most visible and easiest-to-fix problems. Finding such problems is important in overall risk management, since no one is happy when a security problem occurs and causes loss that could easily have been prevented or quickly mitigated. However, in an organization with poor security controls, a focus on the visible, easy-to-fix problems can quickly consume the security analysts' time. Such a focus could prevent them from getting ahead of the problems or, worse, from catching up with the volume of problems that occur. Moreover, the visible, easy-to-fix problems are seldom those that target the organization's critical business processes or that could cause the most harm.

Detecting incidents should not be an organization's sole focus when addressing security problems. Vulnerabilities in assets or systems are what make security incidents possible. While vulnerabilities often result from something being wrong with technology--commonly referred to as a "bug"--this is not the case with all vulnerabilities. Bugs are the simplest vulnerabilities to control for, since the analyst can quickly implement patches and apply defense-in-depth strategies that greatly mitigate (if not completely eliminate) the possibility of those bugs being exploited. Other vulnerabilities, especially those rooted in the human propensity to succumb to social engineering, are harder to protect from exploitation.

Each component of situational awareness (know what should be, track what is, and so on) must be supported by capable and empowered staff who take responsibility for the associated tasks, by funding for appropriate tools, and by sufficient staff hours to adequately address the needs of that component. For more information about how organizations can assess and improve their ability to manage cybersecurity problems, see the SEI technical report, Incident Management Capability Assessment.

Analytical Methods

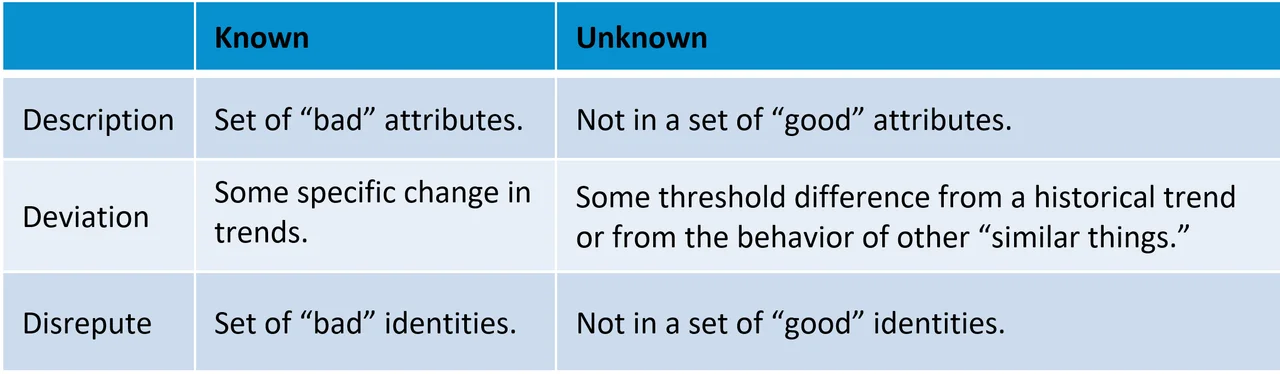

There are a number of common techniques that come up in discussions about detecting security problems, including signatures, anomalies, reputation, blocklists, indicators, knowns, unknowns, and deviations. Each technique may have a slightly different connotation depending on who is using the term, and their definitions often overlap. To minimize possible confusion around these terms, we identify six categories of analytical methods for security-problem detection, which we represent as a matrix. The matrix has three rows, one for each type of analytic (description, deviation, and disrepute), and two columns that indicate whether the analytic is built from specific information we want to find (known) or from information that does not match the way we expect things to be (unknown).

Known description analytics are those that look for items that have a set of attributes that raise concerns. A simple example of such an analytic would inspect TCP packet headers to find any that have all of the TCP flags set, indicating a Christmas tree scan. A more complex example could be a machine-learning analytic that uses supervised learning to identify the attributes most likely to indicate a phishing email based on a corpus of known phishing and non-phishing emails.
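A minimal sketch of the simple known-description analytic, assuming raw TCP headers are already available as bytes (packet capture and IP-layer parsing are out of scope). It reads the flag byte at offset 13 of the TCP header and flags packets on which all six classic control bits (URG, ACK, PSH, RST, SYN, FIN) are set. Treating those six bits as "all of the TCP flags" is an assumption, and the helper `make_tcp_header` is hypothetical and exists only to build test input.

```python
import struct

# Classic TCP control bits, found in byte 13 of the TCP header.
FIN, SYN, RST, PSH, ACK, URG = 0x01, 0x02, 0x04, 0x08, 0x10, 0x20
ALL_CLASSIC_FLAGS = FIN | SYN | RST | PSH | ACK | URG  # 0x3F


def is_christmas_tree(tcp_header: bytes) -> bool:
    """Known-description analytic: True if every classic TCP flag is set."""
    if len(tcp_header) < 20:
        raise ValueError("a TCP header is at least 20 bytes long")
    flags = tcp_header[13]
    return (flags & ALL_CLASSIC_FLAGS) == ALL_CLASSIC_FLAGS


def make_tcp_header(flags: int) -> bytes:
    """Hypothetical test helper: build a minimal 20-byte TCP header."""
    data_offset = 5 << 4  # header length of 5 32-bit words, no options
    # src port, dst port, seq, ack, data offset, flags, window, checksum, urgent ptr
    return struct.pack("!HHIIBBHHH", 40000, 80, 0, 0, data_offset, flags, 1024, 0, 0)


print(is_christmas_tree(make_tcp_header(ALL_CLASSIC_FLAGS)))  # True
print(is_christmas_tree(make_tcp_header(SYN)))                # False
```

Some tools, such as Nmap's Xmas scan, set only FIN, PSH, and URG, so a production analytic would likely check for that combination as well.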

Unknown description analytics look for items that have attributes that do not match expected attributes, such as protocol standards or authentication policies. A simple analytic example of this type is looking at network traffic for any IP addresses contacting a Domain Name System (DNS) server over a non-standard DNS port.
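A minimal sketch of the DNS example, assuming flow records have already been reduced to source address, destination address, and destination port, and that the organization knows which addresses are its DNS servers. The `Flow` type, the server list, and the choice of port 53 as the only expected port are assumptions; an organization that permits DNS over TLS would also expect port 853.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, List, Set

STANDARD_DNS_PORTS: FrozenSet[int] = frozenset({53})


@dataclass(frozen=True)
class Flow:
    """Hypothetical, minimal flow record."""
    src_ip: str
    dst_ip: str
    dst_port: int


def nonstandard_dns_clients(flows: Iterable[Flow], dns_servers: Set[str],
                            standard_ports: FrozenSet[int] = STANDARD_DNS_PORTS) -> List[str]:
    """Unknown-description analytic: clients reaching a DNS server on an unexpected port."""
    return sorted({
        f.src_ip for f in flows
        if f.dst_ip in dns_servers and f.dst_port not in standard_ports
    })


dns_servers = {"192.0.2.53"}
flows = [
    Flow("10.1.1.10", "192.0.2.53", 53),     # expected
    Flow("10.1.1.11", "192.0.2.53", 5353),   # non-standard port
    Flow("10.1.1.12", "198.51.100.7", 443),  # not a DNS server; ignored
]
print(nonstandard_dns_clients(flows, dns_servers))  # ['10.1.1.11']
```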

Known deviations look for specific changes in behavior or metrics. One example is an analytic that detects participation in Network Time Protocol (NTP) distributed denial-of-service (DDoS) attacks. These attacks exploit specific NTP server configurations to amplify network traffic volume by producing large responses, so the analytic looks for cases where the ratio of outgoing response volume to incoming request volume exceeds 555:1. Another example is an analytic that looks for endpoint devices that change from data consumers to data producers.
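The NTP example reduces to a per-host ratio test. In this sketch, each record is a hypothetical `(host, direction, bytes)` tuple summarizing UDP port 123 traffic, where `"in"` means requests received and `"out"` means responses sent; the 555:1 threshold is the one given in the text. A second function sketches the consumer-to-producer change by comparing inbound and outbound byte counts across two periods. The record format, function names, and period totals are all assumed inputs, not part of any specific tool.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

AMPLIFICATION_RATIO = 555  # outgoing:incoming threshold from the text


def ntp_amplifiers(records: Iterable[Tuple[str, str, int]]) -> List[str]:
    """Flag hosts whose NTP response bytes exceed 555 times their request bytes."""
    totals: Dict[str, Dict[str, int]] = defaultdict(lambda: {"in": 0, "out": 0})
    for host, direction, nbytes in records:  # direction must be "in" or "out"
        totals[host][direction] += nbytes
    # Hosts with no observed requests are skipped to avoid dividing by zero.
    return sorted(
        host
        for host, t in totals.items()
        if t["in"] > 0 and t["out"] / t["in"] > AMPLIFICATION_RATIO
    )


def became_producer(prev_in: int, prev_out: int, cur_in: int, cur_out: int) -> bool:
    """True if a device sent no more than it received before, but sends more now."""
    return prev_out <= prev_in and cur_out > cur_in


records = [
    ("10.0.0.5", "in", 100), ("10.0.0.5", "out", 60_000),  # ratio 600:1
    ("10.0.0.6", "in", 100), ("10.0.0.6", "out", 5_000),   # ratio 50:1
]
print(ntp_amplifiers(records))                    # ['10.0.0.5']
print(became_producer(1_000, 100, 1_000, 5_000))  # True
```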

Unknown deviations look for differences that exceed some threshold, whether from a historical trend or from the behavior of other, similar things. A simple analytic would look for devices whose outgoing byte volume at least quadrupled from the previous week. A more involved analytic could use an unsupervised machine-learning technique to cluster items and then look for low-density clusters or for items that don't fit in any of the clusters.
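The simple unknown-deviation analytic compares each device against its own history. This sketch assumes weekly outgoing byte totals per device are already available as dictionaries; the names are hypothetical, and skipping devices with no prior-week baseline (since any traffic trivially exceeds four times zero) is an assumed design choice. It does not cover the clustering approach.

```python
from typing import Dict, List

GROWTH_FACTOR = 4.0  # "at least quadrupled"


def volume_spikes(previous_week: Dict[str, int], current_week: Dict[str, int],
                  factor: float = GROWTH_FACTOR) -> List[str]:
    """Return devices whose outgoing bytes grew by at least `factor` week over week."""
    flagged = []
    for device, current in current_week.items():
        baseline = previous_week.get(device, 0)
        if baseline <= 0:
            # No usable history; new or previously silent devices need a separate rule.
            continue
        if current >= factor * baseline:
            flagged.append(device)
    return sorted(flagged)


last_week = {"hostA": 1_000, "hostB": 1_000, "hostC": 0}
this_week = {"hostA": 4_000, "hostB": 3_999, "hostC": 500, "hostD": 10}
print(volume_spikes(last_week, this_week))  # ['hostA']
```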

Known disrepute analytics are what have commonly been called blacklists. These blocklist analytics use various search methods to detect specific IP addresses, domains, file names, processes, or hashes--entity identifiers. In the simplest case, these analytics do direct matching; in more complicated instances, they do fuzzy matching.
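A sketch of direct and fuzzy matching against a blocklist, using Python's standard `difflib` module for the fuzzy step. Similarity-ratio matching is just one possible fuzzy technique, swapped in here for illustration; the 0.85 cutoff, the `match_blocklist` function, and the example domains (under the reserved `.example` top-level domain) are assumptions.

```python
import difflib
from typing import Optional, Set, Tuple

# Hypothetical blocklist entries, stored lowercase.
BLOCKLIST: Set[str] = {"evil.example", "bad.example"}


def match_blocklist(indicator: str, blocklist: Set[str] = BLOCKLIST,
                    cutoff: float = 0.85) -> Optional[Tuple[str, str]]:
    """Known-disrepute analytic: direct match first, then fuzzy match."""
    value = indicator.strip().lower()
    if value in blocklist:
        return ("exact", value)
    # difflib scores similarity in [0, 1]; keep the best candidate at or above cutoff.
    close = difflib.get_close_matches(value, sorted(blocklist), n=1, cutoff=cutoff)
    if close:
        return ("fuzzy", close[0])
    return None


print(match_blocklist("EVIL.example"))  # ('exact', 'evil.example')
print(match_blocklist("evi1.example"))  # ('fuzzy', 'evil.example')
print(match_blocklist("benign.org"))    # None
```

A fuzzy hit such as `evi1.example` can catch look-alike domains, but lowering the cutoff also raises the false-positive rate, so the threshold would need tuning.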

Unknown disrepute analytics are what have commonly been called whitelists. These permit-only (allowlist) analytics detect entity identifiers that are not in a relatively small set of things that are known to be safe.
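A permit-only analytic inverts the blocklist logic: anything not on the small known-good list is reported. This sketch assumes the entity identifiers are SHA-256 digests of executable files; the allowlist contents and the `unapproved` function are hypothetical, and in practice the file contents would be read from disk rather than supplied inline.

```python
import hashlib
from typing import Dict, List, Set


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical allowlist: digests of the executables approved to run.
APPROVED: Set[str] = {sha256_hex(b"approved-binary-1"), sha256_hex(b"approved-binary-2")}


def unapproved(executables: Dict[str, bytes], allowlist: Set[str] = APPROVED) -> List[str]:
    """Unknown-disrepute analytic: names whose digest is not on the allowlist."""
    return sorted(name for name, data in executables.items()
                  if sha256_hex(data) not in allowlist)


observed = {"editor.exe": b"approved-binary-1", "dropper.exe": b"unfamiliar bytes"}
print(unapproved(observed))  # ['dropper.exe']
```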

Each of these analytics can provide value to security practitioners, but which ones are most useful will depend on the analyst's role and goal. Known descriptions and the two disrepute methods are the most common methods for generating security alerts. They are also the methods that analysts usually view as most accurate, in part because they are more likely to directly assess when "know what should be" and "track what is" do not match.

The other three methods (unknown descriptions, known deviations, and unknown deviations) are more often used for hunting activities. In particular, they do not directly compare "know what should be" with "track what is"; instead, they are methods for inference. When they are used to generate alerts, they tend to flag many items that are not security problems, and they require tuning, often on an ongoing basis.

Wrapping Up and Looking Ahead

All the methods described in this post are most effective when they are developed using good analysis techniques:

- Have a clear, specific goal and a knowledge of how to evaluate whether the goal is achieved.

- Choose an appropriate analytical method and analysis tools to meet the goal and work with the available data.

- Incorporate environmental context into both the analysis and interpretation. This is possible only when analysts have a good understanding of the "know what should be" aspect of situational awareness.

- Use the data that are most relevant to the goal and contain characteristics that adequately distinguish the items of interest, which may require integrating multiple data sources.

- Communicate results effectively to the appropriate parties: asset owners, managers, incident responders, or, through ticket documentation, fellow analysts.

Ideally, organizations will have both the processes and architecture in place to provide their analysts with comprehensive situational awareness, which is a prerequisite for applying these techniques.

In our next post in this series on cyber situational awareness in the enterprise, we will discuss situational awareness and the security operations center (SOC).

Additional Resources

Read Psychology of Intelligence Analysis by Richards J. Heuer, Jr.

View the SEI workshop presentation, Thinking Like an Analyst.

Read the SEI technical report, Incident Management Capability Assessment.

Read the first blog post in this series on network situational awareness, Situational Awareness for Cybersecurity: An Introduction.

Read the second post in this series, Situational Awareness for Cybersecurity: Assets and Risk.

Read the third post in this series, Situational Awareness for Cyber Security: Three Key Principles of Effective Policies and Controls.

Read the fourth post in this series, Engineering for Cyber Situational Awareness: Endpoint Visibility.

Read the fifth post in this series, Situational Awareness for Cybersecurity Architecture: Network Visibility.

Read the sixth post in this series, Situational Awareness for Cyber Security Architecture: Tools for Monitoring and Response.

Read the seventh post in this series, Situational Awareness for Cybersecurity Architecture: 5 Recommendations.
