Best Practices for Cloud Security

PUBLISHED IN

Cloud Computing

As detailed in last week's post, SEI researchers recently identified a collection of vulnerabilities and risks faced by organizations moving data and applications to the cloud. In this blog post, we outline best practices that organizations should use to address the vulnerabilities and risks in moving applications and data to cloud services.

These practices are geared toward small and medium-sized organizations; however, all organizations, independent of size, can use these practices to improve the security of their cloud usage. It is important to note that these best practices are not complete and should be complemented with practices provided by cloud service providers, general best cybersecurity practices, regulatory compliance requirements, and practices defined by cloud trade associations, such as the Cloud Security Alliance.

As we stressed in our previous post, organizations must perform due diligence before moving data or applications to the cloud. Cloud service providers (CSPs) use a shared responsibility model for security. The CSP accepts responsibility for some aspects of security. Other aspects of security are shared between the CSP and the consumer or remain the sole responsibility of the consumer.

This post details four important practices, and specific actions, that organizations can use to feel secure in the cloud.

Perform Due Diligence

Cloud consumers must fully understand their networks and applications to determine how to provide functionality, resilience, and security for cloud-deployed applications and systems. Due diligence must be performed across the lifecycle of applications and systems being deployed to the cloud, including planning, development and deployment, operations, and decommissioning, as described below.

- Planning. The first step in a successful cloud deployment is selecting an appropriate system or application to move to, build in, or buy from a CSP--a challenging task for a first-time cloud deployment. Benefit from the experience of others and use a cloud adoption framework to enable efficient use of cloud services and consistent architectural designs. A framework provides a governing process for identifying applications, selecting cloud providers, and managing the ongoing operational tasks associated with public cloud services. Cloud adoption frameworks may be CSP-specific or CSP-agnostic.

Having used a cloud adoption framework to identify both a target system and/or application for cloud deployment and a CSP, educate all staff involved in the deployment on the basics of the selected CSP, architecture, services, and tools available to assist in the deployment. Make sure everyone understands the CSP's shared responsibility model and its impact on their role in the cloud deployment.

- Development and Deployment. The system or application development and deployment team should be trained in the details of correctly using CSP services to implement applications. CSPs provide guidance and documentation on best practices for using their services. If architects are developing a new application or system for the cloud, they should design and develop the system using the CSP's guidance. If migrating an existing application or system, review its architecture and implementation relative to the CSP's guidance--this may include talking to CSP technical support staff--to determine what changes will be needed to deploy the application appropriately.

Cloud computing is based on delivery of abstracted services that often closely resemble existing hardware, networks, and applications. It is critical for effective security, however, that consumers realize these are only abstractions carefully constructed to resemble information technology resources organizations currently use. Review the organization's security policies and current security control implementation approaches. Check the CSP's guidance before implementing the on-premises approach in the cloud. First, verify that the on-premises approach would be effective if implemented in the cloud. Then see if CSP services provide a better implementation approach that still meets security policy goals.

Moving to a cloud environment may present risks that were not present in the on-premises deployment of applications and systems. Check for new risks and identify any new security controls needed to mitigate these risks. Again, consider how CSP-provided control implementations can help. Likewise, use CSP-provided tools to check for proper and secure usage of services.

- Operation. Once developed and deployed, applications and systems must be operated securely. Unlike physical servers, disks, and networking devices, the cloud virtual infrastructure is defined by software. Consequently, the infrastructure can be treated as source code, which should be managed in a source code control system with change control procedures enforced. Source code control systems have proven effective in managing software development, and the same practices can be adapted to manage cloud infrastructure. Changes to production resources should require independent approval before a system manager implements them.

- Decommissioning. There are a variety of reasons that it may be necessary to decommission a cloud-deployed application or system--possibly quickly. For example, the CSP could go out of business or discontinue key services used by the application. CSP prices could increase, making the current deployment too expensive. Whatever the reason, planning for decommissioning a cloud application or system should be done before deployment. Cloud services are currently unique to each CSP, so moving an application or system from one CSP to another is likely to be a major effort. Consider what would be involved in leaving a CSP. The most important part of any application or system to the organization is the data stored and processed within. It is therefore critical to understand how the data can be extracted from one CSP and moved to another.

- Develop a Multiple-CSP Strategy. In this CIO.com article, several chief information officers (CIOs) provide perspectives on the need for a multi-CSP strategy. When making the initial CSP selection, consider how the selected application could be deployed to more than one CSP. Mappings among CSPs, readily available on the Web, can help identify how an application architected for one CSP might be moved to another. While the application or system will be deployed to only one of these CSPs, it is important to track, during development, the aspects of the deployment that are unique to the chosen CSP and that would need to be redesigned if the application were moved.
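The Operation practice above calls for treating infrastructure definitions as source code and requiring independent approval before production changes. As a minimal sketch of such an approval gate, the Python below models a change request and checks that at least one approver is someone other than the author. The `ChangeRequest` class, its fields, and `can_apply` are hypothetical names chosen for illustration; they are not part of any CSP's tooling, and a real deployment would more likely enforce this rule in the source code control system itself.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    """A proposed change to infrastructure definitions (hypothetical model)."""
    author: str
    target_env: str                      # e.g. "production" or "staging"
    approvers: set = field(default_factory=set)


def can_apply(change: ChangeRequest) -> bool:
    """Return True if the change may be applied.

    Assumed policy: production changes need at least one approver other
    than the author; other environments need no approval in this sketch.
    """
    if change.target_env != "production":
        return True
    independent = change.approvers - {change.author}
    return len(independent) > 0


self_approved = ChangeRequest("alice", "production", {"alice"})
peer_approved = ChangeRequest("alice", "production", {"bob"})
print(can_apply(self_approved))  # False
print(can_apply(peer_approved))  # True
```

Excluding the author from the approver set is what makes the approval independent: a change that only its author has signed off on is rejected.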

Manage Access

Access management generally requires three capabilities: the ability to identify and authenticate users, the ability to assign users access rights, and the ability to create and enforce access control policies for resources, as discussed below.

- Identify and Authenticate Users. Use multifactor authentication to reduce the risk of credential compromise. Stolen privileged user credentials allow an attacker to control and configure cloud consumer resources. Use of multiple factors requires an attacker to acquire multiple, independent authentication elements, reducing the likelihood of compromise.

- Assign User Access Rights. Plan a collection of roles to fill both shared and consumer-specific responsibilities. CSPs and others, such as Gartner, provide advice on designing roles. These roles should ensure that no one person can adversely affect the entire virtual data center.

Individual developers and system managers should not have uncontrolled access to resources. Limiting access can limit the impact of a credential compromise or a malicious insider. Developers should be constrained to assigned projects. System managers should be constrained to assigned resources. Role-based access control can be used to establish privileges for developers and system managers.

- Create and Enforce Resource Access Policies. CSPs offer several different types of storage services, such as virtual disks, blob storage, and content delivery services. Each of these services may have unique access policies that must be assigned to protect the data they store. Cloud consumers must understand and configure these service-specific access policies.
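The role guidance above (developers constrained to assigned projects, system managers constrained to assigned resources) can be sketched as a deny-by-default check. The Python below is illustrative only: the role names, the `ROLE_ACTIONS` policy table, and the `is_allowed` function are assumptions made for this example, and each CSP's identity service expresses the same idea in its own policy language.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assignment:
    """Binds a user to a role within one scope (hypothetical model)."""
    user: str
    role: str    # "developer" or "system_manager" (illustrative roles)
    scope: str   # a project for developers, a resource for system managers


# Assumed policy: the actions each role may perform inside its scope.
ROLE_ACTIONS = {
    "developer": {"read", "deploy"},
    "system_manager": {"read", "configure", "restart"},
}


def is_allowed(assignments, user, action, scope):
    """Allow an action only if the user holds a role in that scope and
    the role permits the action; everything else is denied."""
    for a in assignments:
        if a.user == user and a.scope == scope:
            if action in ROLE_ACTIONS.get(a.role, set()):
                return True
    return False


assignments = [
    Assignment("dana", "developer", "billing-app"),
    Assignment("sam", "system_manager", "prod-db"),
]
print(is_allowed(assignments, "dana", "deploy", "billing-app"))     # True
print(is_allowed(assignments, "dana", "deploy", "payroll-app"))     # False
print(is_allowed(assignments, "dana", "configure", "billing-app"))  # False
```

Because access is granted only through an explicit assignment, a compromised developer credential in this sketch reaches one project rather than the entire virtual data center.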

Protect Data

Beyond access control, data protection involves three separate challenges: protecting data from unauthorized access, ensuring continued access to critical data in the event of errors and failures, and preventing the accidental disclosure of data that was supposedly deleted.

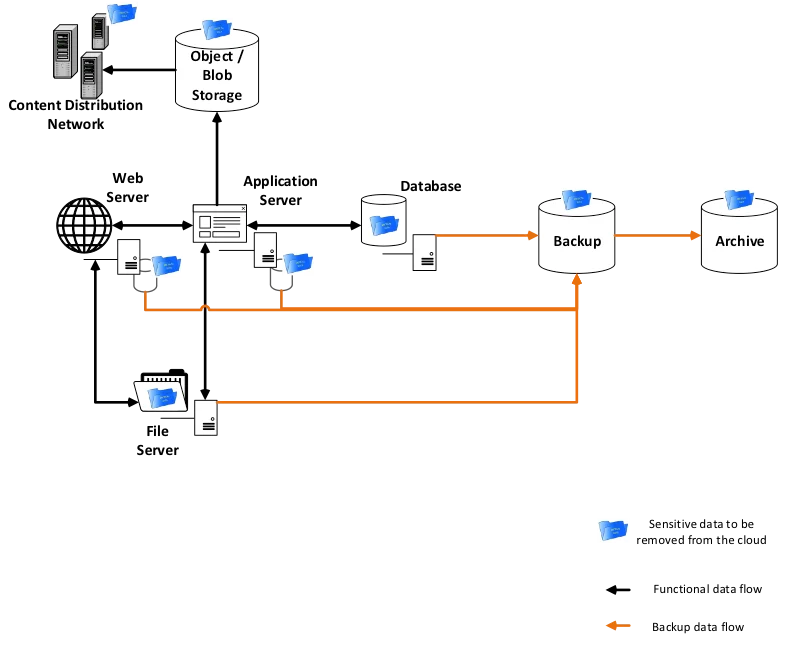

Sensitive Data in a Typical Cloud Web Application.

- Protect Data from Unauthorized Access. Encrypt data at rest to protect it from disclosure due to unauthorized access. CSPs typically provide encryption capabilities for the storage services they offer. Properly manage the associated encryption keys to ensure effective encryption. CSPs offer consumers a choice of CSP-managed or consumer-managed keys. CSP-managed keys are convenient, but provide the consumer no control over where or how the keys are stored. Consumer-managed keys place the burden of key management on the consumer but provide better control. CSPs offer hardware security modules (HSMs) in the cloud to assist in securely managing keys.

- Ensure Availability of Critical Data. CSPs provide significant guarantees against loss of persistent data. No system is perfect, however, and major cloud providers have accidentally lost customer data. In addition to CSP errors, cloud consumer staff may also make mistakes that can result in data loss. You must ensure that CSP data backup and recovery processes meet your organization's needs. Your organization may need to augment CSP processes with additional backup and recovery actions. CSPs may provide services that consumers can configure to perform additional backup and recovery operations.

- Prevent Disclosure of Deleted Data. CSPs often replicate data to ensure persistence. As shown in the figure above, during the course of system operation, sensitive data can find its way into logging and monitoring services, backups, content distribution services, and other places. When you need to delete sensitive data, or retire resources containing sensitive data, you must consider the replication and spread of data resulting from normal system operation. Analyze the cloud deployment thoroughly to understand where sensitive data may have been copied or cached, and determine what must be done to ensure these copies are deleted.

Data is ultimately stored on media, such as magnetic or solid state disks. These media devices fail regularly and must be replaced. Even though the device itself has failed, consumer data still resides in the device. You should therefore understand how the CSP handles storage media removed from production.
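One way to approach the analysis described above, finding every place sensitive data may have been copied or cached, is to record the deployment's data flows as a graph and traverse it. The Python below is a minimal sketch under that assumption. The service names and the `DATA_FLOWS` map are invented for illustration, and a real inventory would need to be built from the actual deployment and the CSP's documentation on replication.

```python
from collections import deque

# Hypothetical data flows: each service lists the services it copies data to.
DATA_FLOWS = {
    "web_app": ["database", "app_logs", "cdn"],
    "database": ["backups", "read_replica"],
    "app_logs": ["log_archive"],
    "cdn": [],
    "backups": [],
    "read_replica": [],
    "log_archive": [],
}


def locations_reached(flows, source):
    """Breadth-first traversal returning every service that data entering
    `source` may reach, including `source` itself."""
    seen = {source}
    queue = deque([source])
    while queue:
        current = queue.popleft()
        for nxt in flows.get(current, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


print(sorted(locations_reached(DATA_FLOWS, "database")))
# ['backups', 'database', 'read_replica']
```

The resulting set is a checklist of locations to purge when sensitive data is deleted or a resource is retired. It is only as complete as the flow map it was given.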

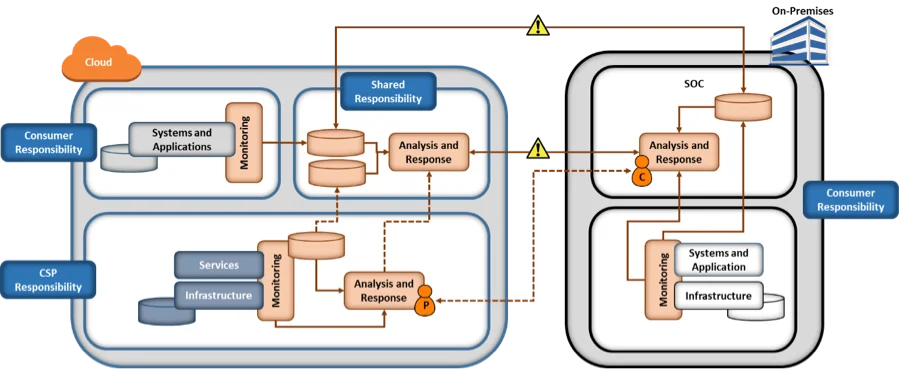

Monitor and Defend. The figure below depicts the CSP and consumer responsibilities for monitoring when some systems and applications are deployed to a CSP. Obviously, the cloud deployment adds complexity to monitoring, as discussed below.

- Monitor Cloud-Deployed Resources. The CSP is responsible for monitoring the infrastructure and services provided to consumers, but is not responsible for monitoring the systems and applications that consumers create using the provided services. The CSP provides monitoring information to the consumer that is related to the consumer's use of services. Rely on CSP-provided monitoring information as your first line of monitoring to detect unauthorized access to, or use of, systems and applications, as well as unexpected behavior of the systems, the applications, or their users.

CSP-provided monitoring data is obviously different from the data collected in on-premises monitoring. You must therefore learn how to use the new data to defend your cloud-based resources. Understand the meaning of the CSP-provided data, determine what is normal for your cloud deployment, and use CSP-provided tools to detect anomalies. The SANS Reading Room contains a variety of short papers that address using CSP-provided data to effectively monitor cloud deployments.

To the extent possible, use CSP-provided monitoring data, but you may want to augment it with additional monitoring of your cloud-based resources. Be aware that monitoring approaches used on premises may not work in the cloud. For example, virtual routers do not provide the equivalent of span ports that can see all network traffic, complicating monitoring that is flow-based. Consumers will need to design and implement any additional monitoring carefully to ensure it is fully integrated with cloud automation (e.g., autoscaling in infrastructure as a service (IaaS)) and can be scaled up or down without manual intervention.

- Analyze Both Cloud and On-Premises Monitoring. With a hybrid cloud deployment that moves some resources to a CSP but retains many resources on premises, there is a need to combine CSP-provided monitoring information, consumer cloud-based monitoring information, and consumer on-premises monitoring information to create a complete picture of the organization's cybersecurity posture. The figure above shows a cloud-based monitoring and analysis enclave in which all three monitoring data sources are combined. While this enclave could be placed within the cloud or on premises, there may be advantages to a cloud deployment.

First, CSPs typically charge for data transfers into and out of their services. To encourage continued and potentially growing use of their services, CSPs often charge more for transfers out of the cloud than they do for transfers into the cloud. Depending on the volume of data involved, it may therefore simply be cheaper to move data from on-premises monitoring into the cloud than it is to move cloud-based monitoring data to an on-premises enclave. Second, storage for large volumes of data may be cheaper in the cloud, especially storage for archived data being preserved but not actively used. Third, consumers can benefit from the inherent elasticity of the cloud, rapidly increasing analysis capacity when needed and decreasing capacity to save money when it is not needed.

- Coordinate with the CSP. The CSP is responsible for monitoring the infrastructure used to provide cloud services, including virtual machines, networks, and storage with IaaS, or entire applications with software as a service (SaaS). The figure above shows a dashed line between the CSP's security analyst (P) and the consumer's security analyst (C). The CSP may detect events that could adversely affect the consumer's applications. If so, the CSP will need to inform the consumer and coordinate a response. Similarly, the consumer may detect adverse events and need assistance investigating them.

As with all aspects of cloud computing, responding to security events is a shared responsibility. You need to learn how to collaborate with the CSP to investigate and respond to potential security incidents. To collaborate effectively, you need to understand what information the CSP can share, how the information will be shared, and the limits within which the CSP can provide assistance. The CSP cannot share information about another customer or provide assistance that would affect another consumer's use of services. Your standard operating procedures (SOPs) should be updated to reflect collaboration with the CSP.
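Returning to the earlier advice to determine what is normal for a cloud deployment and then detect anomalies, the Python below gives a minimal sketch of baseline-based detection. It flags observations that lie more than a chosen number of standard deviations from the baseline mean. The login counts, the `find_anomalies` function, and the threshold of 3.0 are assumptions made for illustration. CSP-provided tools typically offer their own anomaly detection, and this sketch does not replace them.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observed, threshold=3.0):
    """Return (index, value) pairs from `observed` that lie more than
    `threshold` sample standard deviations from the baseline mean.
    `baseline` must contain at least two values."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A flat baseline: any departure from it counts as anomalous.
        return [(i, v) for i, v in enumerate(observed) if v != mu]
    return [
        (i, v) for i, v in enumerate(observed)
        if abs(v - mu) / sigma > threshold
    ]


# Hypothetical hourly login counts taken from monitoring data.
baseline_logins = [40, 42, 38, 41, 39, 43, 40, 37]
today = [41, 39, 120, 40]
print(find_anomalies(baseline_logins, today))  # [(2, 120)]
```

A fixed statistical threshold is a deliberately simple choice. It works only once the baseline reflects normal behavior for the deployment, which is why the text stresses learning what the CSP-provided data means first.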

Looking Ahead

A common theme across these cloud security practices is the need for cloud consumers to develop a deep understanding of the services they are buying and to use the security tools provided by the CSP. Recent cloud security incidents reported in the press, such as unsecured AWS storage services or the Deloitte email compromise, would most likely have been avoided if the cloud consumers had used the security tools offered by the CSPs, such as correctly configured access control, encryption of data at rest, and multifactor authentication.

For small and mid-sized organizations, use of well-established, mature CSPs helps reduce risk associated with transitioning applications and data to the cloud.

We welcome your feedback on these practices in the comments section below.

Additional Resources

Read our first post in this series, 12 Risks, Threats, and Vulnerabilities in Moving to the Cloud.

Federal agencies can learn more about CSP-specific best practices and implementation guidance at fedramp.gov.

The Cloud Security Alliance works to promote the use of best practices for providing security assurance within cloud computing and to provide education on the uses of cloud computing.

