Prototyping for Developing Big Data Systems

There are several risks specific to big data system development. Software architects developing any system--big data or otherwise--must address risks associated with cost, schedule, and quality. All of these risks are amplified in the context of big data. Architecting big data systems is challenging because the technology landscape is new and rapidly changing, and the quality attribute challenges, particularly for performance, are substantial. Some software architects manage these risks with architecture analysis, while others use prototyping. This blog post, which was largely derived from a paper I co-authored with Hong-Mei Chen and Serge Haziyev, Strategic Prototyping for Developing Big Data Systems, presents the Risk-Based Architecture-Centric Strategic Prototyping (RASP) model, which was developed to provide cost-effective systematic risk management in agile big data system development.

Six Risks in Big Data Systems

As outlined in our paper, the risks inherent in big data system development stem from

- the five Vs of big data - volume, variety, velocity, veracity, and value

- paradigm shifts (such as Data Lake, Polyglot Persistence, and Lambda Architecture) - In small data system development, the primary effort was focused on coding; in big data systems, it is focused on "orchestration" and technology selection.

- the rapid proliferation and difficulty in selecting big data technologies - There are more than 150 products in the NoSQL space alone.

- rapid technology changes - While small data systems focus on data at rest or stored in a database, big data systems emphasize data in use and data in motion, which, for many architects, brings data management into new territory, such as NoSQL.

- the short history of big data system development - There are so many technologies and technology families, and most programmers have little, if any, experience in them.

- complex integration of new and old systems - Many big data systems need to integrate with legacy (small data) systems.

Three Parallel Streams to Manage Risk

We began our investigation by identifying three streams of activity that run in parallel, each of which helps software architects manage risk:

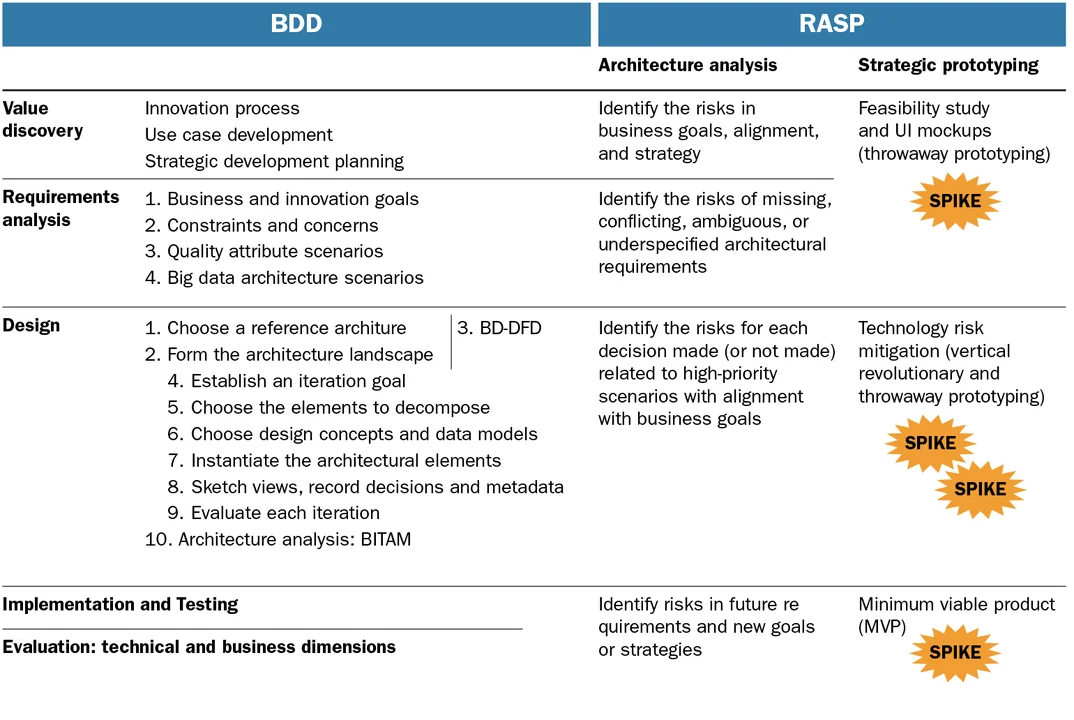

- architectural design - In this stream, which is the standard architecture-based software development lifecycle, software architects follow a variant of the Attribute-Driven Design (ADD) method, called BDD (Big Data Design). (I recently co-authored a book, Designing Software Architectures: A Practical Approach, which provides the latest update of the ADD method.)

- architecture analysis - Parallel to architectural design is a stream of architecture analysis activities, which involve analyzing the business goals, and the requirements that we derive from them, for completeness and consistency. In this stream, we can also analyze the architectural decisions that we make. This analysis is typically done using the Architecture Tradeoff Analysis Method (ATAM), but other techniques can also do the job, including lightweight techniques such as tactics-based analysis or reflective questions. Additionally, we can analyze the architectures of fielded systems, as we did in previous work with Titan, a tool that supports a new architecture model, Design Rule Spaces, as described in a previous post.

- strategic prototyping - This stream, which is the focus of this blog post, evolved out of the realization that architecture analysis doesn't always provide a complete picture. While architecture analysis offers detailed information about technology risks, it cannot provide complete guidance. For example, if an architect chooses a log management tool, is that tool sufficient to capture the volume of events that he or she needs to capture? Prototyping allows architects to verify a new big data component's real-time performance or assess runtime resource consumption thresholds, and is therefore a necessary component of big data risk management.
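The log management question above is the kind a small throwaway prototype can answer. The following is a minimal sketch, not a method from the paper: it pushes a fixed number of events into a sink and compares the measured rate with a required rate. The names `measure_throughput` and `meets_volume_requirement`, the in-memory sink, and the 1.5x headroom factor are all assumptions made for illustration; a real prototype would replace the sink with a client for the tool under evaluation.

```python
import time
from typing import Callable


def measure_throughput(sink: Callable[[bytes], None],
                       n_events: int,
                       payload: bytes) -> float:
    """Send n_events copies of payload to sink; return events per second."""
    start = time.perf_counter()
    for _ in range(n_events):
        sink(payload)
    elapsed = time.perf_counter() - start
    # Guard against a zero reading on very coarse clocks.
    return n_events / elapsed if elapsed > 0 else float("inf")


def meets_volume_requirement(measured_eps: float,
                             required_eps: float,
                             headroom: float = 1.5) -> bool:
    """True if the measured rate covers the required rate plus a margin.

    The headroom factor is a hypothetical safety margin, not a value
    taken from the paper.
    """
    return measured_eps >= required_eps * headroom


if __name__ == "__main__":
    collected = []

    def in_memory_sink(event: bytes) -> None:
        # Stand-in for the real log management client (assumption).
        collected.append(event)

    eps = measure_throughput(in_memory_sink, 100_000,
                             b'{"level": "INFO", "msg": "example"}')
    print(f"measured: {eps:,.0f} events/s")
    print("sufficient:", meets_volume_requirement(eps, required_eps=50_000))
```

A measurement like this is only meaningful when the payload size, concurrency, and hardware resemble the production scenario, which is one reason the prototype's scope should be driven by the architecture scenario it is meant to test.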

There are three major approaches to prototyping:

- Throwaway prototyping, also known as rapid prototyping or proofs of concept, describes a process in which prototypes are rapidly developed and given to the end user to field test, but are not a part of the final delivered software.

- Vertical evolutionary prototyping, wherein one or more system components are developed with full functionality in each release.

- Minimum Viable Products (MVP), which are evolutionary prototypes that have only those features that allow product deployment, which minimizes the time spent on an iteration. An MVP emphasizes hypothesis testing by fielding the product with real users and collecting usage data. This data helps confirm or reject the hypothesis, leading developers to make additional design decisions.

Cost (defined in terms of money, time, and effort) and effectiveness issues drove us to advocate strategic prototyping, but only in areas that aren't adequately addressed by architecture analysis. We call this technique "strategic" in that there are different prototyping techniques that may be employed and the cost/effectiveness ratio determines the choice of which technique to use, and when.
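One way to picture the cost/effectiveness comparison is as a simple ranking. The sketch below is an illustration under stated assumptions, not a formula from the paper: it assumes each candidate technique can be given a rough effort estimate and a rough estimate of how much of the targeted risk it would retire, and it picks the technique with the lowest cost per unit of risk retired. The class `PrototypingOption`, its fields, and the numbers in the example are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class PrototypingOption:
    name: str
    cost_person_days: float   # hypothetical effort estimate
    risk_reduction: float     # hypothetical share of risk retired, 0..1


def cost_effectiveness_ratio(option: PrototypingOption) -> float:
    """Cost per unit of risk retired; lower is better."""
    if option.risk_reduction <= 0:
        return float("inf")   # retires no risk, so never preferred
    return option.cost_person_days / option.risk_reduction


def pick_option(options: Iterable[PrototypingOption]) -> PrototypingOption:
    """Return the option with the lowest cost/effectiveness ratio."""
    return min(options, key=cost_effectiveness_ratio)


if __name__ == "__main__":
    # Placeholder estimates for illustration only.
    candidates = [
        PrototypingOption("throwaway prototype", 5, 0.8),
        PrototypingOption("vertical evolutionary prototype", 20, 0.9),
        PrototypingOption("MVP", 40, 0.5),
    ]
    print(pick_option(candidates).name)
```

In practice the estimates are judgment calls, so a ranking like this is better treated as a prompt for discussion than as a decision rule.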

We created the Risk-Based Architecture-Centric Strategic Prototyping (RASP) model to provide explicit guidance to software architects on how to employ strategic prototyping for risk management. As shown in the figure below, the design part of RASP builds on a version of ADD called Big Data Design (BDD), which tailors ADD to architecture design for big data systems. BDD guides value discovery, requirements gathering and analysis, design, and the downstream activities of implementation, testing, and evaluation.

Prototyping decisions are critical in big data system development, so architects should make them in a consistent, disciplined way. Because technology orchestration is the core of big data system development, such development relies heavily on prototyping and architecture analysis for risk management. As we have shown, RASP can guide this prototyping and analysis.

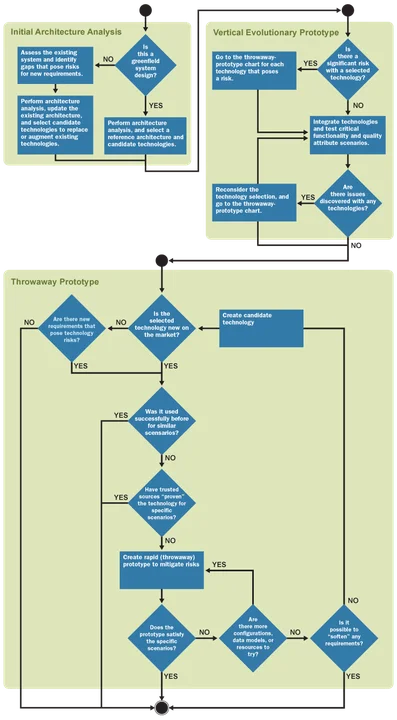

Based on our work with SoftServe, a software and application development and services company (see posts here and here), we developed questions that architects should answer and a decision flowchart (shown below) that software architects should use before embarking on any prototyping effort, since prototyping can be costly.

For example, the decisions baked into the flowchart focused on whether:

- the technology being assessed was new

- it had been successfully used before

- significant new requirements existed, and

- objective evidence substantiated product performance claims.

The answers to these questions help an architect determine whether to prototype and how to interpret the prototyping results. For example, a prototype that reveals inadequacies of a technology might result in choosing a different technology, but another option is to soften some requirements.
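The four questions above can be read as inputs to a small decision function. The sketch below is one possible encoding, not the flowchart from the paper: the order of the checks and the rule that combines them are assumptions, and the names `TechnologyAssessment` and `should_prototype` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TechnologyAssessment:
    is_new_technology: bool
    used_successfully_before: bool
    has_significant_new_requirements: bool
    has_objective_performance_evidence: bool


def should_prototype(a: TechnologyAssessment) -> bool:
    """Return True if a prototype looks warranted under the assumed rules."""
    # Assumed rule 1: a technology that is not new, or that has already
    # been used successfully, needs no prototype unless requirements changed.
    familiar = (not a.is_new_technology) or a.used_successfully_before
    if familiar and not a.has_significant_new_requirements:
        return False
    # Assumed rule 2: objective evidence for performance claims can stand
    # in for a prototype when no significant new requirements exist.
    if (a.has_objective_performance_evidence
            and not a.has_significant_new_requirements):
        return False
    # Otherwise the remaining uncertainty justifies prototyping.
    return True


if __name__ == "__main__":
    unproven = TechnologyAssessment(
        is_new_technology=True,
        used_successfully_before=False,
        has_significant_new_requirements=False,
        has_objective_performance_evidence=False,
    )
    print(should_prototype(unproven))
```

Writing the questions down in this form makes the decision repeatable across projects, but the function only says whether to prototype. Interpreting the results, including whether to switch technologies or soften requirements, remains the architect's call.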

We validated RASP via case studies of nine big data projects at SoftServe. These case studies exercised each of these kinds of decisions. In each case study, we applied RASP alongside BDD (and its design concepts catalog) to systematically design and manage risk. Because each project had different business and innovation goals, their risks differed. However, consistent risk themes emerged, such as technology selection, performance of new technologies, total cost of ownership, and integration of technologies.

In our case studies, employing standardized questions and decision procedures, as well as architecture analysis methods, helped us plan prototyping systematically and strategically.

7 Takeaways: When to Do Prototyping and When to Do Architecture Analysis

On the basis of these case studies and our prior experience, we created and verified seven guidelines for when to do architecture analysis, when to prototype, and under what conditions to deploy each kind of prototype:

- Architecture analysis might be the only feasible option when you need to make early decisions that have a project-wide scope. Then, you can employ throwaway prototypes to make technology choices and demonstrate feasibility.

- Architecture analysis alone is insufficient to prove many important system properties.

- Architecture analysis complements vertical evolutionary prototyping. Analysis helps you select candidate technologies; prototyping validates those choices and quickly gets a working system in front of the stakeholder. This aids in eliciting feedback and prioritizing future development efforts.

- An evolutionary prototype can effectively mitigate risk if you implement it as a skeleton--an infrastructure into which you can integrate components and technologies. This helps mitigate integration risks, supports early demonstrations of user value, and aids the understanding of end-to-end scenarios.

- Vertical evolutionary prototyping can help answer questions about system-wide properties but might need to be augmented with throwaway prototypes when requirements are volatile. When requirements change, new architecture analysis and throwaway prototypes might be needed (to help avoid falling into the "hammer looking for a nail" syndrome).

- Throwaway prototypes work best when you need to quickly evaluate a technology, to see whether it satisfies critical architecture scenarios (usually related to quality attributes such as performance and scalability). They are typically used for narrow scenarios that involve evaluating just one or two components.

- Whether to create an MVP is more of a business decision than a decision driven simply by technological risk. Typical business goals addressed include getting early user feedback and updating the product accordingly, presenting a working version to a trade show or customer event, structuring the development process, checking team progress and alignment, or testing an out-sourcing vendor using an MVP as a pilot project.

Wrapping Up and Looking Ahead

In 1996, Richard Baskerville and Jan Stage advocated risk analysis for controlling evolutionary prototyping. Our work expands on that recommendation. Our research has shown that an architecture-centric design methodology, such as BDD, makes risk management explicit, systematic, and cost-effective. Architecture-centric design provides a basis for value discovery, for helping stakeholders reason about risks, for planning, for estimating cost and schedule, and for experimentation. The RASP model offers practical guidelines for strategic prototyping, combining architecture analysis and a variety of prototyping techniques.

Additional Resources

View our presentation, Strategic Prototyping for Developing Big-Data Systems, which was presented at the 2016 SATURN conference.
