A Taxonomy of Testing: What-Based and When-Based Testing Types

There are more than 200 different types of testing, and many stakeholders in testing--including the testers themselves and test managers--are often largely unaware of them or do not know how to perform them. Similarly, test planning frequently overlooks important types of testing. The primary goal of this series of blog posts is to raise awareness of the large number of test types, to verify adequate completeness of test planning, and to better guide the testing process. In the previous blog entry in this series, I introduced a taxonomy of testing in which 15 subtypes of testing were organized around how they addressed the classic 5W+2H questions: what, when, why, who, where, how, and how well. This and future postings in this series will cover each of these seven categories of testing, thereby providing structure to roughly 200 types of testing currently used to test software-reliant systems and software applications.

This blog entry covers the top-level subtypes of testing that answer the following two questions:

- What-based testing: What is being tested?

  - Object-Under-Test-based (OUT) testing

  - Domain-based testing

- When-based testing: When is the testing being performed?

  - Order-based testing

  - Lifecycle-based testing

  - Phase-based testing

  - Built-in-Test (BIT) testing

After exploring the what-based and when-based categories of testing, this post presents a section on using the taxonomy, as well as opportunities for accessing it.

What-Based Testing

What-based test types include all of the types of testing that address the question "What is being tested?"

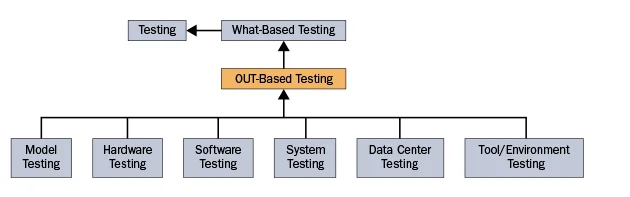

OUT-Based Test Types. The first subtype of what-based test types varies based on the type of object under test (OUT). Specifically, one can test

- executable requirements, architecture, and design models to uncover defects before the models are implemented

- hardware devices

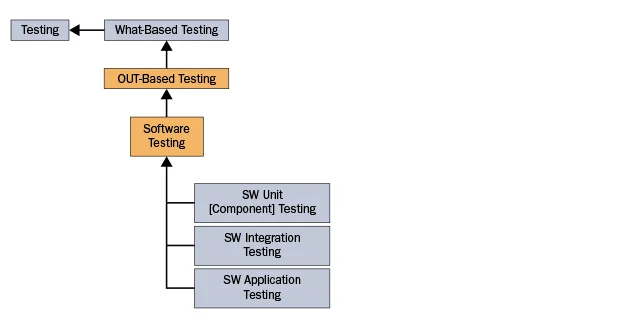

- software including unit testing, integration testing, and testing of the entire application

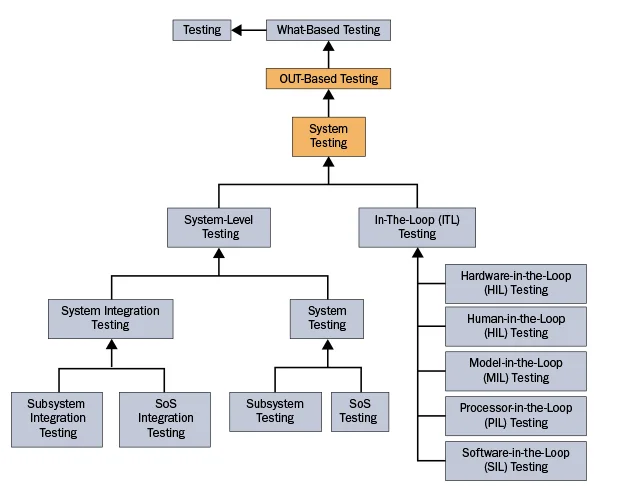

- systems (composed of hardware, software, personnel, procedures, etc.) including subsystem testing, system integration testing, system testing, system of systems (SoS) integration testing, and SoS testing

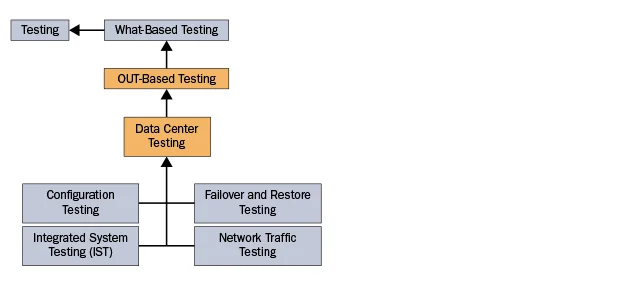

- data centers including testing to verify their configuration, their failover and restore capabilities, and their network traffic

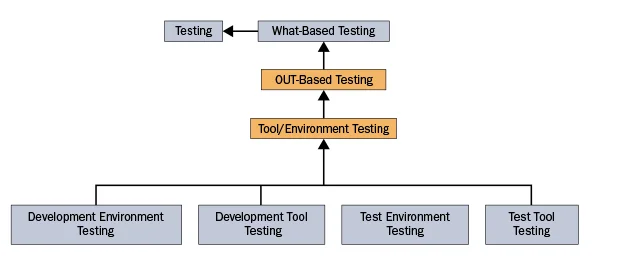

- development and test tools and environments

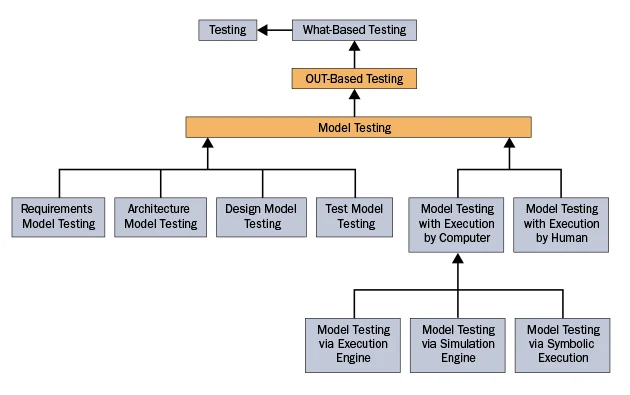

The graphics in this section document the different test types that are based on the type of object under test.

Model testing can be decomposed in two ways at once: by the type of executable model under test and by the means by which the model is executed during testing. Specifically, one can potentially test any executable requirements, architecture, design, or test model, including models written in either graphical or textual modeling languages. During testing, these models are typically executed by an execution or simulation engine running on a computer. However, if tools to execute the models and tests are unavailable, a person can execute them manually.
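As a minimal sketch of model testing, assume a hypothetical executable design model of a door controller expressed as a state-transition table. The names `DOOR_MODEL` and `run_model` are illustrative assumptions, and the small interpreter only stands in for the execution or simulation engine mentioned above.

```python
# Hypothetical executable design model: a door controller expressed as a
# state-transition table mapping (state, event) -> next state.
DOOR_MODEL = {
    ("closed", "open_cmd"): "open",
    ("open", "close_cmd"): "closed",
    ("closed", "lock_cmd"): "locked",
    ("locked", "unlock_cmd"): "closed",
}


def run_model(model, start, events):
    """Tiny stand-in for a simulation engine: replay events against the model."""
    state = start
    for event in events:
        key = (state, event)
        if key not in model:
            raise ValueError(f"no transition for {event!r} in state {state!r}")
        state = model[key]
    return state


def locked_door_rejects_open(model):
    """Model test: a locked door must not accept an open command."""
    try:
        run_model(model, "closed", ["lock_cmd", "open_cmd"])
    except ValueError:
        return True   # the model rejects the event, as required
    return False      # a defect in the model, found before implementation


print(locked_door_rejects_open(DOOR_MODEL))
```

Adding a transition such as `("locked", "open_cmd"): "open"` to the table would make the check fail, which is the kind of defect model testing aims to catch before the model is implemented.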

There are numerous types of hardware testing. Under OUT-based testing, five of the most commonly used hardware testing types associated with computer hardware are continuity testing, hardware stress testing, highly accelerated life testing, hardware qualification testing, and power-off testing.

The following diagram shows the three most common software-specific types of software testing: unit testing, integration testing, and application testing.
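To make the distinction between the three levels concrete, here is a minimal sketch using a hypothetical price-totaling program. All function names are assumptions made for illustration. The unit tests exercise each function in isolation, the integration test exercises the two units working together, and the application test drives the whole program through its external interface.

```python
def parse_price(text):
    """Unit A: convert a price string such as '$4.50' to integer cents."""
    return round(float(text.strip().lstrip("$")) * 100)


def total_cents(prices):
    """Unit B: sum an iterable of integer cent amounts."""
    return sum(prices)


def checkout(lines):
    """Integration of units A and B: total a list of price strings."""
    return total_cents(parse_price(line) for line in lines)


def main(argv):
    """Application entry point: format the total for the user."""
    return f"Total: {checkout(argv) / 100:.2f}"


def test_unit_parse_price():
    assert parse_price(" $4.50 ") == 450


def test_unit_total_cents():
    assert total_cents([100, 250]) == 350


def test_integration_checkout():
    assert checkout(["$1.00", "$2.50"]) == 350


def test_application_main():
    assert main(["$1.00", "$2.50"]) == "Total: 3.50"


# Run every test; a test runner such as pytest would also collect these by name.
for test in (test_unit_parse_price, test_unit_total_cents,
             test_integration_checkout, test_application_main):
    test()
```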

In addition to software, systems may also include hardware, data, documentation, personnel, manual procedures, facilities, and equipment. System testing includes subsystem testing, system integration testing, system testing, system of systems (SoS) integration testing, and SoS testing. Unfortunately, the term system integration testing is sometimes used for testing the integration of subsystems into systems and sometimes for testing during integration of systems into systems of systems. System integration testing can be further decomposed into hardware-in-the-loop testing, human-in-the-loop testing, model-in-the-loop testing, processor-in-the-loop testing, and software-in-the-loop testing.

Data center testing refers to the testing associated with a data center rather than a single software application that may be housed in the data center. Configuration testing determines whether the hardware and software making up the data center are properly configured (for example, to ensure security). Failover and restore testing verifies that when hardware or software fails, the appropriate hot, warm, or cold failover occurs and that the failover is properly rolled back when the failed components are restored to their proper states. Integrated system testing verifies that the data center's components have been properly installed, that they interoperate properly under normal and exceptional circumstances, and that supporting electrical and cooling subsystems function properly. Network traffic testing determines whether one or more data center networks properly handle normal and excessive network traffic.
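The shape of a failover and restore test can be sketched with a toy, in-memory stand-in for a two-node cluster. The `Cluster` class and its methods are hypothetical. A real data center test would inject faults into actual infrastructure rather than flip flags, so this is only an illustration of the three checks involved.

```python
class Cluster:
    """Hypothetical two-node cluster with a primary and a standby."""

    def __init__(self):
        self.healthy = {"primary": True, "standby": True}

    def active_node(self):
        """Route to the primary when healthy, otherwise fail over to the standby."""
        if self.healthy["primary"]:
            return "primary"
        if self.healthy["standby"]:
            return "standby"
        raise RuntimeError("no healthy node available")

    def fail(self, node):
        """Simulate a hardware or software failure of one node."""
        self.healthy[node] = False

    def restore(self, node):
        """Simulate the failed node being returned to its proper state."""
        self.healthy[node] = True


def test_failover_and_restore():
    cluster = Cluster()
    assert cluster.active_node() == "primary"   # normal operation
    cluster.fail("primary")
    assert cluster.active_node() == "standby"   # failover occurred
    cluster.restore("primary")
    assert cluster.active_node() == "primary"   # failover was rolled back


test_failover_and_restore()
```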

Testing of the software or system under test (SUT) can yield false positive and false negative results if there are defects in the development tools, development environment(s), test tools, or test environment(s).

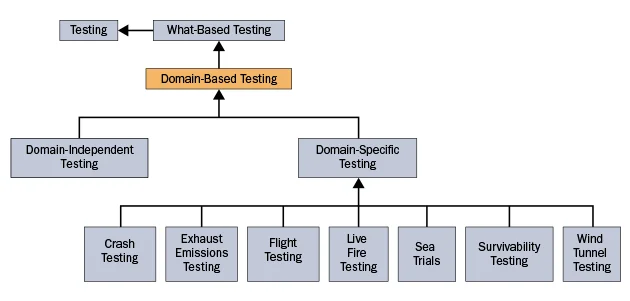

Domain-Based Testing. The second subtype of what-based test types varies based on whether the test type is domain-independent or domain-specific.

- Domain-independent testing includes all forms of testing that are not restricted to a single application domain or a small number of application domains.

- Domain-specific types of testing are equally numerous, although most testers will only deal with the small number of test types appropriate for the systems they are testing. For example, crash testing and exhaust emissions testing are representative of the automotive industry, whereas live fire testing and sea trials would be representative of naval ships and weapons systems.

When-Based Test Types

When-Based Test Types include all of the different types of testing that primarily deal with the question "When is the testing being performed?"

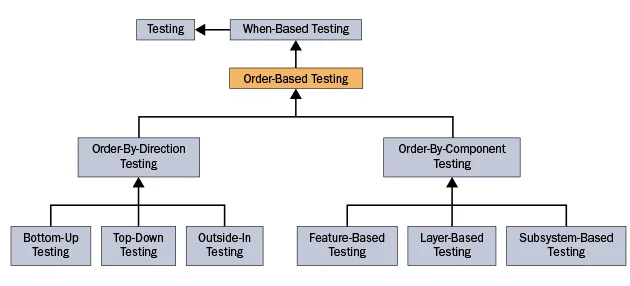

Temporal-Order-Based Test Types. The first type of when-based testing varies based on the temporal order of the testing, which typically matches the order in which the objects under test are developed. Specifically, one can perform:

- Order-by-Direct Testing. This type of testing is based on the general direction in which development (and testing) is performed. It includes the following three test types:

  - Bottom-Up Testing starts by developing and testing the bottom-most units in terms of control flow and then works up toward the user-interface units.

  - Top-Down Testing starts with the top-most units in terms of control flow (typically user-interface units) and works downward toward the middleware and operating system.

  - Outside-In Testing starts with those units that interface with external actors and works its way in to the middle of the system under test.

- Order-by-Component Testing. This type of testing is based on the type of object under test. It includes the following three test types:

  - Feature-Based Testing occurs when the system is developed and tested in terms of its features.

  - Layer-Based Testing develops and tests the system by architectural layers.

  - Subsystem-Based Testing develops and tests a system in terms of its subsystems, potentially in parallel with different teams working on different subsystems.
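The difference between bottom-up and top-down ordering can be sketched with a hypothetical three-unit call chain. The unit names and the scale factor are assumptions made for illustration. In the bottom-up test, the test itself acts as the driver for the lowest unit. In the top-down test, the top unit is exercised first and a stub from Python's standard `unittest.mock` module replaces the units below it.

```python
from unittest.mock import Mock


def read_sensor_raw(device):
    """Bottom-most unit: read a raw count from a device object."""
    return device.read()


def temperature_celsius(read_raw):
    """Middle unit: convert a raw count to Celsius (assumed 0.5 degrees per count)."""
    return read_raw() * 0.5


def status_line(get_temperature):
    """Top-most (user-interface) unit: format the temperature for display."""
    return f"Temperature: {get_temperature():.1f} C"


def test_bottom_up():
    """Bottom-up: the test drives the lowest unit before anything above it exists."""
    device = Mock()
    device.read.return_value = 42
    assert read_sensor_raw(device) == 42


def test_top_down():
    """Top-down: the top unit is tested first, with a stub replacing lower units."""
    stub_temperature = Mock(return_value=21.0)
    assert status_line(stub_temperature) == "Temperature: 21.0 C"


test_bottom_up()
test_top_down()
```

Once all three units exist, the stub can be replaced by the real chain, for example `status_line(lambda: temperature_celsius(lambda: read_sensor_raw(device)))`.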

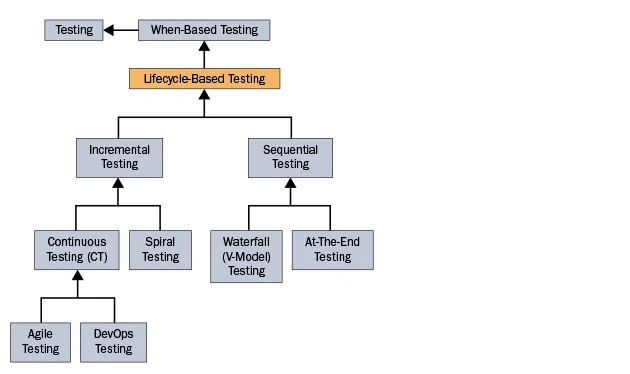

Lifecycle-Based Test Types. The second subtype of when-based test types varies based on the degree to which the associated lifecycle is evolutionary (i.e., incremental, iterative, and concurrent), which influences when and how often testing is performed. Specifically, one can perform:

- Sequential Testing. Sequential testing occurs when a sequential development cycle is used.

- Waterfall Testing assumes that the classic, strictly-sequential waterfall development cycle is used.

- V-Model Testing is based on the classic V-model, which bends the waterfall model in the middle to map the initial development activities (requirements engineering, architecture, design, and implementation) to their corresponding types of testing (unit, integration, and system testing). V-model testing assumes the standard bottom-up approach to testing (i.e., unit testing before integration testing before system testing).

- At-The-End Testing postpones all testing until the end of development. This testing model is an anti-pattern, so it is rarely seen.

- Incremental Testing is based on the realization that most systems and software programs are too big and complex to be developed and tested in a "big bang" manner.

- Spiral Testing is based on a system that is developed and tested in a relatively small number of mid-sized iterative increments.

- Continuous Testing (CT) is based on a much more evolutionary development cycle that includes numerous, short duration, iterative increments during which testing is essentially continuous. CT comes in the following two types:

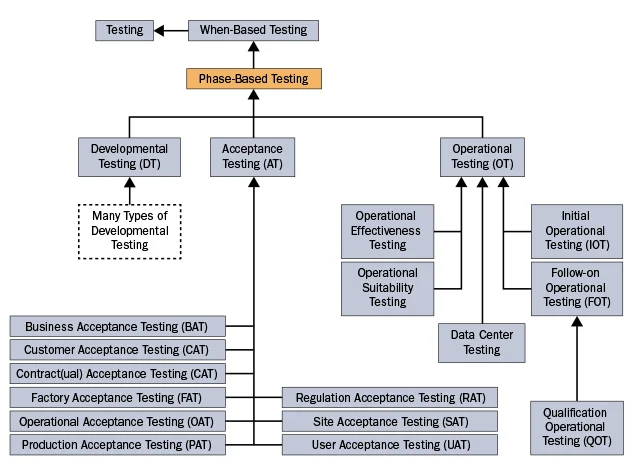

Phase-Based Test Types. The third subtype of when-based test types varies based on the phase in which testing occurs. Specifically, one can perform:

- Developmental Testing (DT). As with domain-independent test types, most of the test types in the taxonomy are performed during development, prior to the object under test being accepted by the acquisition, operations, or user organization.

- Acceptance Testing (AT). There are numerous types of acceptance testing based on such criteria as who is doing the acceptance, where the testing is taking place, and why it is being performed. Typically, each system only undergoes one or at most a few types of acceptance testing.

- Operational Testing (OT). OT has two similar but distinct definitions: (1) Operational testing is the testing of software or systems in their operational environment, an "identical" staging environment, or at least a very high-fidelity environment. (2) Operational testing is also the testing of the software or system once it has been fielded and placed into operation. For cyber-physical systems, most of the OT typically occurs before low-rate initial production (LRIP) and full-scale production (FSP). Passing OT tests results in an authorization to operate. By using DevOps testing, some of the DT test results can be reused for OT. Some OT is also performed by system operators on an ongoing basis.

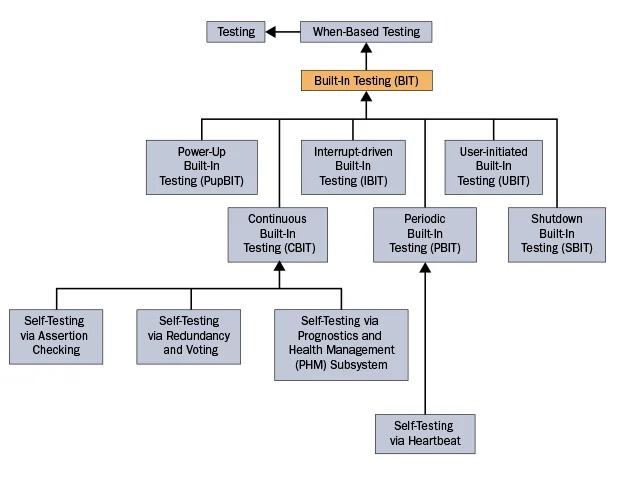

Built-In-Test (BIT)-Specific Test Types are the direct incorporation of test software into the deliverable system under test. This fourth subtype of when-based test types varies based on when the BIT tests execute. Specifically, BIT can occur as the system is being powered up, continuously in the background during system operation, when an event (often a fault or failure) occurs that
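The three BIT triggers named above (power-up, continuous, and event-driven) can be sketched as methods on a hypothetical `Device` class. The class, the checks it performs, and the sensor range are all assumptions made for illustration, not a description of any particular system.

```python
class Device:
    """Hypothetical deliverable system that carries its own built-in tests."""

    SENSOR_RANGE = (-40.0, 85.0)  # assumed valid operating range

    def __init__(self, memory_ok=True, sensor_value=20.0):
        self.memory_ok = memory_ok
        self.sensor_value = sensor_value
        self.bit_log = []  # (trigger, passed) records

    def _sensor_in_range(self):
        low, high = self.SENSOR_RANGE
        return low <= self.sensor_value <= high

    def _record(self, trigger, passed):
        self.bit_log.append((trigger, passed))
        return passed

    def power_up_bit(self):
        """Runs once as the system is being powered up."""
        return self._record("power-up", self.memory_ok and self._sensor_in_range())

    def continuous_bit(self):
        """Meant to be called repeatedly in the background during operation."""
        return self._record("continuous", self._sensor_in_range())

    def event_bit(self, event):
        """Runs when an event, such as a reported fault, occurs."""
        return self._record(f"event:{event}", self.memory_ok and self._sensor_in_range())


device = Device()
device.power_up_bit()
device.sensor_value = 120.0   # simulate a sensor drifting out of range
device.continuous_bit()
device.event_bit("overtemp")
print(device.bit_log)
```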

Using the Taxonomy

Because different types of testing uncover different types of defects and because many types of testing (as well as other verification and validation methods) are needed to ensure an acceptably low number of residual defects, it is important that all potentially relevant types of testing are considered. This taxonomy of testing types can be used as a checklist to ensure that test planning includes all appropriate testing types for the object under test and thereby ensures that no important test types are accidentally overlooked. Those responsible for test planning, as well as other testing stakeholders, can essentially spider their way down all of the branches of the taxonomy's hierarchical tree-like structure, constantly considering the questions "Is this type of testing relevant? Will using it be cost-effective? Do we have sufficient resources to do this type of testing? Should it be used across the entire system or only in certain parts of the architecture and under certain conditions?"
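Walking the tree as a checklist can be sketched as follows. The nested dictionary is an illustrative excerpt containing only branches named in this post, the planning questions are abbreviated from the paragraph above, and the names `TAXONOMY`, `walk`, and `checklist` are assumptions.

```python
# Illustrative excerpt of the taxonomy as a nested dict; leaves are empty dicts.
TAXONOMY = {
    "What-based testing": {
        "OUT-based testing": {
            "Model testing": {},
            "Hardware testing": {},
            "Software testing": {},
            "System testing": {},
            "Data center testing": {},
            "Tool and environment testing": {},
        },
        "Domain-based testing": {
            "Domain-independent testing": {},
            "Domain-specific testing": {},
        },
    },
    "When-based testing": {
        "Order-based testing": {},
        "Lifecycle-based testing": {},
        "Phase-based testing": {},
        "Built-in-test (BIT) testing": {},
    },
}

PLANNING_QUESTIONS = (
    "Is this type of testing relevant?",
    "Will using it be cost-effective?",
    "Do we have sufficient resources to do it?",
    "Should it be used across the entire system or only in certain parts?",
)


def walk(tree, path=()):
    """Depth-first traversal yielding the full path to every node."""
    for name, children in tree.items():
        node_path = path + (name,)
        yield node_path
        yield from walk(children, node_path)


def checklist(tree):
    """Pair every test type in the tree with every planning question."""
    return [(" > ".join(p), q) for p in walk(tree) for q in PLANNING_QUESTIONS]


for entry, question in checklist(TAXONOMY)[:4]:
    print(f"{entry}: {question}")
```

Keeping the full path in each checklist entry preserves the context of a test type, so a planner can record a decision for a whole branch and skip its children when the branch is clearly irrelevant.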

Another way the taxonomy can help is by enabling testers to use divide and conquer as a technique to attack the size and complexity of system and software testing in terms of the different types of testing. This taxonomy can help one see the similarities between related types of testing and make it easier to learn and remember the different types of testing.

Blog entries and conference presentations are too short to properly document such a rich ontology. For this reason, I am currently developing a wiki that clearly defines each testing type, describes its potential applicability and how to use it, discusses its pros and cons, and states how it addresses each of the 5Ws and 2Hs.

In future blog posts, I will summarize the other major subclasses of this taxonomy. The next post in the series will explore the testing types in the taxonomy related to the questions "Where is the testing being performed?" and "Why is the testing being performed?"

Additional Resources

On September 9, 2015, from 12:30 to 2:30 p.m. ET, I will present an SEI Webinar on A Taxonomy of Testing Types. To view the webinar, please click here.

In the meantime, I will be presenting this taxonomy at next month's 10th Annual FAA Verification and Validation Summit and giving a more detailed tutorial on it at the SEI's Software Solutions Conference 2015 at the Hilton Crystal City in Arlington, Va., on November 16-18. For more information about the conference, please click here.

I have authored several posts on testing on the SEI blog including a series on common testing problems, a post presenting variations on the V model of testing, and, most recently, shift-left testing.
