Managing Static Analysis Alerts with Efficient Instantiation of the SCAIFE API into Code and an Automatically Classifying System

Static analysis tools examine code without executing it to identify potential flaws. Because alerts may be false positives, engineers must painstakingly examine each one to determine whether it is a legitimate flaw. Many tools produce a large number of alerts with high false-positive rates. Other tools produce alerts for only a limited set of code flaws and have a very low false-positive rate; this reduces wasted effort, but real code flaws remain in codebases with no alerts about them. Automation is needed to reduce the significant manual effort that would be required to adjudicate all (or significantly more of) the alerts. The SEI Source Code Analysis Integrated Framework Environment (SCAIFE) application programming interface (API) defines an architecture for classifying and prioritizing static analysis alerts. In this blog post, we describe new techniques that help users instantiate SCAIFE API methods and integrate them with their own tools.

Covering more code-flaw types takes more development (and sometimes research) effort, so most tools provide alerts for only a subset of known code-flaw types. Previous analyses have shown that commonly used static analysis tools' coverage of code flaws is only partially overlapping, so multiple tools are often used to gain analysis of more code flaws. However, the use of multiple tools compounds the problem of having too many alerts that must be adjudicated manually as true or false.

The increasingly common standard DevOps practice of continuous integration (CI) results in frequent updates to working code. This practice generates a proliferation of static analysis alerts that can be hard for engineers to manage in the short time frames required by CI.

SCAIFE enables engineers to apply resources to the most critical issues identified by static analysis tools. In particular, it considers classifier-derived confidences and an organization's mathematically defined priorities related to characteristics of flaws and related code that they want to address first.

The SCAIFE architecture is designed so that a wide variety of components can integrate with the SCAIFE system using an API: static analysis tools, classifiers with related active-learning/adaptive heuristics and hyper-parameter optimization, static analysis project-data aggregation systems, prioritization methods, and registration modules. This API can be used by organizations that develop or research tools, aggregators, and frameworks for auditing static analysis alerts. The techniques we describe in this post allow users' tools to send and receive SCAIFE API calls.

Benefits of SCAIFE

The SCAIFE system provides an architecture with APIs and an open-source prototype system. Benefits to users include the following:

- Analysts with no knowledge of machine learning can quickly use automated classifiers for static analysis alerts. The classification system requires no labeled audit archive in advance since it uses test suites in a new way. Moreover, it performs active learning, so users needn't create their own frameworks to use the classifiers.

- Analysts and organizations can quickly apply formulas that prioritize static analysis alerts by using factors that are important to them. These prioritization formulas can combine various fields, including classifier-derived confidence, with mathematical operators.

- Developers and researchers can employ the API definition to extend the original prototype system, enabling the use of additional flaw-finding static analysis (FFSA) tools and code-metrics tools (such as CCSM or lizard), adaptive heuristics, classification techniques, etc.
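To make the idea of a prioritization formula concrete, here is a minimal sketch in Python. It is not SCAIFE code: the `MetaAlert` record, its field names, and the formula itself are hypothetical, chosen only to show how classifier-derived confidence might be combined with other fields using ordinary mathematical operators.

```python
from dataclasses import dataclass


@dataclass
class MetaAlert:
    """Hypothetical record; field names are illustrative, not SCAIFE's schema."""
    alert_id: int
    confidence: float  # classifier-derived confidence that the alert is true (0..1)
    severity: int      # organization-assigned severity, 1 (low) to 3 (high)
    likelihood: int    # organization-assigned likelihood of exploit, 1 to 3


def priority(alert: MetaAlert) -> float:
    """Example formula (an assumption): confidence scales a weighted sum."""
    return alert.confidence * (2 * alert.severity + alert.likelihood)


def prioritize(alerts):
    """Return alerts sorted from highest to lowest priority score."""
    return sorted(alerts, key=priority, reverse=True)


alerts = [
    MetaAlert(1, confidence=0.9, severity=1, likelihood=1),
    MetaAlert(2, confidence=0.5, severity=3, likelihood=3),
    MetaAlert(3, confidence=0.1, severity=3, likelihood=2),
]
ranked = [a.alert_id for a in prioritize(alerts)]
print(ranked)  # [2, 1, 3]
```

In this sketch, a severe alert with moderate confidence outranks a minor alert with high confidence, which is the kind of trade-off an organization can tune by changing the formula.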

About SCAIFE

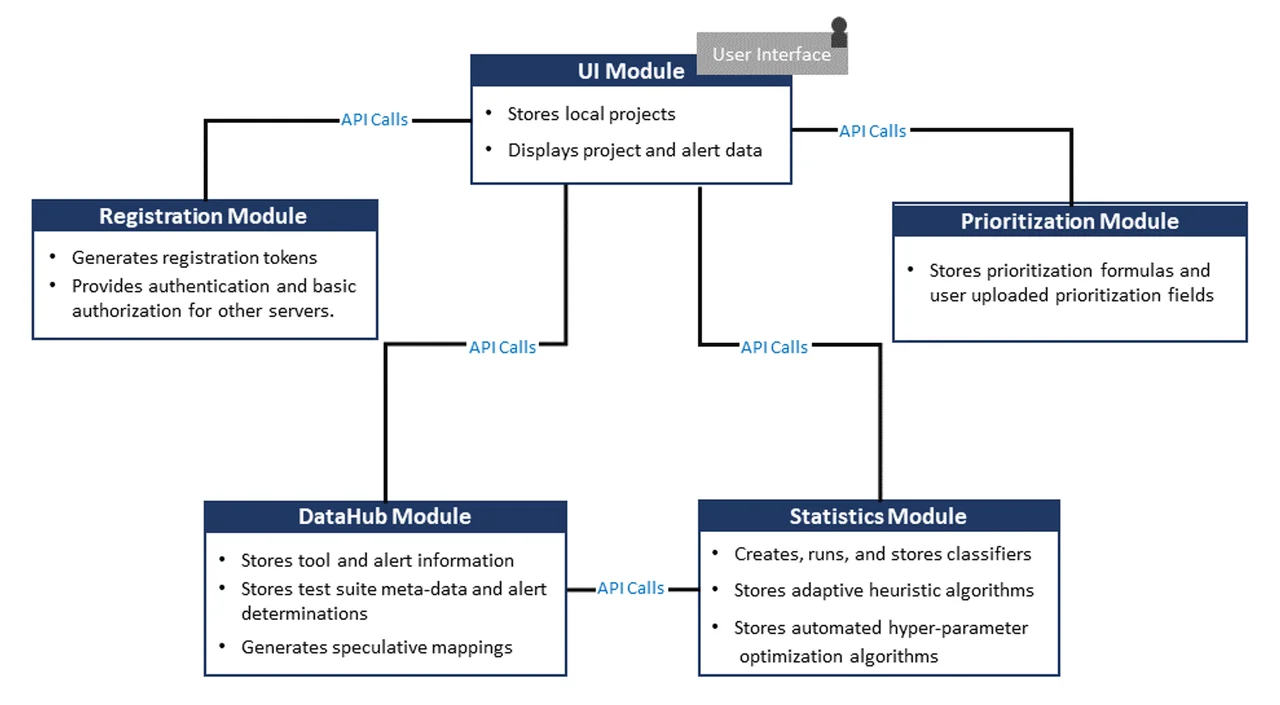

The SCAIFE architecture includes five servers and supports static analysis meta-alert classification and prioritization for a wide variety of tools, as shown in Figure 1. The system is modular so that each module can be instantiated by different tools or software while the overall system maintains the same functionality.

The following is a summary of the modules shown in Figure 1:

- The UI module has a graphical user interface (GUI) front end that enables display of flaw-finding static analysis (FFSA) alerts and stores local projects. The SCAIFE architecture enables a wide variety of FFSA and alert-aggregator tools to obtain classification and prioritization functionality by interacting as a UI module with the rest of the SCAIFE system. To provide this capability, these tools must instantiate UI module API calls to the other servers.

- The DataHub module stores data (tool, alert, project, and test-suite metadata; true or false adjudications; etc.) from one or more UI modules and adjudicates some meta-alerts.

- The Statistics module creates, runs, and stores classifiers and adaptive heuristic (active-learning) algorithms and automated hyper-parameter algorithms.

- The Registration module is used for authentication and access control. It generates registration tokens and provides authentication and basic authorization for other servers.

- The Prioritization module stores prioritization formulas and user-uploaded prioritization fields.

Instantiating SCAIFE API Calls

Users can integrate their own static analysis tools or frameworks with the SCAIFE system by instantiating SCAIFE API calls from those tools. Each tool can then interact with the other active SCAIFE containers when it makes or responds to SCAIFE API calls.

A new manual we have published, How to Instantiate SCAIFE API Calls: Using SEI SCAIFE Code, the SCAIFE API, Swagger-Editor, and Developing Your Tool with Auto-Generated Code, explains how to start up the SCAIFE Docker containers on the user's machine, view the SCAIFE API within the third-party, open-source Swagger-Editor tool, and test API calls to the active containers via the Swagger-Editor. This manual also enables users to learn

- which Linux curl commands are used for each API call

- how to auto-generate client code used within the user's own tool code (in any of a wide variety of languages)

- how to use a set of useful commands for development and testing while implementing SCAIFE API calls.

Developers can use these techniques to enable their tools to receive SCAIFE API calls (when configured as servers) and to make SCAIFE API calls (when configured as clients). In both cases, they need to develop some internal SCAIFE-required logic in their tools. The modular SCAIFE system enables substitution of any of the five types of SCAIFE modules shown in Figure 1.
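As a rough illustration of the client role, the following Python sketch builds (but does not send) a JSON request that carries an access token. Everything specific here is an assumption made for the example: the base URL, the `/example/projects` path, the `X-Example-Token` header name, and the payload are placeholders, not part of the SCAIFE API. The real paths, headers, and schemas come from the API's YAML specification or from auto-generated client code.

```python
import json
import urllib.request


def build_request(base_url, path, token, payload=None):
    """Build a JSON request with a token header; nothing is sent here."""
    data = None
    headers = {"Accept": "application/json", "X-Example-Token": token}
    if payload is not None:
        data = json.dumps(payload).encode("utf-8")
        headers["Content-Type"] = "application/json"
    method = "POST" if data is not None else "GET"
    url = base_url.rstrip("/") + path
    return urllib.request.Request(url, data=data, headers=headers, method=method)


# Placeholder values (assumptions for illustration only).
req = build_request(
    "http://localhost:8080/",
    "/example/projects",
    token="demo-token",
    payload={"project_name": "demo"},
)
print(req.get_method(), req.full_url)
```

Keeping request construction separate from sending makes it easy to unit-test a tool's client logic before any SCAIFE containers are running.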

Access to SEI SCAIFE System Code

The SCAIFE manual is useful whether users have full, partial, or no access to SCAIFE system code that we developed at the SEI. The manual will be used in different ways, however, depending on the extent of the user's access to that code.

- Full: To do everything described in the manual, the user needs the SCAIFE system code that the SEI developed, which fully instantiates the SCAIFE API for all five SCAIFE modules, including internal logic. We can currently send SCAIFE code only to DoD organizations. DoD organizations interested in the code can send a request to info@sei.cmu.edu, and we will send the full SCAIFE code.

- Partial: In September 2020, we will publicly release our research version of the Source Code Analysis Laboratory (SCALe) tool, with many added features that enable it to work as the SCAIFE UI module as well as a standalone tool. The release will be on the scaife-scale branch of https://github.com/cmu-sei/SCALe (note that this is not the default main branch). That release will give developers who cannot receive the full SEI-developed SCAIFE system code the ability to conduct tests with one of the five SCAIFE modules. The UI module is a complex system; the new version of the SCALe tool will help external SCAIFE developers with initial testing and development, even if they eventually replace the UI module with their own tool. The Prioritization and Registration modules are simpler and would take developers less effort to instantiate.

- None: Developers who do not have access to any of the SCAIFE system code can use some of the recommendations in the manual. For example, they can auto-generate SCAIFE client code and server code in whatever languages their own tools are written. (Using the formally defined SCAIFE API, the technical manual provides details about how to use Swagger-Codegen to auto-generate server and client code in a wide variety of popularly used languages.) In their initial testing between modules, they must instantiate a server and begin testing, especially for integration testing between servers. The instructions in the manual tell users to start up all five SCAIFE modules and populate a project in the SCAIFE DataHub. After that, the manual tells them to interact via the Swagger-Editor with the active Registration and DataHub modules, as preparation to do this from their own tools. Users must upload new static analysis tool output to the DataHub module for the SCAIFE project, which was previously uploaded on the DataHub. To begin their testing, these developers will need to instantiate at least some code mockups and return data from at least one server. Eventually, they will need to instantiate each of the SCAIFE module servers.
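For developers starting without any SCAIFE code, a mockup server that returns canned data can be very small. The sketch below uses only the Python standard library and is not derived from the SCAIFE API: the `/example/status` path and the response body are hypothetical stand-ins. A real mockup would return data shaped like the responses defined in the API specification, and server stubs can instead be auto-generated with Swagger-Codegen as the manual describes.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical canned responses keyed by request path.
CANNED = {"/example/status": {"status": "ok", "module": "mock-datahub"}}


class MockHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        body = CANNED.get(self.path)
        status = 200 if body is not None else 404
        if body is None:
            body = {"error": "not found"}
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args):
        pass  # suppress per-request logging


def start_mock_server():
    """Start the mock on a free local port in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), MockHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch_json(url):
    """GET a URL and decode the JSON body, ignoring any proxy settings."""
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(url, timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))


server = start_mock_server()
port = server.server_address[1]
reply = fetch_json(f"http://127.0.0.1:{port}/example/status")
print(reply)
server.shutdown()
server.server_close()
```

A mock like this lets a client under development exercise its request and parsing logic long before a full module server exists.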

Instantiating SCAIFE Modules: Use Cases

We expect developers to want to instantiate SCAIFE modules in the following order of priority, with the UI module being the most common:

- UI module--This module is where the static analysis tool/framework sits. For example, we use a research version of the SEI SCALe static analysis framework for this module in the instantiated versions of the SCAIFE architecture that we have released. There are benefits for static analysis tool or framework developers or users to integrate their tools with the modular SCAIFE system. In particular, with relatively small effort, they can enable their static analysis tools to use automated machine-learning classifiers for static analysis meta-alerts. They can also use sophisticated prioritization schemes for static analysis meta-alerts, all while still using tools that they prefer.

- DataHub module--This module stores SCAIFE projects and packages, and various data structures used as part of these projects and packages. We designed this module to accept data from multiple UI modules. Organizations that want to optimize the use of particular data structures (e.g., to optimize for performance or scalability, or to reuse their organizations' data archives) may wish to instantiate the DataHub module.

- Statistics module--This module performs classification, active learning (through optional adaptive heuristics), and hyper-parameter optimization. It stores the classification schemes related to particular SCAIFE projects and the associated DataHub and UI modules for these projects. Some static analysis framework tools, such as SWAMP-in-a-box, include both a GUI front end like the SCAIFE UI module and a database back end for aggregating project and package data like the SCAIFE DataHub module. SWAMP also does its own registration. In this case, users can simply consider the SCAIFE DataHub, UI, and Registration modules as a single consolidated module and instantiate API calls only into or out of the consolidated module (i.e., instantiating calls to or from the Prioritization and Statistics modules).

- Prioritization module--This module stores meta-alert prioritization schemes, with permission information related to the SCAIFE project and the uploading organization name.

- Registration module--This module handles user and inter-server authentication.

How to Get Started With the API

Most developers of flaw-finding static analysis (FFSA) tools and alert-aggregator frameworks (such as SCALe and SWAMP-in-a-box) will be interested in the UI module's API definition. To enable their tool to interact with the SCAIFE system, their tool needs to instantiate the UI module's API.

Some researchers and developers, however, will focus on the Statistics module's API to improve classification, active learning, and automated hyper-parameter optimization. If they are collaborating with the SEI on research, they can develop new algorithms and modularly incorporate them within the full SCAIFE-system prototype we have developed. If not, they can modify their own tools to instantiate the Statistics module's API and then interact with a SCAIFE system whose other modules come from various sources. For example, for a UI module they could use the version of SCALe that we developed to work modularly with SCAIFE (https://github.com/cmu-sei/SCALe/tree/scaife-scale). For the remaining three SCAIFE modules, they could either develop their own or use modules developed by others, such as collaborators or, we hope in the future, open-source projects shared publicly for all the SCAIFE modules.

Similarly, some researchers or developers want to improve the performance, security, resilience, and scalability of aggregated data storage, which is eventually expected to be large. Those users will therefore want to focus on the DataHub module's API. We expect a smaller number of researchers and developers to implement the Registration or Prioritization modules in research projects focused on those modules. These APIs will still be useful to review and implement, however, because other servers need to interact with them, whether in a client or a server role.

Looking Ahead and Next Steps

One of our DoD collaborators plans to begin instantiating API calls soon. Our new manual is also intended to help additional collaborators who are interested in integrating their tools with the SCAIFE system. We welcome additional DoD organizations that wish to collaborate with us; they can contact us at info@sei.cmu.edu.

Although the manual is most useful for readers who can currently access the SCAIFE code, it can also help non-DoD users understand how API calls can be implemented efficiently in three situations: without any SCAIFE code, with a partial set of implemented SCAIFE code (SCALe module only), and with the fully implemented SCAIFE code (which we hope to eventually make publicly available). These users can also learn how to test their own servers if they instantiate SCAIFE API servers and then use the described methods, for example by using the publicly available SCALe (scaife-scale branch) server with a new Registration server that they instantiate.

We expect that our September public release of the research version of SCALe (https://github.com/cmu-sei/SCALe/tree/scaife-scale) will promote broader adoption of the SCAIFE API and enable more users to process and manage their static analysis alerts successfully.

Additional Resources

Read the technical manual (and we hope you will follow the steps to test and instantiate SCAIFE code!): How to Instantiate SCAIFE API Calls: Using SEI SCAIFE Code, the SCAIFE API, Swagger-Editor, and Developing Your Tool with Auto-Generated Code.

Examine the YAML specification of the latest published SCAIFE API version on GitHub.

Download the scaife-scale branch of SCALe: https://github.com/cmu-sei/SCALe/tree/scaife-scale

Read the SEI white paper, SCAIFE API Definition Beta Version 0.0.2 for Developers.

Read the SEI technical report, Integration of Automated Static Analysis Alert Classification and Prioritization with Auditing Tools: Special Focus on SCALe.

Learn more about using automation to prioritize alerts from static analysis tools.

Review the 2019 SEI presentation, Rapid Construction of Accurate Automatic Alert Handling System.

Read other SEI blog posts about SCALe.

Read other SEI blog posts about static analysis alert classification and prioritization.

Watch the SEI webinar, Improve Your Static Analysis Audits Using CERT SCALe's New Features.

Read the SEI press release, SEI CERT Division Releases Downloadable Source Code Analysis Tool.

Read the SEI blog post, Test Suites as a Source of Training Data for Static Analysis Alert Classifiers.

Read the Software QUAlities and their Dependencies (SQUADE, ICSE 2018 workshop) paper, Prioritizing Alerts from Multiple Static Analysis Tools, Using Classification Models.

Read the SEI blog post, Prioritizing Security Alerts: A DoD Case Study. (In addition to discussing other new SCALe features, it details how the audit archive sanitizer works.)

Read the SEI blog post, Prioritizing Alerts from Static Analysis to Find and Fix Code Flaws.

View the presentation, Challenges and Progress: Automating Static Analysis Alert Handling with Machine Learning.

View the presentation (PowerPoint), Hands-On Tutorial: Auditing Static Analysis Alerts Using a Lexicon and Rules.

Watch the video, SEI Cyber Minute: Code Flaw Alert Classification.

View the presentation, Rapid Expansion of Classification Models to Prioritize Static Analysis Alerts for C.

View the presentation, Prioritizing Alerts from Static Analysis with Classification Models.

Read the SEI paper, Static Analysis Alert Audits: Lexicon & Rules, presented at the IEEE Cybersecurity Development Conference (IEEE SecDev), which took place in Boston, MA on November 3-4, 2016.

Read the SEI paper, SCALe Analysis of JasPer Codebase.

Read the SEI technical note, Improving the Automated Detection and Analysis of Secure Coding Violations.

Read the SEI technical note, Source Code Analysis Laboratory (SCALe).

More By The Author

Release of SCAIFE System Version 2.0.0 Provides Support for Continuous-Integration (CI) Systems

• By Lori Flynn

Release of SCAIFE System Version 1.0.0 Provides Full GUI-Based Static-Analysis Adjudication System with Meta-Alert Classification

• By Lori Flynn
