Automated Code Generation for Future Compatible High-Performance Graph Libraries

PUBLISHED IN

Artificial Intelligence EngineeringFor many DoD missions, our ability to collect information has outpaced our ability to analyze that information. Graph algorithms and large-scale machine learning algorithms are a key to analyzing the information agencies collect. They are also an increasingly important component of intelligence analysis, autonomous systems, cyber intelligence and security, logistics optimization, and more. In this blog post, we describe research to develop automated code generation for future-compatible graph libraries: building blocks of high-performance code that can be automatically generated for any future platform.

Graph analytics and other data-intensive applications are characterized by unpredictable memory access and low computation intensity. Called "irregular algorithms," these applications are hard to implement efficiently even on today's high-performance computing architectures. Rapid advances in hardware architectures add to the difficulty, making it necessary to re-implement and re-tune algorithms--a process that can be as onerous as repeating the entire development cycle--to achieve the best performance for each new platform.

At the SEI's Emerging Technology Center, we work in rapidly advancing technologies to make recent innovations practical for missions. One of our areas of focus is advanced computing. In particular, we are currently working to automate code generation of high-performance libraries of graph algorithms tuned for a few targeted hardware architectures to establish a foundation for future automated code generation on a wider range of systems.

To date, the graph research community has made limited progress in applying automated code generation to irregular algorithms due to the lack of appropriate algorithmic abstractions. Recent advancements in specifying primitive algorithm building blocks in terms of sparse linear algebra finally make the application of code generation technologies for irregular algorithms possible.

Why Graph Algorithms?

Heterogeneous high-performance computing is becoming increasingly complex, given the federal government's emphasis on exascale. On a parallel front, data-intensive computing and graph algorithms are hard to program efficiently on current architectures due to their unpredictable memory access and low computation intensity. Given the trend in exascale computing, programming these systems will become increasingly hard.

Graphs are, at their core, a fundamental mathematical concept that underlies many computational and data-intensive tasks, such as the detection of patterns of behaviors and relationships. Graph algorithms are relevant in a number of areas of importance to the DoD, including

- Intelligence, Surveillance, and Reconnaissance (ISR). Graphs represent entities and relationships detected through a variety of intelligence sources and can help identify anomalous patterns of life.

- Social Network Analysis. Graphs represent relationships between individuals or documents (between 10,000 - 10,000,000 individuals and interactions), allowing government analysts to identify hidden social networks.

- Cybersecurity. Graphs help government analysts identify cyber attacks or malicious software by representing communication patterns of computers on a network during billions of network events.

The amount of data collected continues to grow exponentially, making computations much harder to manage. At the same time, the hardware space has become more complex. Every time a new hardware architecture becomes available, developers and graph experts must first become experts in the hardware and figure out how to create the data structures and the sequence of operations that will run well on these platforms.

To address these issues, we are working to separate the complexity of graph algorithm development from the complexity of the underlying hardware systems. Our work in this area has resulted in several efforts with external collaborators, including the GraphBLAS forum, a worldwide consortium of graph research experts, to develop primitives (i.e., data structures and operations) in this space. That work has also led to our contributions to an open-source reference implementation of these primitives in the GraphBLAS Template Library (GBTL).

Write Once, Run Everywhere

Today, if you want to develop high-performance graph analytics, you must be an expert in graph algorithms, as well as an expert in hardware. It can be hard to find those skillsets in one member of a team and even harder to find a team of experts with these skills.

If we achieve a separation of concerns--where graph experts can program their applications using higher-level math abstractions and leverage another team's hardware expertise--we can develop software for high-performance graph libraries much more easily. Our work reinforced the overall mission of the GraphBLAS forum: allow graph experts to write once, run everywhere, and run fast.

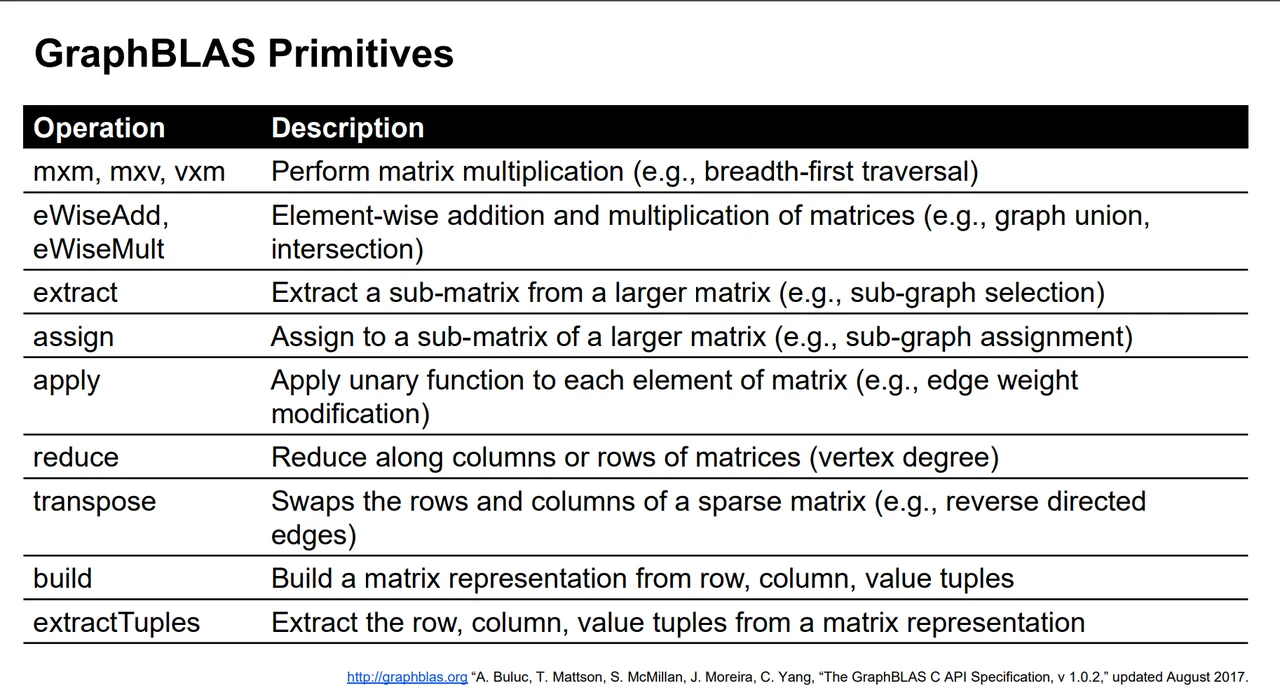

We developed an interface that has nine types of primitives from which many graph algorithms can be written. The GraphBLAS Application Programming Interface (API) Specification, which defines these primitives, was released in May 2017 and can be found at graphblas.org.

Automated Code Generation

With primitives established and graph algorithm developers writing at a higher level, our current research focuses on automatic generation of high-performance implementations of these primitives for arbitrary hardware--past, present, or future. Using formal specifications of hardware capabilities, Carnegie Mellon University's Spiral code generation technology can already automatically generate high-performance signal-processing codes.

Working with Franz Franchetti, a professor in the Department of Electrical and Computer Engineering at Carnegie Mellon University and a leader of the Spiral project, we are expanding Spiral's automated code generation technology to use mathematical formalization of the GraphBLAS primitives to automatically generate the high-performance graph applications needed for targeted hardware platforms. Our other collaborators Tze Meng Low and James Hoe (Carnegie Mellon University Department of Electrical and Computer Engineering) are Spiral team members, bringing expertise in reconfigurable computing and high-level hardware description and synthesis. With Franchetti, they are performers on a number of related DARPA programs.

Looking Ahead

To evaluate our work and make it more widely accessible, we are targeting multiple benchmark applications in the graph analytics space, including the Graph 500 benchmark (run twice a year) and the Static Graph Challenge to be held again at this year's IEEE High Performance Extreme Computing Conference.

Our work advances the state of the art in code generation by introducing concepts needed to capture graph algorithm primitives, including a parameterization of the structure of sparse data to produce highly tuned codes for irregular algorithms. This process targets a user-specified hardware platform and will be used to develop codes for systems ranging from small FPGA-accelerated systems to large-scale supercomputers.

The next step in our work is to enhance the code generation system to also select appropriate hardware: based on constraints like cost, size, weight, and power, the system will select the appropriate hardware from available COTS components, all while generating high-performance code for the selected hardware. This process, referred to as hardware-software co-optimization, is the next step to achieving the ultimate goal of co-synthesis where the hardware would be completely designed. These goals are aligned with a number of past DARPA programs such as Building Resource Adaptive Software Systems (BRASS) and Power Efficiency Revolution for Embedded Computing Technologies (PERFECT), and new efforts like DARPA's Software-Defined Hardware (SDH).

We welcome your feedback in the comments section below. If you have questions or feedback on this work, please don't hesitate to contact us at info@sei.cmu.edu.

Additional Resources

View a program of the 2017 Research Review, which included a presentation of this work.

More By The Author

More In Artificial Intelligence Engineering

PUBLISHED IN

Artificial Intelligence EngineeringGet updates on our latest work.

Sign up to have the latest post sent to your inbox weekly.

Subscribe Get our RSS feedMore In Artificial Intelligence Engineering

Get updates on our latest work.

Each week, our researchers write about the latest in software engineering, cybersecurity and artificial intelligence. Sign up to get the latest post sent to your inbox the day it's published.

Subscribe Get our RSS feed