Six Best Practices for Securing a Robust Domain Name System (DNS) Infrastructure

PUBLISHED IN

Situational Awareness

The Domain Name System (DNS) is an essential component of the Internet, a virtual phone book of names and numbers, but we rarely think about it until something goes wrong. The recent distributed denial-of-service (DDoS) attack against Internet performance management company Dyn temporarily wiped out access to websites including Amazon, PayPal, Reddit, and the New York Times for millions of users along the U.S. Eastern Seaboard and in Europe. That attack showed that DNS serves as the foundation for the security and operation of internal and external network applications. DNS is also the backbone for other services critical to organizations, including email, external web access, file sharing, and voice over IP (VoIP). Network administrators can, however, take steps to ensure the security and resilience of their DNS infrastructure and avoid common security pitfalls. In this blog post, I outline six best practices for designing a secure, reliable DNS infrastructure and present an example of a resilient organizational DNS infrastructure.

Remote Infrastructures

In my role as a researcher on CERT's Network Situational Awareness Team (see the recent post "Distributed Denial of Service Attacks: Four Best Practices for Prevention and Response"), I often work with DNS data. My aim is to help analysts respond rapidly to emerging threats and uncover hidden dangers in their infrastructure.

As organizations migrate, in whole or in part, to cloud-based services, traditional on-site computing infrastructure moves to remote sites, typically under the control of a third party. Too often, in the wake of an attack, the victim organization cannot reliably resolve the names it needs to reach that remote infrastructure, and so it cannot conduct business. When you can't reach your DNS servers, or can't trust the information they provide, your business grinds to a halt.

In addition to mapping Internet domain names to IP addresses, DNS provides essential security information for an organization's network. Examples include records designed specifically to help prevent spam and phishing, and records designed to ensure the integrity of the information a DNS server provides. Too often, computer security efforts focus on edge- and endpoint-based measures centered on log analysis. It is important to remember that DNS queries and responses can be a rich source of security-related information about activity on your network. DNS records themselves also reveal a wealth of information about an organization's infrastructure, to both legitimate users and potential attackers.

While some may argue that the lack of DNS visibility to the average user is one source of its elegance, others might argue that it allows bad actors to escape detection. One interesting trend that I have seen is that some administrators, typically for performance reasons, will disable query logging on their nameservers. This practice is unfortunate because DNS logging is a primary tool that an organization can use to detect a bad actor wreaking havoc on its DNS infrastructure or in other parts of the environment.
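Query logs only pay off if someone reads them. The Python below is a minimal sketch of that idea. It tallies queries per client and per record type, then flags clients whose query volume exceeds a limit.

Several pieces are assumptions, not part of any product:

- The four-field log format and the sample entries are hypothetical.
- The `per_client_limit` threshold is illustrative.
- Real nameserver logs use their own product-specific formats, so the hypothetical `parse_line` helper would need adapting.

```python
from collections import Counter

# Hypothetical, simplified log format (real nameserver logs differ by product):
#   <timestamp> <client-ip> <query-name> <query-type>
SAMPLE_LOG = """\
2024-01-01T11:10:01Z 10.0.1.15 www.example.com A
2024-01-01T11:10:02Z 10.0.1.15 mail.example.com MX
2024-01-01T11:10:02Z 10.0.7.80 x1a9.badexample.net TXT
2024-01-01T11:10:02Z 10.0.7.80 x1b2.badexample.net TXT
2024-01-01T11:10:03Z 10.0.7.80 x1c7.badexample.net TXT
"""


def parse_line(line):
    """Split one log line into (client, qname, qtype); return None if malformed."""
    fields = line.split()
    if len(fields) != 4:
        return None
    _, client, qname, qtype = fields
    return client, qname.lower().rstrip("."), qtype.upper()


def summarize(log_text, per_client_limit):
    """Count queries per client and per type; list clients above the limit."""
    per_client = Counter()
    per_qtype = Counter()
    for line in log_text.splitlines():
        parsed = parse_line(line)
        if parsed is None:
            continue  # skip lines that do not match the assumed format
        client, _, qtype = parsed
        per_client[client] += 1
        per_qtype[qtype] += 1
    noisy = sorted(c for c, n in per_client.items() if n > per_client_limit)
    return per_client, per_qtype, noisy


if __name__ == "__main__":
    clients, qtypes, noisy = summarize(SAMPLE_LOG, per_client_limit=2)
    print(clients.most_common(), qtypes.most_common(), noisy)
```

With a limit of 2, only `10.0.7.80` is flagged. It sent a burst of TXT queries for many distinct subdomains, which is the kind of pattern an analyst would want to look at more closely.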

Besides logs, monitoring DNS queries and responses in the network traffic itself is a useful strategy for security analysts. For example, establishing a baseline of typical traffic volume to and from an organization's DNS servers helps analysts quickly spot deviations, such as denial-of-service attacks that target or leverage their nameservers.
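Here is a minimal sketch of the baseline idea. It assumes per-minute query counts have already been extracted from traffic or logs. It flags any minute whose count exceeds the mean of the preceding window by more than `k` population standard deviations.

The following values are illustrative assumptions, not tuned settings:

- the window size
- `k`
- the 1.0 floor on the spread
- the sample counts

```python
import statistics


def flag_spikes(counts, window=5, k=3.0):
    """Return indices whose count exceeds mean + k * stdev of the preceding window."""
    flagged = []
    for i in range(window, len(counts)):
        history = counts[i - window:i]
        mean = statistics.mean(history)
        spread = statistics.pstdev(history)
        # Floor the spread so a perfectly flat baseline does not flag tiny blips.
        threshold = mean + k * max(spread, 1.0)
        if counts[i] > threshold:
            flagged.append(i)
    return flagged


# Hypothetical per-minute query counts for one nameserver; minute 7 is a surge.
per_minute = [1200, 1180, 1220, 1210, 1190, 1205, 1215, 9800, 1200]
print(flag_spikes(per_minute))
```

Because the surge enters later windows and inflates their baseline, a production detector would likely exclude flagged minutes from the history. This sketch keeps the logic deliberately simple.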

DNS Best Practices

Consider these best practices when designing a secure, reliable DNS infrastructure:

- Only make available what must be available. One of the first things organizations can do is ensure that a server holds only the information needed by the parties that use it. If you have domain names that must be resolved by the general public, only the servers responsible for that function, and only that data, should be accessible to the general public. All other DNS servers and all other DNS data should be restricted to internal access only. Publicly accessible servers should be authoritative-only; they should not perform recursion. Individuals outside your organization do not need to use your recursive nameservers; they should use the nameservers provided by their own Internet service providers (ISPs).

- Ensure availability. Every DNS server should be part of a high-availability (HA) pair or cluster so that if one member fails, the others can assume its load. Membership in an HA cluster ensures continuous availability for any DNS server resource, whether it is a primary or secondary nameserver, recursive or authoritative. For publicly accessible servers, an organization should list geographically diverse nameservers in its domain name registration to guard against physically localized events. It should also provide routing diversity, preferably through providers with different Autonomous System Numbers (ASNs). This protects against large-scale denial-of-service attacks that may affect any single provider's ability to serve your DNS data.

- Primary servers should be hidden. The servers that host the master copy of any zone should be hidden primaries. That means that they only exist to serve that data to the secondary nameservers throughout the organization; they are not listed as nameservers for any zone, and they are not accessible to any end-user. The secondary nameservers are those meant to answer queries; primary nameservers should neither accept nor respond to DNS queries from end-users. This helps to ensure the integrity of the DNS data by limiting access to the primary nameservers to just those individuals responsible for the maintenance of the servers and the data that resides on them. In the case of externally available nameservers, the primaries should be behind a firewall, with appropriate firewall rules in place to ensure only the secondaries are allowed to perform queries and transfers from the primaries.

- Think locally. Whenever possible, use nameservers that are local to an organization's users. For example, an organization with multiple branch or regional offices should have both recursive and authoritative nameservers on-site to serve those locations, rather than relying on a single set of nameservers at headquarters. This distributes query load across multiple servers and helps ensure that names are resolved as quickly as possible. A typical web page may contain dozens or even hundreds of linked resources, each of which may require a DNS lookup in order for the page to load. High latency between the end-user and the assigned nameserver can cause unnecessary delays in load times, and may increase help-desk load.

- Restrict access. Zone transfers should be protected by on-server access control lists (ACLs) and transaction signatures (TSIGs) as well as by firewall ACLs. Primary nameservers should be accessible only to the employees responsible for their maintenance and upkeep. Enforce this not only through privileged account management but also by accepting connections only from the hosts those employees use. Secondaries should deny all zone transfer requests. Nameservers that serve authoritative data should not also act as recursive servers; keeping the two roles separate helps ensure availability by limiting each server's attack surface. All traffic to a nameserver should be restricted by ACLs on the server itself as well as by firewall-based ACLs. These ACLs limit which traffic reaches the server, mitigating certain classes of attacks such as denial-of-service attacks. They also ensure that traffic that does reach the server is authorized to use the service.

- Protect data integrity. Whenever appropriate, but particularly for publicly accessible zone data, Domain Name System Security Extensions (DNSSEC) should be implemented to ensure the integrity of the data being served. DNSSEC digitally signs DNS data so that validating resolvers can verify its origin and integrity before using it to answer queries. According to the Internet Corporation for Assigned Names and Numbers (ICANN), full deployment of DNSSEC will help ensure that end-users are connecting to the actual website or other service corresponding to a particular domain name. This verification relies on public-key cryptography rather than X.509 certificates. Each signed zone publishes its public keys in DNSKEY records, and its parent zone publishes a Delegation Signer (DS) record that vouches for the child's key. These links form a chain of trust from the root zone at the top of the DNS tree down to the signed zone. A validating resolver, such as the end-user's recursive nameserver, checks the chain link by link.
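The "Only make available what must be available" practice calls for publicly accessible servers to be authoritative-only. A minimal sketch of that decision rule follows. The server answers names inside its own zones and refuses everything else, whether or not the client set the recursion-desired (RD) bit.

Some assumptions behind the sketch:

- The zone list is hypothetical.
- The returned strings only stand in for what a real server would send: an authoritative answer, or an RCODE such as REFUSED.

```python
# Hypothetical zones served by a public, authoritative-only nameserver.
PUBLIC_ZONES = ("example.com", "example.org")


def normalize(name):
    """Lower-case a domain name and strip any trailing root dot."""
    return name.lower().rstrip(".")


def in_zone(qname, zone):
    """True if qname is the zone apex or lies below it (label-aligned match)."""
    q, z = normalize(qname), normalize(zone)
    return q == z or q.endswith("." + z)


def public_server_action(qname, recursion_desired):
    """Authoritative-only policy: answer for our zones, refuse everything else.

    recursion_desired (the RD bit) is deliberately ignored: this server never
    recurses on behalf of outside clients.
    """
    if any(in_zone(qname, z) for z in PUBLIC_ZONES):
        return "ANSWER"
    return "REFUSED"
```

The label-aligned suffix check matters. A naive `endswith("example.com")` would wrongly treat `badexample.com` as one of the organization's zones.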
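The "Ensure availability" practice asks for publicly listed nameservers that are spread across locations and routing providers. A minimal audit sketch follows. It reports problems with a nameserver inventory that lacks that diversity.

Assumptions in the sketch:

- The `NameServer` record and its site labels are hypothetical.
- The ASNs come from the range reserved for documentation.
- The thresholds of two sites and two ASNs are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NameServer:
    name: str  # published nameserver host name
    site: str  # physical location (hypothetical label)
    asn: int   # ASN of the provider announcing the server's address


def diversity_report(servers, min_sites=2, min_asns=2):
    """Return a list of diversity problems with the published set (empty if none)."""
    problems = []
    sites = {s.site for s in servers}
    asns = {s.asn for s in servers}
    if len(servers) < 2:
        problems.append("fewer than two published nameservers")
    if len(sites) < min_sites:
        problems.append(f"only {len(sites)} site(s); want at least {min_sites}")
    if len(asns) < min_asns:
        problems.append(f"only {len(asns)} ASN(s); want at least {min_asns}")
    return problems


good = [NameServer("ns1.example.com", "east", 64496),
        NameServer("ns2.example.com", "west", 64497)]
print(diversity_report(good))
```

In practice, the site and ASN data would come from your own inventory and routing information. This sketch only shows the check itself.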
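The "Primary servers should be hidden" practice comes down to two checkable conditions:

- The primary's address must not appear among the published nameservers.
- The primary's firewall must admit only the secondaries.

A minimal sketch of that check follows. All addresses are hypothetical, drawn from the documentation ranges, and the function name is illustrative.

```python
import ipaddress


def check_hidden_primary(primary, published_ns, primary_allowlist, secondaries):
    """Return violations of the hidden-primary rule (empty list if compliant).

    primary:           address of the hidden primary
    published_ns:      addresses of nameservers listed in the zone's NS records
    primary_allowlist: sources the primary's firewall permits
    secondaries:       addresses of the organization's secondary nameservers
    """
    ip = ipaddress.ip_address
    primary_addr = ip(primary)
    published = {ip(a) for a in published_ns}
    allowed = {ip(a) for a in primary_allowlist}
    secs = {ip(a) for a in secondaries}
    problems = []
    if primary_addr in published:
        problems.append("primary is listed as a published nameserver")
    extra = sorted(str(a) for a in allowed - secs)
    if extra:
        problems.append("non-secondary sources allowed: " + ", ".join(extra))
    return problems


secs = ["198.51.100.10", "198.51.100.11"]
print(check_hidden_primary("192.0.2.5", secs, secs, secs))
```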
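The "Think locally" practice is easiest to see as arithmetic. The sketch below estimates the extra client-side wait caused by using a distant resolver instead of a local one.

It rests on several simplifying assumptions:

- Every lookup misses the client's cache.
- Both resolvers take equally long to produce an answer.
- Lookups proceed in fixed-size parallel batches.

The page size and round-trip times in the example are hypothetical.

```python
import math


def added_dns_delay_ms(lookups, local_rtt_ms, remote_rtt_ms, parallel=1):
    """Extra time spent waiting on DNS with a remote rather than a local resolver.

    Assumes cache misses, equal resolver processing time, and lookups issued
    in batches of `parallel` concurrent queries.
    """
    rounds = math.ceil(lookups / parallel)
    return rounds * (remote_rtt_ms - local_rtt_ms)


# Hypothetical page: 60 distinct hostnames; 2 ms RTT to a branch resolver
# versus 40 ms to a resolver at headquarters.
print(added_dns_delay_ms(60, 2, 40))              # 2280 (fully serialized)
print(added_dns_delay_ms(60, 2, 40, parallel=6))  # 380
```

Even with parallel lookups, the extra round-trip time is paid once per batch. That is why an on-site resolver noticeably shortens page loads for branch users.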
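The "Restrict access" practice layers an address ACL with a TSIG shared-key check for zone transfers. The sketch below shows those two gates together. It is deliberately simplified.

- Real TSIG (RFC 8945) computes its MAC over the full DNS message plus the TSIG variables (key name, algorithm, time signed, fudge) in a defined wire format.
- Here the MAC is simply HMAC-SHA256 over an opaque request byte string.
- The ACL, key name, and secret are hypothetical.

```python
import hashlib
import hmac
import ipaddress

# Hypothetical transfer ACL and shared secret; real values come from configuration.
TRANSFER_ACL = [ipaddress.ip_network("198.51.100.0/28")]
TSIG_KEYS = {"xfr-key.example.com": b"hypothetical-shared-secret"}


def sign(message: bytes, key_name: str) -> bytes:
    """HMAC-SHA256 of the message under the named key (simplified TSIG idea)."""
    return hmac.new(TSIG_KEYS[key_name], message, hashlib.sha256).digest()


def allow_transfer(source_ip: str, message: bytes, key_name: str, mac: bytes) -> bool:
    """Permit a zone transfer only if it passes BOTH the address ACL and the MAC check."""
    addr = ipaddress.ip_address(source_ip)
    if not any(addr in net for net in TRANSFER_ACL):
        return False  # source not on the ACL: reject before looking at keys
    key = TSIG_KEYS.get(key_name)
    if key is None:
        return False  # unknown key name
    expected = hmac.new(key, message, hashlib.sha256).digest()
    return hmac.compare_digest(expected, mac)  # constant-time comparison


request = b"AXFR example.com"
print(allow_transfer("198.51.100.5", request, "xfr-key.example.com",
                     sign(request, "xfr-key.example.com")))
```

Requiring both gates means a stolen key is useless from an unlisted host, and a spoofed source address is useless without the key.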
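The DNSSEC chain of trust described under "Protect data integrity" can be checked one link at a time: a parent zone's DS record must match a hash of the child zone's DNSKEY. The sketch below computes that hash using the DS construction from RFC 4034 (section 5.1.4) with the SHA-256 digest type (type 2, RFC 4509).

The sketch has deliberate limits:

- It handles ASCII names only.
- It checks only the DS-to-DNSKEY link. A real validator must also verify the RRSIG signatures over the DNSKEY set and the answer records.
- The owner name and the key bytes are placeholders.

```python
import hashlib
import struct


def name_to_wire(name: str) -> bytes:
    """Canonical wire format: lower-cased, length-prefixed labels, then a zero byte."""
    labels = [label for label in name.lower().rstrip(".").split(".") if label]
    return b"".join(bytes([len(label)]) + label.encode("ascii") for label in labels) + b"\x00"


def dnskey_rdata(flags: int, protocol: int, algorithm: int, public_key: bytes) -> bytes:
    """DNSKEY RDATA layout: Flags (16 bits) | Protocol (8) | Algorithm (8) | Public Key."""
    return struct.pack("!HBB", flags, protocol, algorithm) + public_key


def ds_digest_sha256(owner: str, rdata: bytes) -> bytes:
    """DS digest type 2: SHA-256 over owner name (wire format) | DNSKEY RDATA."""
    return hashlib.sha256(name_to_wire(owner) + rdata).digest()


def key_matches_parent_ds(owner: str, rdata: bytes, parent_ds_digest: bytes) -> bool:
    """One link in the chain of trust: does the child's key hash to the parent's DS?"""
    return ds_digest_sha256(owner, rdata) == parent_ds_digest


# Placeholder key: 64 arbitrary bytes standing in for an ECDSA P-256 public key.
# Flags 257 marks a key-signing key; protocol is always 3; algorithm 13 is ECDSAP256SHA256.
child_key = dnskey_rdata(257, 3, 13, bytes(range(64)))
parent_ds = ds_digest_sha256("example.com", child_key)
print(key_matches_parent_ds("example.com", child_key, parent_ds))  # True
```

Replacing the child's key without updating the parent's DS breaks the match. That is exactly what a validating resolver detects when a zone's keys are tampered with.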

Example of a Reliable, Secure DNS Infrastructure

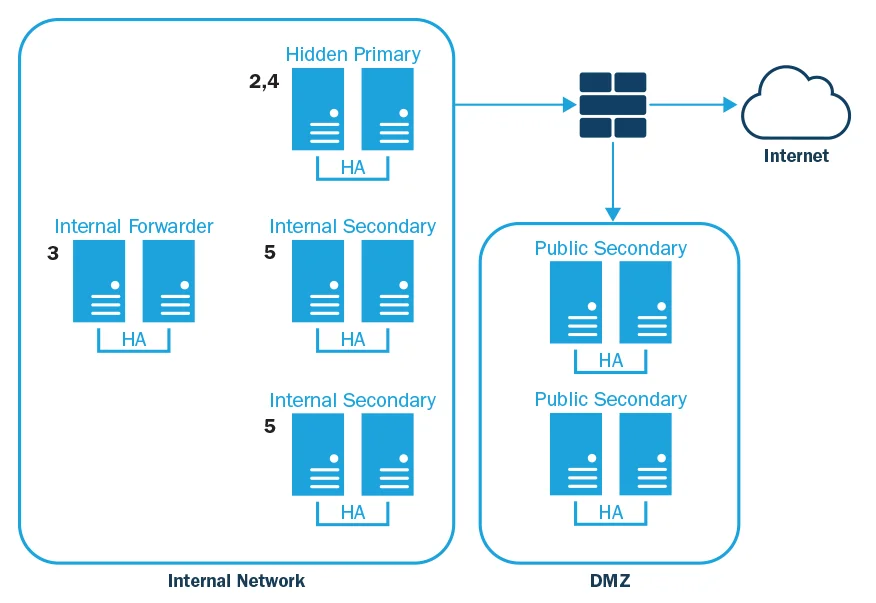

The diagram above shows an example of a secure, resilient DNS infrastructure, representative of any organizational division that has direct access to the Internet.

- Publicly available HA secondaries in the DMZ. Only accept queries from external IPs.

- External hidden HA primaries behind the internal DMZ firewall. Only accept queries and transfers from the public secondaries. Implement appropriate ACLs and require TSIGs for zone transfers. Only accessible remotely by administrators from a small, known set of internal IPs.

- Internal HA forwarder that accepts queries from other internal nameservers for external names they cannot resolve themselves. Sits inside the firewall and is inaccessible from the Internet (enforced via ACLs).

- Internal hidden HA primaries. Only accept queries and transfers from internal secondaries, and updates from authorized DHCP servers and any Active Directory servers in use. Implement appropriate ACLs and require TSIGs for zone transfers. Only accessible remotely by administrators from a small, known set of internal IPs.

- Internal HA secondaries. Only accept queries from internal IP ranges. Deploy two HA sets per region or branch (two secondaries for each location, each of which is an HA pair or cluster). All internal secondaries also forward to the internal HA forwarder described above, but only for queries outside the zones for which the secondaries are authoritative.

Internal DHCP servers should push dynamic DNS updates to the internal hidden primaries.
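One way to make the example architecture auditable is to write its access rules down as data and default-deny everything else. The sketch below encodes who may send which kind of request to each role described above. It does not model TSIG; only source addresses are checked.

Everything concrete in the sketch is hypothetical:

- the address plan
- the role names
- the action names (`query`, `transfer`, `update`, `manage`)

```python
import ipaddress

net = ipaddress.ip_network

# Hypothetical address plan; substitute your organization's real ranges.
INTERNAL = net("10.0.0.0/8")
PUBLIC_SECONDARIES = net("203.0.113.0/29")
INTERNAL_SECONDARIES = net("10.1.0.0/24")
DHCP_SERVERS = net("10.2.0.0/28")
AD_SERVERS = net("10.2.0.16/28")
ADMIN_HOSTS = net("10.9.0.0/29")


def external(addr):
    """Matches any source outside the internal range."""
    return addr not in INTERNAL


def within(network):
    """Build a rule matching sources inside the given network."""
    return lambda addr: addr in network


# role -> action -> list of source rules; anything not listed is denied.
POLICY = {
    "public_secondary": {"query": [external]},
    "external_primary": {"query": [within(PUBLIC_SECONDARIES)],
                         "transfer": [within(PUBLIC_SECONDARIES)],
                         "manage": [within(ADMIN_HOSTS)]},
    "internal_forwarder": {"query": [within(INTERNAL_SECONDARIES)]},
    "internal_primary": {"query": [within(INTERNAL_SECONDARIES)],
                         "transfer": [within(INTERNAL_SECONDARIES)],
                         "update": [within(DHCP_SERVERS), within(AD_SERVERS)],
                         "manage": [within(ADMIN_HOSTS)]},
    "internal_secondary": {"query": [within(INTERNAL)]},
}


def is_allowed(role, action, source):
    """Default deny: allowed only if some rule for (role, action) matches source."""
    addr = ipaddress.ip_address(source)
    return any(rule(addr) for rule in POLICY.get(role, {}).get(action, []))


print(is_allowed("internal_primary", "update", "10.2.0.3"))
```

Keeping the policy in one table makes it easy to compare against the on-server and firewall ACLs, which should express the same rules.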

Wrapping Up and Looking Ahead

While there will always be new DNS-related attacks on the horizon, many of today's attacks can be eliminated or mitigated through a well-designed DNS infrastructure. Such an infrastructure restricts access to only those who have a legitimate need for the service, and only to the data necessary for their designated role.

References

NIST SP 800-81-2, Secure Domain Name System (DNS) Deployment Guide, makes recommendations on how to properly deploy and maintain a DNS infrastructure, including the use of DNSSEC and TSIG:

https://nvlpubs.nist.gov/nistpubs/SpecialPublications/NIST.SP.800-81-2.pdf

Rachel Kartch, "Distributed Denial of Service Attacks: Four Best Practices for Prevention and Response," SEI Blog.
