A Taxonomy of Testing

While evaluating the test programs of numerous defense contractors, we have often observed that they are quite incomplete. For example, they typically fail to address all the relevant types of testing that should be used to (1) uncover defects, (2) provide evidence concerning the quality and maturity of the system or software under test, and (3) demonstrate the readiness of the system or software for acceptance and being placed into operation. Instead, many test programs address only a relatively small subset of the potentially relevant types of testing, such as unit testing, integration testing, system testing, and acceptance testing. In some cases, the missing testing types are actually performed (to some extent) but not addressed in test-related planning documents, such as test strategies, system and software test plans (STPs), and the testing sections of systems engineering management plans (SEMPs) and software development plans (SDPs). In many cases, however, they are neither mentioned nor performed. This blog post, the first in a series on the many types of testing, examines the negative consequences of not addressing all relevant testing types and introduces a taxonomy of testing types to help testing stakeholders understand them rather than overlook them.

Through this series of blog posts, I will also challenge testing practitioners to examine and determine the degree of completeness of their

- personal testing expertise, experience, and training

- test programs and associated test planning

It's well known that different types of testing uncover different types of defects and produce different defect removal rates (DRRs). Ignoring relevant types of testing can therefore increase the number of residual defects. Incomplete test planning is just another case of "failing to plan is planning to fail" and "out of sight, out of mind."

Through our work on Independent Technical Assessments (ITAs), we also often observe that many testers and test managers lack sufficient expertise, experience, and training in these useful types of testing. This lack of knowledge is particularly problematic for project leaders, chief system/software engineers, and developers who have had little or no formal training in testing. This problem is exacerbated on Agile projects where everyone on the cross-functional development team is expected to perform all development activities, including testing. In fact, testing stakeholders are often unable to define these types of testing when prompted or are unaware that many of these types of testing even exist.

To address these problems, we developed a taxonomy that organizes roughly 200 types of testing into a structure that helps testing stakeholders understand these testing types and that they can use as a checklist to ensure that no important type of testing falls through the cracks.

Testing and Types of Testing

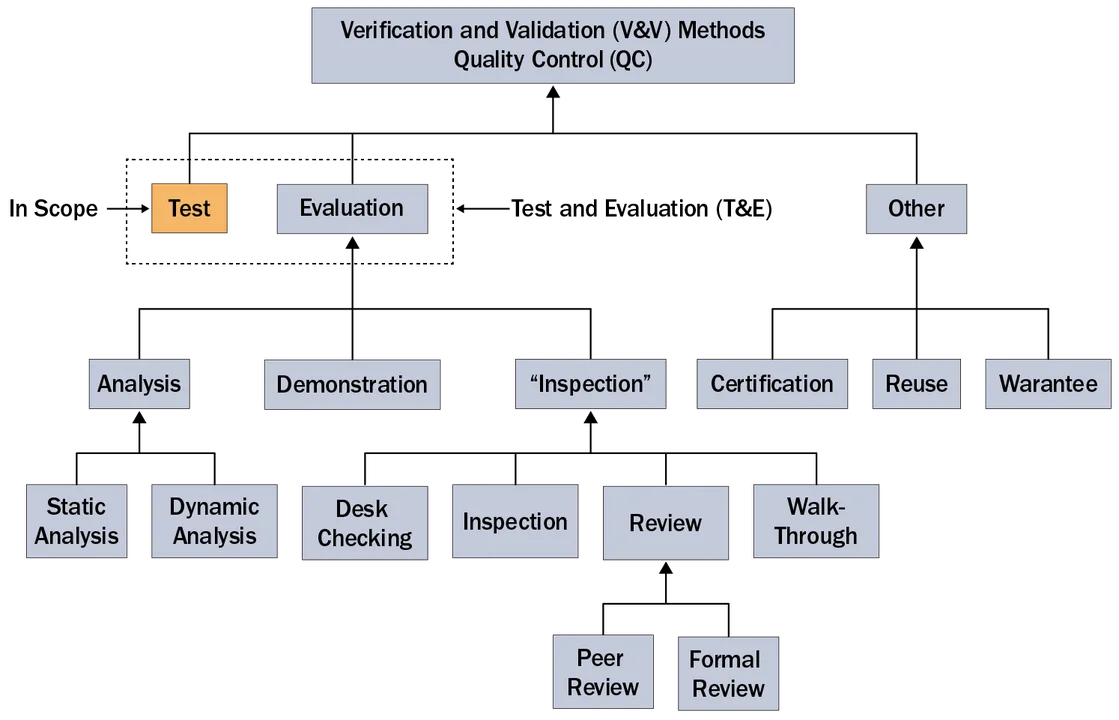

As shown in the figure above, the scope of this series of blog posts is strictly limited to testing. The non-testing aspects of Verification and Validation (V&V), Quality Control (QC), and Test and Evaluation (T&E) are thus out of scope.

In our experience at the SEI, many in the software development community seem to equate testing with quality assurance (QA) and confuse testing with evaluation. I will therefore start by defining testing and types of testing before moving on to the taxonomy of testing types.

For the purpose of this blog post, I define testing as

The execution of an object under test (OUT) under specific preconditions with specific stimuli so that its actual behavior can be compared with its expected or required behavior.

In the definition above, preconditions include

- pretest mode

- states

- stored data

- external conditions

In the definition above, stimuli include

- calls, commands, and messages

- data inputs (data flows)

- trigger events such as state changes and temporal events

In the definition above, actual behavior includes

- data returned and exceptions raised in response to stimuli

- calls, commands, and messages to other external entities

- post-conditions including post-test mode, states, stored data, or external conditions

Given the definition above, a type of testing is a specific way to perform testing (i.e., a class or subclass of testing).
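The definition of testing above can be made concrete with a small sketch. The `Account` class, the `run_test` helper, and the dictionary keys below are hypothetical names introduced only for illustration; they are not part of the taxonomy. The sketch sets up preconditions (stored data and state), applies one stimulus (a call), and compares the actual behavior (data returned, exception raised, and post-condition) with the expected behavior.

```python
class Account:
    """Hypothetical object under test (OUT): a tiny bank account."""

    def __init__(self, balance=0):
        self.balance = balance  # stored data
        self.frozen = False     # state

    def withdraw(self, amount):
        if self.frozen:
            raise PermissionError("account is frozen")
        if amount > self.balance:
            raise ValueError("insufficient funds")
        self.balance -= amount
        return self.balance


def run_test(preconditions, stimulus, expected):
    """Execute the OUT once and compare actual with expected behavior."""
    # Establish the preconditions: stored data and state.
    out = Account(balance=preconditions["balance"])
    out.frozen = preconditions["frozen"]

    # Apply the stimulus (a call) and capture what comes back.
    try:
        returned, raised = out.withdraw(stimulus["amount"]), None
    except Exception as exc:
        returned, raised = None, type(exc).__name__

    # Actual behavior: data returned, exception raised, and post-condition.
    actual = {"returned": returned, "raised": raised, "balance": out.balance}
    return actual == expected, actual


passed, actual = run_test(
    preconditions={"balance": 100, "frozen": False},
    stimulus={"amount": 30},
    expected={"returned": 70, "raised": None, "balance": 70},
)
```

Changing only the preconditions (for example, `"frozen": True`) while keeping the same stimulus yields a different expected behavior, which is why the definition treats preconditions and stimuli as separate inputs to a test.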

A Taxonomy of Testing Types

This work began when it became clear just how many more types of testing existed than were commonly addressed in contractor test planning. While exploring ways to address this incomplete planning, I decided to make an initial list of testing types based on personal experience and a brief online search, including the examination of glossaries of various testing organizations, such as the International Software Testing Qualifications Board (ISTQB). I quickly realized that the software and system engineering communities were using roughly 200 test types during the development and operation of software-reliant systems, far too many to merely create an alphabetized list. Such a long list would be so large and complex that it would be overwhelming and thus of little use to testing's stakeholders. Instead, we needed a taxonomy of testing types to provide structure and to divide and conquer this complexity.

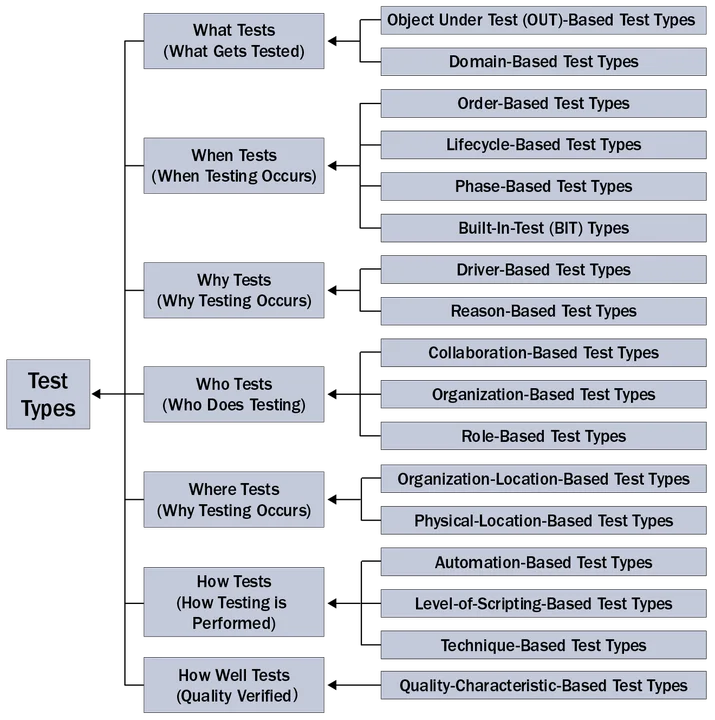

I initially created 12 general types of testing, which eventually grew to 16. One of my colleagues, however, pointed out that a better way would be to first organize them according to which of a standard set of questions they primarily answer: the 5Ws (who, what, when, where, and why) and 2Hs (how and how well). This in turn led to the development of the following questions, which formed the foundation of my taxonomy for organizing testing types:

What are we testing?

When are we testing?

Why are we testing?

Who is performing the testing?

Where is the testing taking place?

How are we testing?

How well are the objects-under-test functioning?

The following figure clarifies the top three levels of the resulting hierarchical taxonomy of testing types.

The seven abstract classes of test types in the second level of the taxonomy (middle column) classify all test types into seven subtypes that answer the corresponding 5W+2H questions. Each of these second-level classes of test types is further classified into one to three third-level abstract classes of test types. As will be shown in this and future blog postings, the actual concrete classes of test types occur in the fourth, fifth, and sixth levels of the hierarchical taxonomy.

One notable characteristic of the figure above is that individual concrete testing types can (and typically do) belong to multiple third-level categories. I have therefore placed each concrete test type under the category that best describes it. The best way to look at this overlap of categories is to consider it analogous to multiple inheritance, where subclasses inherit from multiple superclasses.
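The placement rule described above (each concrete test type is filed under one best-fit category but may also belong to others) can be sketched as a small data structure. This is a minimal illustration, not the taxonomy itself: for brevity it uses the seven 5W+2H questions as categories rather than the third-level classes, the names `TestType`, `filed_under`, and `related_to` are hypothetical, and the category placements in `CATALOG` are assumptions made up for the example.

```python
from dataclasses import dataclass

# The seven second-level questions (5W+2H) named in the text.
QUESTIONS = ("what", "when", "why", "who", "where", "how", "how well")


@dataclass(frozen=True)
class TestType:
    name: str
    primary: str            # the one category the type is filed under
    secondary: tuple = ()   # other categories it could also belong to

    def __post_init__(self):
        # Reject categories that are not one of the seven questions.
        for question in (self.primary, *self.secondary):
            if question not in QUESTIONS:
                raise ValueError(f"unknown category: {question}")


# ASSUMPTION: these placements are illustrative only and are not taken
# from the taxonomy described in this post.
CATALOG = [
    TestType("unit testing", primary="what", secondary=("when",)),
    TestType("acceptance testing", primary="why", secondary=("who", "when")),
]


def filed_under(question, catalog):
    """Test types whose best-fit (primary) category is `question`."""
    return [t.name for t in catalog if t.primary == question]


def related_to(question, catalog):
    """Test types that belong to `question` as primary or secondary."""
    return [
        t.name
        for t in catalog
        if question == t.primary or question in t.secondary
    ]
```

With this layout, each type appears exactly once in any diagram built from `filed_under`, while `related_to` still surfaces the overlap that the multiple-inheritance analogy describes.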

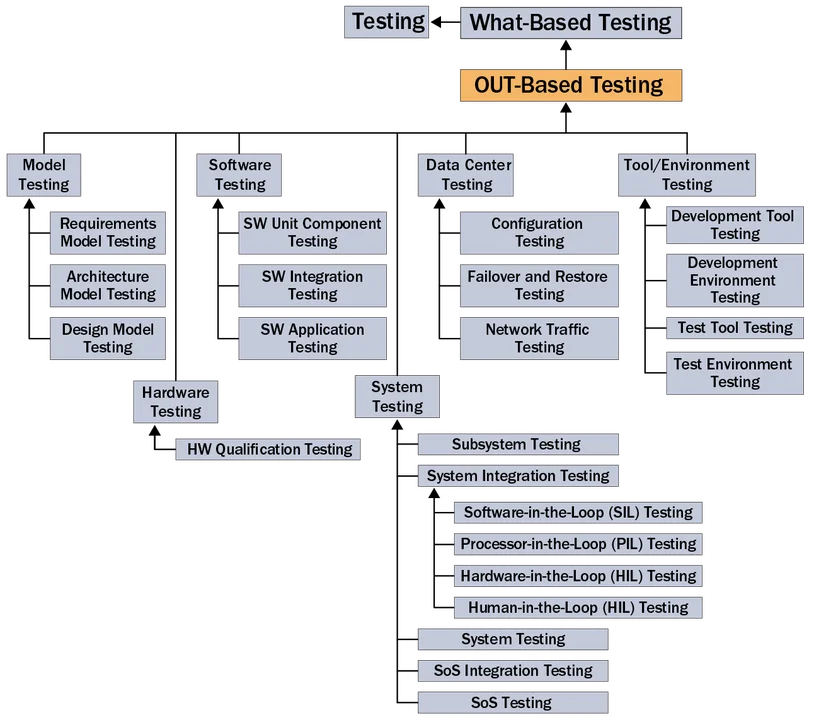

Clearly, a single figure showing all of the hierarchical levels in the taxonomy would be overwhelming. For this reason, I created lower-level diagrams to show the classes of the fourth-level testing types. For example, the following figure shows the decomposition of the first third-level type of testing in the figure above.

Lower-level diagrams, such as the one above, finally show the actual concrete testing types being used during the development and operation of software-reliant systems. Due to the large number of testing types, the similarities between many of them, and the occasional use of synonyms within the testing community, it is important that each node in the taxonomy has an associated definition. The combination of the taxonomy and the associated definitions then becomes an ontology of testing types.

Using the Taxonomy

As briefly mentioned at the beginning of this post, the entire taxonomy of testing types can be used as a checklist to ensure that no important test type is accidentally overlooked. Those doing test planning, as well as other testing stakeholders, can essentially "spider" their way down all of the branches of the taxonomy's hierarchical tree-like structure, constantly considering the questions "Is this type of testing relevant? Will using it be cost-effective? Do we have sufficient resources to do this type of testing? Should it be used across the entire system or only in certain parts of the architecture and under certain conditions?"
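The checklist walk described above can be sketched as a depth-first traversal of a tree. The fragment below is a minimal illustration under stated assumptions: `TAXONOMY` is a made-up three-level fragment whose intermediate node names are placeholders, and `walk`, `build_checklist`, and `open_items` are hypothetical helper names. Only the four planning questions are taken from the text.

```python
# The four planning questions quoted in the text.
QUESTIONS = (
    "Is this type of testing relevant?",
    "Will using it be cost-effective?",
    "Do we have sufficient resources to do this type of testing?",
    "Should it be used across the entire system or only in certain parts "
    "of the architecture and under certain conditions?",
)

# ASSUMPTION: a tiny, made-up fragment; the intermediate node names are
# placeholders, not nodes of the real taxonomy.
TAXONOMY = {
    "What are we testing?": {
        "placeholder subclass A": ["unit testing", "integration testing"],
    },
    "Why are we testing?": {
        "placeholder subclass B": ["acceptance testing"],
    },
}


def walk(node, path=()):
    """Depth-first traversal yielding the full path to every leaf."""
    if isinstance(node, dict):
        for name, child in node.items():
            yield from walk(child, path + (name,))
    else:
        for leaf in node:
            yield path + (leaf,)


def build_checklist(taxonomy):
    """One entry per concrete test type, with every question unanswered."""
    return [
        {"path": path, "answers": dict.fromkeys(QUESTIONS)}
        for path in walk(taxonomy)
    ]


def open_items(checklist):
    """Names of test types that still have an unanswered question."""
    return [
        item["path"][-1]
        for item in checklist
        if None in item["answers"].values()
    ]


checklist = build_checklist(TAXONOMY)
```

A planner would record an answer for each question on each entry; `open_items` then lists the test types that have not yet been fully considered, which is the "nothing falls through the cracks" check in code form.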

Another way the taxonomy can help is by enabling testers to use divide and conquer as a technique to attack the size and complexity of system and software testing in terms of the different types of testing. It can help one see the similarities between related types of testing and make it easier to learn and remember the different types of testing.

Blog entries and conference presentations are too short to properly document such a rich ontology. For this reason, I am currently writing my second testing book, which is based on this taxonomy. In it, I will clearly define and discuss each testing type including its purpose, potential applicability, and subtypes.

In future blog entries in this series, I'll summarize the other major subclasses of this taxonomy.

Additional Resources

On September 9, 2015, from 12:30 to 2:30 p.m. ET, I will be presenting an SEI Webinar on A Taxonomy of Testing Types. For more information or to register for the webinar, please click here.

In the meantime, I will be presenting this taxonomy at next month's 10th Annual FAA Verification and Validation Summit and giving a more detailed tutorial on it at the SEI's 2015 Software Solutions Conference at the Hilton Crystal City in Arlington, Va., on November 16-18. For more information about the conference, please click here.

I have authored several posts on testing on the SEI blog including a series on common testing problems, a post presenting variations on the V model of testing, and, most recently, shift-left testing.
