The CERT Insider Threat Database

Hi, this is Randy Trzeciak, technical team lead for the Insider Threat Outreach & Transition group at the Insider Threat Center at CERT. Since 2001, our team has been collecting information about malicious insider activity within U.S. organizations. In each of the incidents we have collected, the insider was found guilty in a U.S. court of law.

Over the past year, our team has received feedback from practitioners on the front line of insider threat prevention, detection, and response. This feedback shows that while malicious insider activity is a great concern, non-malicious (accidental) activity is just as problematic, and organizations need controls for it as well. We have also received feedback from individuals in organizations that have locations outside the U.S. They want to know how insider activity in U.S. cases compares to insider incidents in organizations outside the U.S. Based on this feedback, we have begun collecting information about incidents involving accidental insider activity, such as accidental data disclosure or the accidental disruption of a critical service due to an employee's unintentional actions (e.g., clicking on an infected attachment in an email message).

To date, we have collected approximately 700 cases of insider activity that resulted in the disruption of an organization's critical information technology (IT) services; the use of IT to commit fraud against an organization; the use of IT in the theft of intellectual property or national security espionage; as well as other cases where an insider used IT in a way that should have been a concern to an organization. This data provides the foundation for all of our insider threat research, our insider threat lab, insider threat assessments, workshops, exercises, and the models developed to describe how the crimes tend to evolve over time.

The following are the sources of information used to code insider threat cases:

- Public sources of information
  - Media reports
  - Court documents
  - Publications
- Non-public sources of information
  - Law enforcement investigations
  - Organization investigations
  - Interviews with victim organizations
  - Interviews with convicted insiders

Below are the descriptions of the types of information we collect about each incident. These descriptions should provide some insight into how we use the information for analysis and for drawing conclusions about potentially problematic insider activity.

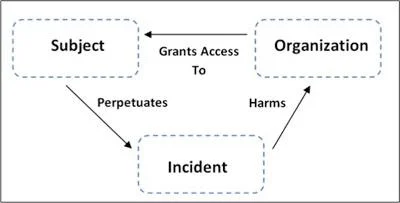

Information about three entities is needed when coding insider threat cases: the organization(s) involved, the individual perpetrator (subject), and the details of the incident. The figure above shows the primary relationships among these three entities.
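As a rough illustration of how the three entities might fit together in a coding database, the Python sketch below models an incident that links one subject to one or more organizations. The class and field names (`Incident`, `Subject`, `Organization`, `OrgRole`, `case_id`, and so on) are hypothetical and are not the Insider Threat Center's actual schema; the roles simply mirror the organization types listed in the Organization Attributes table.

```python
from dataclasses import dataclass, field
from enum import Enum


class OrgRole(Enum):
    """Organization type values from the coding table (hypothetical encoding)."""
    VICTIM = "victim"
    BENEFICIARY = "beneficiary"
    OTHER = "other"


@dataclass
class Organization:
    name: str
    role: OrgRole
    based_in_us: bool


@dataclass
class Subject:
    # Hypothetical identifier; real case records may be anonymized.
    subject_id: str
    motives: list[str] = field(default_factory=list)


@dataclass
class Incident:
    case_id: str
    subject: Subject
    # One incident may involve several organizations, e.g. a victim
    # organization and its trusted business partner.
    organizations: list[Organization] = field(default_factory=list)

    def victims(self) -> list[Organization]:
        """Return the organizations coded as victims of this incident."""
        return [o for o in self.organizations if o.role is OrgRole.VICTIM]


incident = Incident(
    case_id="case-001",
    subject=Subject(subject_id="subj-001", motives=["financial"]),
    organizations=[
        Organization("Example Corp", OrgRole.VICTIM, based_in_us=True),
        Organization("Partner LLC", OrgRole.OTHER, based_in_us=False),
    ],
)
print([o.name for o in incident.victims()])  # ['Example Corp']
```

Holding the organizations in a list on the incident, rather than in a single field, reflects the fact that multiple organizations can be involved in one incident.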

Organization Data

Multiple organizations can be involved in a single incident. An organization that is negatively impacted by an incident is designated as a "victim organization." Incidents may also involve a victim organization's trusted business partner.

Organization Attributes

| Organization Subcategory | Attributes |
| --- | --- |
| Organization descriptors | Name, address, relation to insider |
| Organization type | Victim, beneficiary, other |
| Organization description | Description of the organization, including its industry sector |
| Based in the U.S. | Location of the organization; whether it is based in the United States |
| Organization issues | Work environment; layoffs, mergers, acquisitions, and other workplace events that may have contributed to an insider's decision to act |
| Opportunity provided to insider | Actions taken by the organization that may have contributed to the insider's decision to act, or the organization's failure to act when observables were available |
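The organization attributes could be captured as a flat record, one per organization per incident. In the minimal sketch below, `OrganizationRecord`, `OrgType`, and `split_by_location` are hypothetical names chosen for illustration. The helper shows one way the "Based in the U.S." attribute could support the comparison between U.S. and non-U.S. incidents that practitioners have asked about.

```python
from dataclasses import dataclass, field
from enum import Enum


class OrgType(Enum):
    """Organization type values from the coding table."""
    VICTIM = "victim"
    BENEFICIARY = "beneficiary"
    OTHER = "other"


@dataclass
class OrganizationRecord:
    name: str                 # organization descriptor
    relation_to_insider: str  # e.g. "employer" (hypothetical free text)
    org_type: OrgType
    sector: str               # industry sector from the organization description
    based_in_us: bool
    # Workplace events (layoffs, mergers, ...) that may have contributed.
    issues: list[str] = field(default_factory=list)
    # Organizational actions, or missed observables, that gave the insider an opening.
    opportunities: list[str] = field(default_factory=list)


def split_by_location(records: list[OrganizationRecord]):
    """Partition records into (U.S.-based, non-U.S.-based) lists."""
    us = [r for r in records if r.based_in_us]
    non_us = [r for r in records if not r.based_in_us]
    return us, non_us


records = [
    OrganizationRecord("Example Bank", "employer", OrgType.VICTIM,
                       "financial services", True, issues=["layoffs"]),
    OrganizationRecord("Example GmbH", "employer", OrgType.VICTIM,
                       "manufacturing", False),
]
us, non_us = split_by_location(records)
print(len(us), len(non_us))  # 1 1
```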

Subject Data

Details about an insider may be limited, especially in cases involving sensitive information or those where the insider is not prosecuted. Whenever possible, we collect demographic information about the insider, which can be used to generate insider profiles and incident statistics.

Subject Attributes

| Subject Subcategory | Attributes |
| --- | --- |
| Descriptors | Name, gender, age, citizenship, residence, education, employee title/type/status, departure date, tenure, partner relationship, access, position |
| Motives and expectations | Motives (financial, curiosity, ideology, recognition, external benefit), unmet expectation (promotion, workload, financial, usage) |
| Concerning behaviors | Tardiness, insubordination, absences, complaints, drug/alcohol abuse, disgruntlement, coworker/supervisor conflict, violence, harassment, poor performance, poor hygiene, etc. |
| Violation history | Security violations, resource misuse, complaints, background deception |
| Consequences | Reprimands, transfers, demotion, HR report, termination, suspension, access revocation, counseling |
| Mental history | Evaluated, delusional, treatment, depression, psychiatric diagnosis/medication, suicidal, violence |
| Substance abuse | Alcohol, hallucinogens, marijuana, amphetamines, cocaine, sedatives, heroin, inhalants |
| Planning and deception | Prior planning activities, explicit deceptions |
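Because subject details are often incomplete, statistics computed over coded subjects have to tolerate missing values. This hypothetical sketch (`SubjectRecord`, `motive_counts`, and `median_age` are illustrative names, not the center's tooling) tallies coded motives and computes a median age using only the subjects whose age is known.

```python
from collections import Counter
from dataclasses import dataclass, field
from statistics import median
from typing import Optional


@dataclass
class SubjectRecord:
    subject_id: str
    age: Optional[int] = None  # None when the age is not known
    # Coded motives, e.g. "financial", "curiosity", "ideology", "recognition"
    motives: list[str] = field(default_factory=list)


def motive_counts(subjects: list[SubjectRecord]) -> Counter:
    """Count subjects per coded motive; subjects with no coded motive
    are counted once under "unknown"."""
    counts: Counter = Counter()
    for s in subjects:
        if s.motives:
            # A subject can have several motives; count each motive once.
            counts.update(set(s.motives))
        else:
            counts["unknown"] += 1
    return counts


def median_age(subjects: list[SubjectRecord]) -> Optional[float]:
    """Median age over subjects with a known age, or None if no ages are known."""
    ages = [s.age for s in subjects if s.age is not None]
    return median(ages) if ages else None


subjects = [
    SubjectRecord("s1", age=34, motives=["financial"]),
    SubjectRecord("s2", motives=["financial", "recognition"]),
    SubjectRecord("s3", age=41),
]
print(motive_counts(subjects))
print(median_age(subjects))  # 37.5
```

Reporting the "unknown" count alongside the coded motives keeps gaps in the data visible instead of silently shrinking the denominator.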

Incident Data

Information about a specific incident is collected to describe individual actions taken to set up the attack, vulnerabilities exploited during the attack, steps taken to conceal the attack, how the incident was detected, and the impact the attack had on the victim organization. When available, data is collected on actions taken by the organization in response to the actions, events, and conditions that may have contributed to an insider's decision to carry out an attack.

Incident Attributes

| Incident Subcategory | Attributes |
| --- | --- |
| Case summary | Incident dates, duration, critical infrastructure sector, prosecution |
| Conspirators | Accomplice identifier, type of collusion, relationships to insider |
| Information sources | Origination, type |
| Incident chronology | Sequence of date, place, event |
| Investigation and capture | How identified and caught |
| Case outcome | Indictment, subject's story, sentence, case outcome |
| Recruitment | Outside/competitor induced, insider collusion, outsider collusion, acted alone, reasons for collusion |
| IT accounts used | Subject's, organizations', system administrators', database administrators', co-workers', authorized third party, shared, backdoor |
| Outcome | Data copied/deleted/read/modified/created/disclosed, used in ID theft, unauthorized document created, system blocked |
| Impact | Description, financial |
| How detected | Software, information system, audit, nontechnical, system failure |
| Who detected | Self-reported, IT staff, other internal; customer, law enforcement, competitor, other external |
| Log files used | System files, email, remote access, ISP |
| Who responded | Incident response team, management, other internal |
| Vulnerabilities exploited | Sequence of exploit description, vulnerability grouping |
| Technical methods | Technical methods used to set up and/or carry out the attack (e.g., hardware device, malicious code, modified logs, compromised account, sabotaged backups, modified backups) |
| Concealment methods | Concealment methods used to hide technical and non-technical methods |
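Several incident attributes are closed category lists. The sketch below encodes the "How detected" and "Who detected" categories as Python enums and derives an incident's duration from its coded start and end dates. It assumes that the semicolon in the "Who detected" row separates internal detectors from external ones; `HowDetected`, `WhoDetected`, `IncidentDetection`, and the `is_internal` property are hypothetical names.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class HowDetected(Enum):
    SOFTWARE = "software"
    INFORMATION_SYSTEM = "information system"
    AUDIT = "audit"
    NONTECHNICAL = "nontechnical"
    SYSTEM_FAILURE = "system failure"


class WhoDetected(Enum):
    SELF_REPORTED = "self-reported"
    IT_STAFF = "IT staff"
    OTHER_INTERNAL = "other internal"
    CUSTOMER = "customer"
    LAW_ENFORCEMENT = "law enforcement"
    COMPETITOR = "competitor"
    OTHER_EXTERNAL = "other external"

    @property
    def is_internal(self) -> bool:
        # Assumption: the categories before the semicolon are internal detectors.
        return self in INTERNAL_DETECTORS


INTERNAL_DETECTORS = frozenset(
    {WhoDetected.SELF_REPORTED, WhoDetected.IT_STAFF, WhoDetected.OTHER_INTERNAL}
)


@dataclass
class IncidentDetection:
    case_id: str
    start: date  # assumed: first known date of the insider activity
    end: date    # assumed: last known date of the insider activity
    how: HowDetected
    who: WhoDetected

    @property
    def duration_days(self) -> int:
        """Incident duration in days, derived from the coded dates."""
        return (self.end - self.start).days


incident = IncidentDetection(
    "case-001", date(2010, 1, 4), date(2010, 3, 15),
    HowDetected.AUDIT, WhoDetected.IT_STAFF,
)
print(incident.duration_days, incident.who.is_internal)  # 70 True
```

Encoding the categories as enums rather than free text means a misspelled category fails at coding time, for example `WhoDetected("self reported")` raises `ValueError`.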

We hope you found this entry helpful in understanding the type of data the CERT Insider Threat Center collects, analyzes, and uses. If you have any questions or comments related to our coding process, email us using the feedback link.
